Eon Systems successfully closes the sensorimotor loop, driving a physically simulated fruit fly using a biologically accurate whole-brain connectome.

- A Historic Qualitative Threshold: For the first time, a complete, connectome-derived whole-brain emulation (over 125,000 neurons) has been successfully embedded into a physics-simulated body to produce multiple naturalistic behaviors.

- Driven by Biology, Not Just Algorithms: Unlike AI models that rely on reinforcement learning to mimic movement, this emulation uses a simple “leaky integrate-and-fire” network built from the electron microscopy reconstruction, synaptic connections, and predicted neurotransmitter identities of a real Drosophila fruit fly.

- The Path to Mammalian Emulation: With the sensorimotor loop completely closed in a fly, the hurdle for emulating a 70-million-neuron mouse brain—and eventually a human brain—shifts from a question of fundamental possibility to a question of scale.

For decades, the concept of the Singularity has belonged almost exclusively to the realm of artificial intelligence—minds built from the ground up using code and synthetic architectures. But the tantalizing counterpart to AI has always been whole-brain emulation: the idea that if we could copy a biological brain, neuron by neuron and synapse by synapse, we could actually run it. Today, that theoretical milestone has become a demonstrated reality. Eon Systems PBC has unveiled what is believed to be the world’s first embodiment of a whole-brain emulation capable of producing multiple, distinct behaviors. The ghost is no longer in the machine; the machine is becoming the ghost.

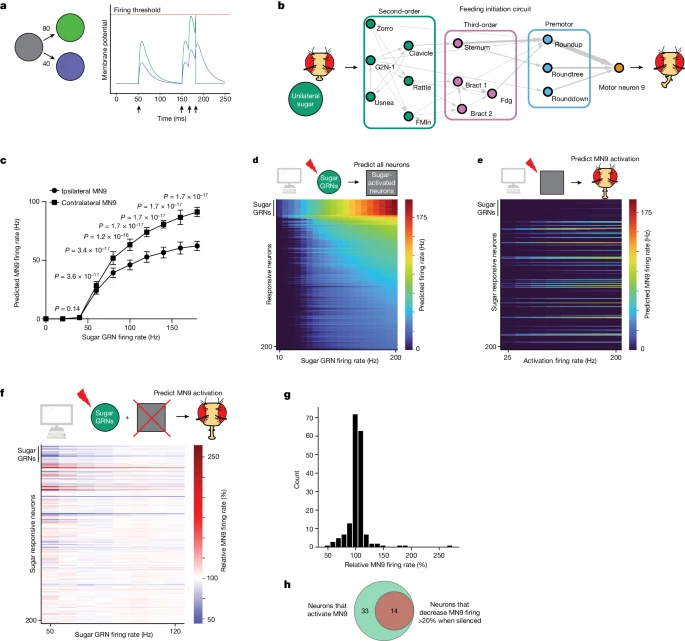

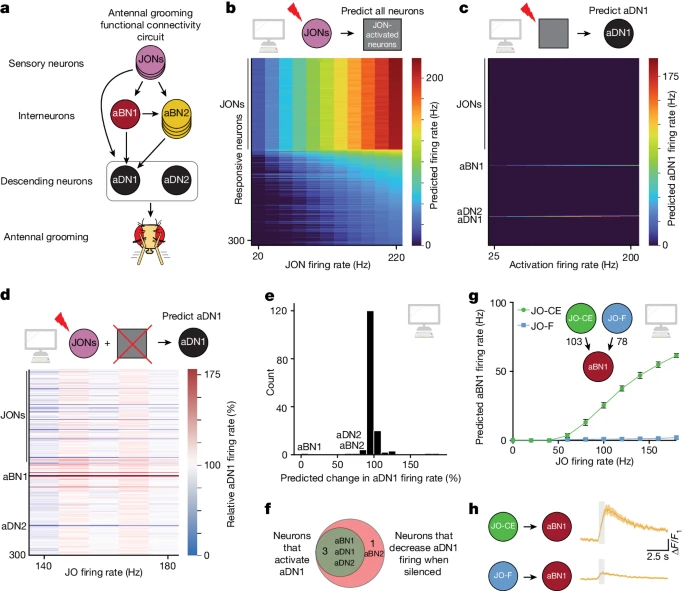

The foundation of this breakthrough rests on a monumental mapping of the adult Drosophila melanogaster (fruit fly) central brain connectome. As detailed in a 2024 Nature publication by Eon senior scientist Philip Shiu and collaborators, this brain template contains more than 125,000 neurons and a staggering 50 million synaptic connections. Because a single fly neuron can connect to hundreds of downstream partners, working out how this dense web generates behavior is extremely difficult. To tackle this, the researchers built a computational model using FlyWire connectome data and machine learning predictions of neurotransmitter identities.
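To make the idea of a connectome-derived model concrete, here is a minimal Python sketch of how a signed weight table could be assembled from a synapse list and per-neuron neurotransmitter predictions. Everything in it is hypothetical: the neuron IDs, the synapse counts, the `signed_weights` helper, the `unit_weight` scale factor, and the sign convention (acetylcholine treated as excitatory, GABA and glutamate as inhibitory, other transmitters ignored), which is a common simplification assumed here rather than a description of Eon's or FlyWire's actual pipeline.

```python
from collections import defaultdict

# Hypothetical toy data: (presynaptic_id, postsynaptic_id, synapse_count).
# Real connectome tables are vastly larger; these IDs and counts are invented.
EDGES = [
    ("s1", "n1", 12),
    ("s1", "n2", 3),
    ("n1", "m1", 20),
    ("n2", "m1", 8),
]

# Hypothetical predicted neurotransmitter for each presynaptic neuron.
NT = {"s1": "ACh", "n1": "ACh", "n2": "GABA"}

# Assumed sign convention: acetylcholine excites, GABA and glutamate inhibit.
SIGN = {"ACh": 1, "GABA": -1, "Glu": -1}


def signed_weights(edges, nt, sign, unit_weight=1.0):
    """Return {pre: {post: weight}} with weight = sign * synapse_count * unit_weight.

    Presynaptic neurons whose transmitter is missing from `sign` are skipped.
    """
    w = defaultdict(dict)
    for pre, post, count in edges:
        s = sign.get(nt.get(pre), 0)  # unknown or unmapped transmitter -> 0
        if s != 0:
            w[pre][post] = w[pre].get(post, 0.0) + s * count * unit_weight
    return dict(w)


W = signed_weights(EDGES, NT, SIGN)
print(W["n2"]["m1"])  # -8.0
```

Scaling each connection by its synapse count keeps the mapping from anatomy to model parameters direct; at full-brain scale a sparse matrix would replace the nested dictionaries.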

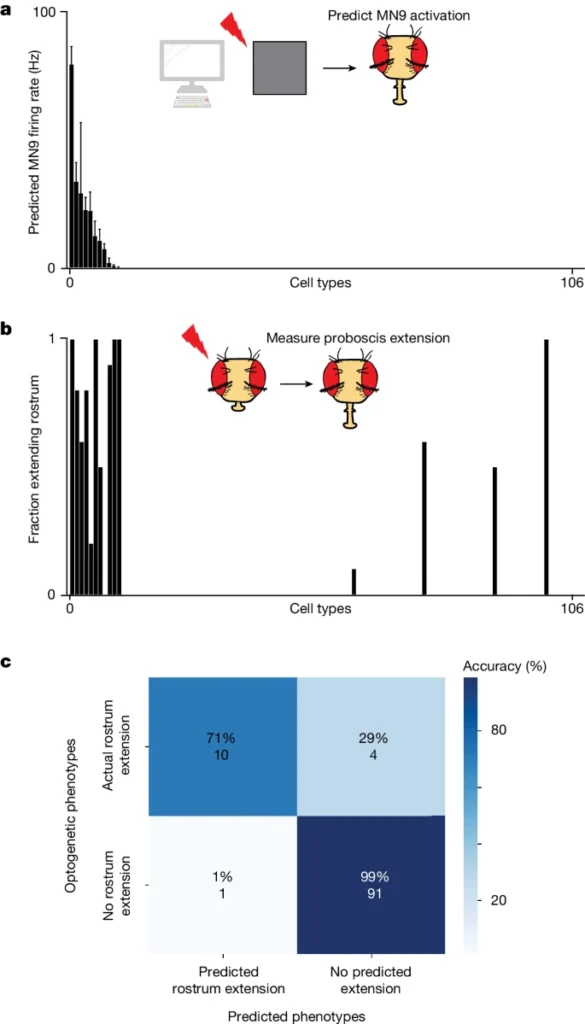

At the core of this virtual brain is a “leaky integrate-and-fire” computational model. In this system, when an upstream neuron spikes, it shifts the membrane potential of each downstream neuron by an amount proportional to the connectivity weight mapped from the real fly, with an excitatory or inhibitory sign set by the upstream neuron’s predicted neurotransmitter. Between inputs, each neuron’s potential leaks back toward its resting value; if it reaches a fixed threshold, the neuron fires a spike and its potential resets. Even when entirely disembodied, this model was able to predict motor behavior with 95% accuracy. But a brain without a physical form is just activation without physics: motor outputs with nowhere to go.
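The update rule described above can be sketched in a few lines of Python. This is an illustrative forward-Euler simulation, assuming a fixed time step, an instantaneous voltage jump per presynaptic spike, and made-up parameter values (`tau`, `v_rest`, `v_thresh` and the rest are placeholders, not the published model's constants). It also omits details a fuller model would typically include, such as refractory periods, synaptic time constants, and transmission delays.

```python
def simulate_lif(weights, external_input, n_steps, dt=0.1, tau=20.0,
                 v_rest=-52.0, v_reset=-52.0, v_thresh=-45.0):
    """Simulate a small leaky integrate-and-fire network with forward Euler.

    weights: {pre: {post: voltage jump in mV per presynaptic spike}}; the sign
        encodes excitation (+) or inhibition (-).
    external_input: callable(step) -> set of neuron ids forced to spike this step.
    Returns {neuron_id: number of spikes}.
    """
    # Collect every neuron that appears as a source or a target.
    neurons = set(weights)
    for targets in weights.values():
        neurons.update(targets)

    v = {n: v_rest for n in neurons}   # membrane potentials (mV)
    spikes = {n: 0 for n in neurons}   # spike counts
    fired = set()                      # neurons that spiked on the previous step

    for step in range(n_steps):
        # 1. Leak: every potential relaxes toward rest with time constant tau.
        for n in neurons:
            v[n] += (dt / tau) * (v_rest - v[n])
        # 2. Last step's spikes shift downstream potentials by the signed weight.
        for pre in fired:
            for post, w in weights.get(pre, {}).items():
                v[post] += w
        # 3. Neurons at or above threshold (or externally driven) spike and reset.
        forced = external_input(step)
        fired = set()
        for n in neurons:
            if n in forced or v[n] >= v_thresh:
                fired.add(n)
                spikes[n] += 1
                v[n] = v_reset
    return spikes


# Usage: drive "s" every 10 steps; the 8 mV synapse makes "a" follow each spike.
counts = simulate_lif({"s": {"a": 8.0}}, lambda step: {"s"} if step % 10 == 0 else set(), 100)
print(counts["s"], counts["a"])  # 10 10
```

With these placeholder values, an 8 mV input lifts the downstream neuron from -52 mV past the -45 mV threshold in a single step, while a lone 4 mV input simply decays back toward rest without producing a spike.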

Now, that brain finally has a body. Building upon Shiu’s whole-brain model, the NeuroMechFly v2 embodied simulation framework, and research by Özdil et al. on centralized brain networks, Eon Systems has integrated the emulated brain with a physics-simulated fly body within the MuJoCo engine. This combination closes the loop from perception to action. When sensory input flows in, neural activity propagates through the complete connectome, and motor commands flow out to dynamically drive a physically simulated body.
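The loop described above (sensory input in, activity through the network, motor commands out, body moves, new sensory input) can be illustrated with a deliberately tiny Python toy. It is not MuJoCo or NeuroMechFly: a damped point mass on a line stands in for the physics engine, and a two-neuron leaky integrate-and-fire network stands in for the connectome. All names (`lif_step`, `run_loop`, `FOOD_X`, the neuron labels) and all constants are hypothetical.

```python
# Toy closed sensorimotor loop: body state -> sensory spikes -> LIF network
# -> motor spikes -> force -> physics update -> new body state.
# All names, weights and constants are hypothetical illustrations.

V_REST, V_RESET, V_THRESH, TAU, DT_NEURAL = -52.0, -52.0, -45.0, 20.0, 0.1
WEIGHTS = {"sensor": {"motor": 8.0}}  # one excitatory synapse, strong enough to fire "motor"
FOOD_X, PUSH, DRAG, DT_BODY = 1.0, 10.0, 2.0, 0.01


def lif_step(v, prev_fired, forced):
    """Advance every neuron one step; return the set of neurons that spiked."""
    for n in v:
        v[n] += (DT_NEURAL / TAU) * (V_REST - v[n])  # leak toward rest
    for pre in prev_fired:
        for post, w in WEIGHTS.get(pre, {}).items():
            v[post] += w  # spike-triggered voltage jump
    fired = set()
    for n in v:
        if n in forced or v[n] >= V_THRESH:
            fired.add(n)
            v[n] = V_RESET
    return fired


def run_loop(n_steps=600):
    v = {"sensor": V_REST, "motor": V_REST}
    x, vel = 0.0, 0.0
    fired = set()
    motor_spikes = []
    for _ in range(n_steps):
        # Sense: the sensor neuron is driven while the body has not reached the food.
        forced = {"sensor"} if x < FOOD_X else set()
        # Think: propagate activity through the network.
        fired = lif_step(v, fired, forced)
        # Act: a motor spike applies a forward push on this step.
        force = PUSH if "motor" in fired else 0.0
        motor_spikes.append("motor" in fired)
        # Physics: damped point mass (forward Euler), standing in for a real engine.
        vel += DT_BODY * (force - DRAG * vel)
        x += DT_BODY * vel
    return x, vel, motor_spikes


x, vel, motor_spikes = run_loop()
print(f"final position {x:.2f}, velocity {vel:.4f}, motor spikes {sum(motor_spikes)}")
```

The point of the sketch is the ordering inside each step: because the sensor stops firing once the body reaches the target, motor output shuts off on its own. That feedback from body to brain is exactly what a disembodied, open-loop simulation cannot show.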

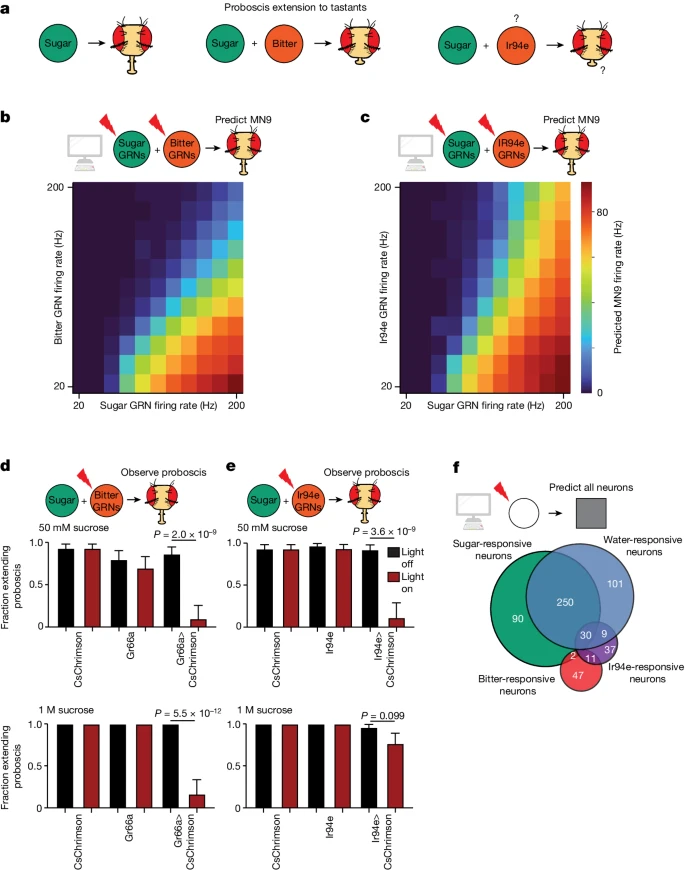

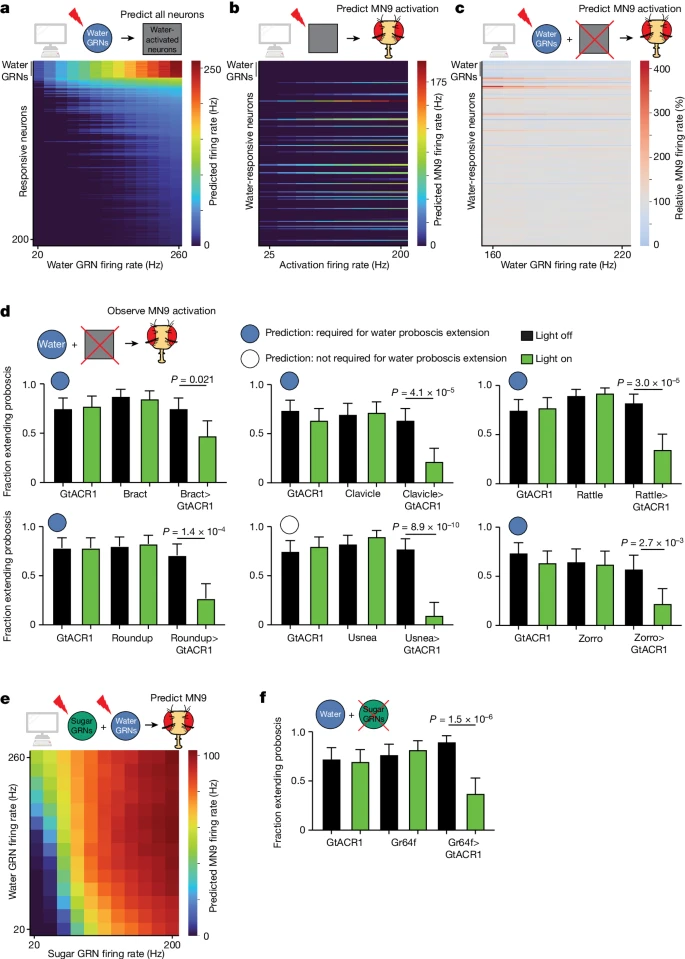

This embodiment allows researchers to observe complete sensorimotor transformations. For example, by computationally activating sugar-sensing or water-sensing gustatory neurons within the model, the system accurately predicts which neurons are required for feeding initiation and which elicit motor neuron firing. These predictions were rigorously validated in living flies using optogenetic activation. Similarly, activating mechanosensory neurons in the simulation successfully predicted the activation of the antennal grooming circuit. By modeling circuits using nothing but synapse-level connectivity and neurotransmitter data, the team generated accurate, testable hypotheses about how taste and touch pathways operate and interact.
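A minimal sketch of this kind of in-silico experiment, in the same Python toy style: drive chosen sensory neurons with random (Poisson) spike trains, read out downstream firing rates, then silence a candidate interneuron and check whether the motor response disappears. The circuit, the neuron names, the 200 Hz input rate, and the other parameters are hypothetical; the sketch illustrates the general activation-and-silencing logic, not the published protocol.

```python
import random

# Hypothetical toy circuit: two sugar-sensing inputs converge on an interneuron,
# which drives a feeding motor neuron. "other" is an unconnected bystander.
WEIGHTS = {
    "sugar_a": {"inter": 4.0},
    "sugar_b": {"inter": 4.0},
    "inter": {"motor": 8.0},
}
NEURONS = ["sugar_a", "sugar_b", "inter", "motor", "other"]


def firing_rates(stimulated, rate_hz=200.0, silenced=(), duration_s=1.0, seed=0,
                 dt_ms=0.1, tau_ms=20.0, v_rest=-52.0, v_thresh=-45.0):
    """Drive `stimulated` neurons with Poisson spikes and return rates in Hz.

    Neurons in `silenced` never spike, mimicking their removal from the circuit.
    """
    rng = random.Random(seed)                  # seeded for reproducibility
    p_spike = rate_hz * dt_ms / 1000.0         # chance of an input spike per step
    v = {n: v_rest for n in NEURONS}
    counts = {n: 0 for n in NEURONS}
    fired = set()
    n_steps = round(duration_s * 1000.0 / dt_ms)
    for _ in range(n_steps):
        for n in NEURONS:
            v[n] += (dt_ms / tau_ms) * (v_rest - v[n])  # leak toward rest
        for pre in fired:
            for post, w in WEIGHTS.get(pre, {}).items():
                v[post] += w                             # spike-triggered jump
        fired = set()
        for n in NEURONS:
            driven = n in stimulated and rng.random() < p_spike
            if n not in silenced and (driven or v[n] >= v_thresh):
                fired.add(n)
                counts[n] += 1
                v[n] = v_rest  # reset (reset potential assumed equal to rest)
    return {n: c / duration_s for n, c in counts.items()}


intact = firing_rates({"sugar_a", "sugar_b"})
lesioned = firing_rates({"sugar_a", "sugar_b"}, silenced={"inter"})
print("motor rate, intact:", intact["motor"], "Hz")
print("motor rate, inter silenced:", lesioned["motor"], "Hz")
```

Silencing `inter` abolishes motor firing in this toy, which is the sense in which a model can flag a neuron as required for a behavior before that prediction is tested in living flies.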

It is crucial to understand that this represents a fundamental qualitative threshold, not merely an incremental step. Prior work in this space has either modeled brains without bodies or animated bodies without biologically accurate brains. DeepMind and Janelia’s recent MuJoCo fly, for instance, relied on reinforcement learning (an AI policy mimicking biology) rather than connectome-derived neural dynamics. Conversely, projects like OpenWorm have attempted true embodiment, but were limited by the tiny nervous system of C. elegans (302 neurons) and its restricted behavioral repertoire. No one has previously demonstrated a complete emulated brain driving a physically simulated body through multiple naturalistic behaviors.

The implications of this breakthrough cascade upward at an astonishing rate. If a fruit fly brain can successfully close the sensorimotor loop in a physics simulation, the next steps are no longer a matter of kind, but of scale. Eon Systems is now setting its sights on producing the world’s largest connectome to date: a complete digital emulation of a mouse brain.

A mouse brain contains roughly 70 million neurons, about 560 times as many as a fruit fly. To achieve this, the team is currently amassing unprecedented amounts of connectomic and functional data. They are pairing expansion microscopy, to map every neural connection, with tens of thousands of hours of calcium and voltage imaging, to capture exactly how those networks activate in living tissue. This mammoth undertaking lays the critical groundwork for eventual human-scale emulation.

When you watch a simulation driven by this technology, you are not watching an animation, nor are you watching an algorithm trained to walk. You are watching a biologically derived template, wired neuron-to-neuron from electron microscopy data, experiencing its environment and moving its body.