A groundbreaking collaboration between Anthropic and Mozilla shows that AI can autonomously detect and help patch high-severity software vulnerabilities at unprecedented speeds, but the clock is ticking for defenders.

- Accelerated Detection: AI models can now independently discover high-severity zero-day vulnerabilities in complex, well-tested codebases like Firefox, finding 22 bugs in just two weeks.

- The Defense Advantage: Currently, AI is significantly better and cheaper at identifying and patching security flaws than it is at actively exploiting them, though this gap is expected to close rapidly.

- New Workflows: Successful AI-driven cybersecurity relies on “task verifiers” to ensure automated patches work correctly, alongside a new blueprint for collaborating with overwhelmed human maintainers.

In the ever-escalating arms race of cybersecurity, defensive strategies often struggle to keep pace with the sheer volume of software vulnerabilities. However, recent developments indicate a massive shift in how the tech industry will secure its digital infrastructure. As AI models evolve into world-class vulnerability researchers, they are proving capable of independently identifying high-severity zero-day flaws in highly complex, heavily scrutinized open-source software.

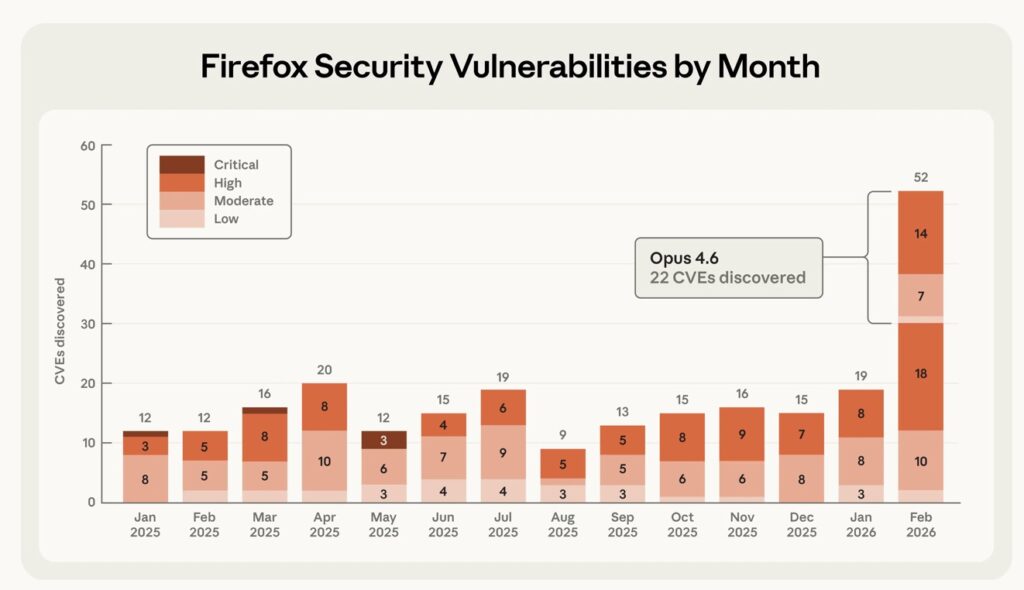

A recent collaboration involving the AI model Claude Opus 4.6 and researchers at Mozilla perfectly illustrates this paradigm shift. Over the course of just two weeks, the AI discovered 22 vulnerabilities in the Firefox browser. Notably, Mozilla classified 14 of these as high-severity—representing nearly a fifth of all high-severity Firefox vulnerabilities remediated in 2025. This partnership resulted in crucial fixes shipped to hundreds of millions of users in Firefox 148.0 and provides a compelling blueprint for the future of AI-enabled cybersecurity.

From Benchmarks to the Real World

The journey to discovering these flaws began with a transition from artificial testing environments to real-world applications. By late 2025, it became clear that advanced models were easily solving benchmark tests like CyberGym. To establish a more rigorous and realistic evaluation, researchers turned their attention to the Mozilla Firefox codebase.

Firefox was selected because it is one of the most thoroughly tested and secure open-source projects in the world. Securing a browser is exceptionally difficult: users constantly encounter untrusted content, so the browser’s defense mechanisms are critical. After verifying that the AI could identify historical common vulnerabilities and exposures (CVEs) in older versions of Firefox, the researchers moved on to the harder test: finding entirely novel, previously unreported bugs in the current build.

The initial focus was Firefox’s JavaScript engine, a distinct, highly critical component with a massive attack surface. The results were immediate. Within just twenty minutes of exploration, the AI identified a “Use After Free” vulnerability: a memory-safety flaw in which a program keeps using memory after it has been freed, which can allow attackers to overwrite data with malicious content.

Scaling Up the Search

Validating and submitting that first bug to Bugzilla, Mozilla’s issue tracker, was just the beginning. The AI simultaneously drafted a proposed patch to help human triage efforts. In the brief time it took researchers to validate that initial submission, the model had already found 50 more unique crashing inputs.

This rapid influx of data prompted direct engagement from Mozilla. Recognizing the potential of this automated approach, Mozilla encouraged the researchers to submit their findings in bulk. The operation scaled massively, scanning nearly 6,000 C++ files and resulting in 112 unique reports. The vast majority of the confirmed issues were patched in Firefox 148, highlighting a successful, transparent collaboration that helped researchers tune their AI to generate only the high-signal reports that maintainers actually needed.

The Exploit Gap

To truly understand the cybersecurity threat landscape, it is vital to measure an AI’s offensive capabilities alongside its defensive ones. Researchers developed a rigorous evaluation to see if the AI could not just find these bugs, but actually exploit them to read and write local files on a target system.

After several hundred attempts and approximately $4,000 in API credits, the model successfully turned a vulnerability into a working exploit in only two cases. Furthermore, these exploits were “crude”—they only functioned in a testing environment where crucial defensive layers, like the browser sandbox, had been intentionally disabled.

This reveals a critical reality: identifying vulnerabilities is currently an order of magnitude cheaper and easier for AI than creating functional exploits. While the fact that an AI could generate any working exploit is concerning, defenders currently hold a distinct advantage.

Equipping the Defenders of Tomorrow

To maintain this defensive edge, the software industry must adopt new best practices for AI-enabled security workflows. As models begin acting as “patching agents”—developing and validating bug fixes—they require reliable oversight mechanisms.

The most effective method discovered during this research is the use of “task verifiers.” These are trusted, automated tools that give the AI real-time feedback on its work. A successful task verifier must confirm two distinct things:

- The specific security vulnerability has been completely removed.

- The intended functionality of the program remains entirely intact, without introducing regressions.

When these verified, AI-generated patches are submitted to open-source maintainers—who are often severely overwhelmed by their workloads—they must be packaged effectively. The Firefox team noted that successful, trustworthy AI bug submissions should always include:

- Accompanying minimal test cases.

- Detailed proofs-of-concept.

- Candidate patches.
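
One way to enforce that checklist before filing is a small completeness check. The sketch below is an assumption for illustration only: `BugSubmission` and its field names are hypothetical and do not reflect Bugzilla's schema or any tooling described by the Firefox team.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class BugSubmission:
    """Hypothetical container for the three items maintainers asked for."""

    title: str
    minimal_test_case: str = ""
    proof_of_concept: str = ""
    candidate_patch: str = ""

    def missing_parts(self) -> list[str]:
        # Map each required item to its content; empty or whitespace-only
        # content counts as missing.
        required = {
            "minimal test case": self.minimal_test_case,
            "proof-of-concept": self.proof_of_concept,
            "candidate patch": self.candidate_patch,
        }
        return [name for name, value in required.items() if not value.strip()]

    def ready_to_file(self) -> bool:
        return not self.missing_parts()


draft = BugSubmission(
    title="Crash in a hypothetical parser",
    proof_of_concept="steps and input that trigger the crash",
)
print(draft.missing_parts())  # ['minimal test case', 'candidate patch']
print(draft.ready_to_file())  # False
```

A gate like this keeps incomplete reports out of a maintainer's queue, which matters when the reports are generated in bulk.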

The Urgency of the Moment

Frontier language models have officially arrived as formidable vulnerability researchers, extending their reach beyond web browsers to fundamental infrastructure like the Linux kernel. With tools like Claude Code Security entering limited research previews, these advanced discovery and patching capabilities are making their way directly into the hands of developers and maintainers.

The tech community cannot afford to be complacent. The current gap between an AI’s ability to discover a bug and its ability to exploit it is unlikely to last. If and when future models break through the exploitation barrier, robust safeguards will be necessary to prevent malicious misuse. Developers and organizations must use this current window of opportunity to redouble their defensive efforts, integrate AI into their security pipelines, and secure their software before the offensive capabilities catch up.