A proposed General Resolution sparked a complex debate on LLMs, ethics, and open-source philosophy—proving the community isn’t quite ready to lay down the law.

- A Paused Policy: A draft General Resolution (GR) to establish rules for AI-assisted contributions in Debian was recently proposed but ultimately shelved, leaving the project to rely on existing policies for now.

- The Terminology Trap: Developers clashed over how to even define the technology, struggling to separate the marketing buzzword of “AI” from the specific realities of Large Language Models (LLMs).

- Community and Ethics: The debate expanded far beyond code quality, touching on the ethical implications of AI training data, copyright concerns, and the risk of stunting the growth of junior developers.

The open-source community is no stranger to philosophical debates, and the integration of artificial intelligence into software development is proving to be its latest crucible. Debian, one of the oldest and most respected Linux distributions, recently found itself wrestling with the question of whether—and how—to accept AI-generated contributions.

The spark was lit in mid-February when Debian developer Lucas Nussbaum introduced a draft General Resolution (GR) to clarify the project’s stance. Although the draft never went to a formal vote, the ensuing conversation illuminated the complexities open-source projects face as generative AI becomes mainstream.

The Proposal: Safeguards and Disclosure

Nussbaum’s initial draft didn’t seek to ban AI tools; rather, it aimed to regulate them. Under his proposal, AI-assisted contributions (whether partially or fully generated by an LLM) would be welcomed, provided they met strict conditions. Contributors would need to explicitly disclose when a significant portion of their work was unedited AI output, using tags like [AI-Generated].

Crucially, the human submitter would bear ultimate responsibility. They would be required to fully understand the code and vouch for its technical merit, security, and license compliance. The draft would also have prohibited feeding sensitive or non-public Debian information into generative AI tools.

The Terminology Trap

Before Debian could decide what to do about AI, developers realized they couldn’t even agree on what “AI” meant.

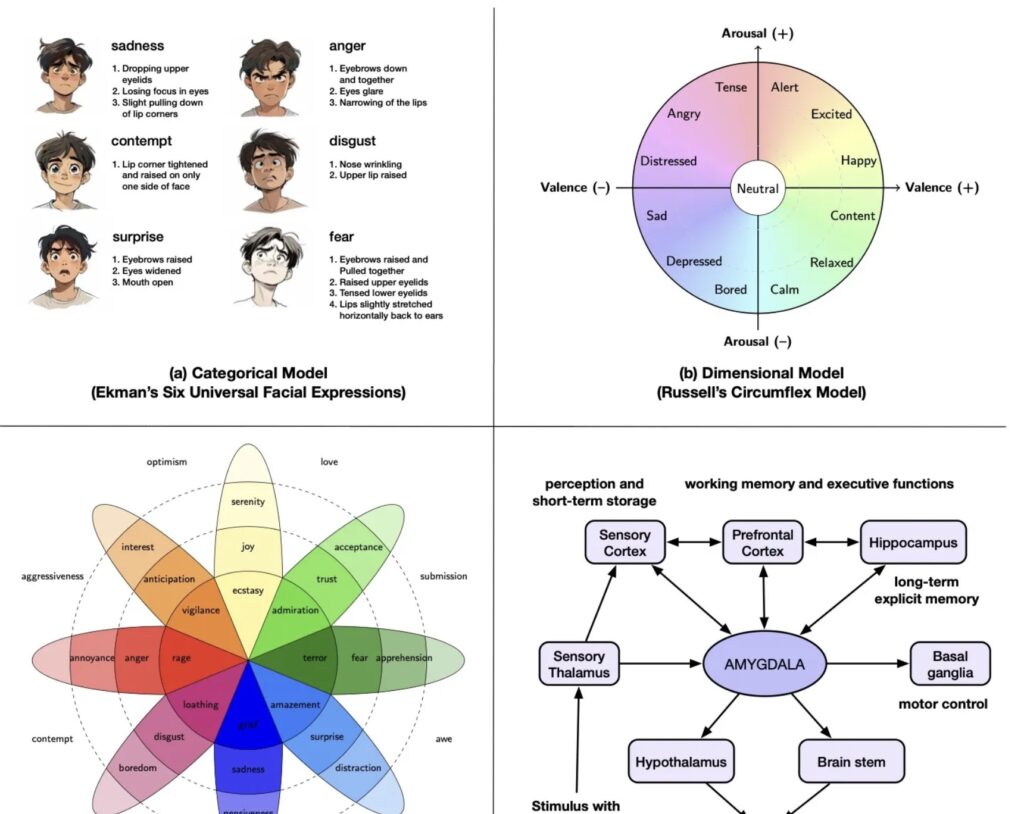

Russ Allbery pointed out that “AI” has become a sloppy marketing catch-all, comparing the attempt to pin it down to “trying to nail Jell-O to a tree.” He argued that durable policy requires specific terminology, noting the vast difference between Large Language Models (LLMs) and techniques like reinforcement learning. Sean Whitton and Andrea Pappacoda echoed this, urging the project to set clear boundaries and focus specifically on LLMs to avoid alienating contributors with overly broad bans.

Nussbaum pushed back, comparing automated code generation to earlier debates over proprietary security tools and over the Linux kernel’s use of the proprietary BitKeeper version-control system. To him, the specific technology mattered less than the project’s overall stance on automated tooling.

The Human Cost: Onboarding and Community

Perhaps the most fascinating aspect of the debate was its focus on community dynamics. Simon Richter voiced a critical concern: the “onboarding problem.” In a healthy open-source ecosystem, guiding a junior developer through basic tasks results in a transfer of knowledge and a capable new community member. If an AI agent completes those same basic tasks, the problem is solved, but no human learns anything.

Richter worried that accepting AI-driven “drive-by” contributions could disrupt the pipeline of new entrants, turning human maintainers into mere proxies between AI tools and the codebase. Conversely, Ted Ts’o argued that gatekeeping contributors who use AI would be self-defeating, and Nussbaum suggested that AI tools might actually make complex tasks more accessible to newcomers.

Ethics, Copyright, and “Slop”

The ethical and legal dimensions of LLMs came under heavy scrutiny. Matthew Vernon highlighted the questionable practices of the companies behind tools like ChatGPT and Claude, accusing them of systematically scraping intellectual property without regard for licensing or copyright. He and others also raised environmental concerns and the real-world harms of generative AI, arguing that Debian should take a firm stance against it.

At the extreme end, Thorsten Glaser suggested that upstream projects containing AI-generated code should be forced out of Debian’s main archive. Ansgar Burchardt quickly pointed out the fatal flaw in this hardline approach: it would effectively ban essential projects such as the Linux kernel and Python.

Interestingly, the actual quality of AI code was a secondary concern for some. As Allbery wryly noted, while people complain about AI-generated “slop,” humans are perfectly capable of writing terrible code, too: “Writing meaningless slop requires no creativity; writing really bad code requires human ingenuity.”

What is the Source?

The debate also surfaced a novel technical question: if code is generated by an LLM, what is its preferred form for modification? Nussbaum suggested it would be the text prompt used to generate the code. However, because LLM output is non-deterministic and models change frequently, a prompt that produces working code today might produce entirely different code tomorrow. That complicates the traditional open-source idea that the source reliably reproduces the program built from it.

Not Quite Ready

Nussbaum acknowledged that Debian isn’t ready for a project-wide mandate: developers lack a shared definition of AI, let alone a consensus on how to regulate it. Noting that the mailing-list discussion had remained civil and productive, he opted to step back rather than push the GR to a vote, predicting that any policy able to win support in the future would need to be highly nuanced.

For now, Debian will continue to handle AI models, upstream AI-assisted code, and LLM-generated contributions on a case-by-case basis using existing project guidelines. In an era where AI technology evolves by the week, taking a measured, wait-and-see approach might just be the most sensible decision Debian could make.