Multipurpose Backbone for a Wide Range of Computer Vision Tasks Without Fine-tuning

Key Points:

- Meta AI announces DINOv2, a self-supervised vision transformer model for various computer vision tasks.

- Its features can be used without fine-tuning, and it learns directly from images without text descriptions.

- The pretrained DINOv2 models already compete with CLIP and OpenCLIP on multiple tasks.

Meta AI has announced DINOv2, a self-supervised vision transformer that can serve as a backbone for almost any computer vision task without fine-tuning. Because it is pretrained without labels, it greatly reduces the amount of labeled data needed to build computer vision models, making them cheaper and more accessible to develop.

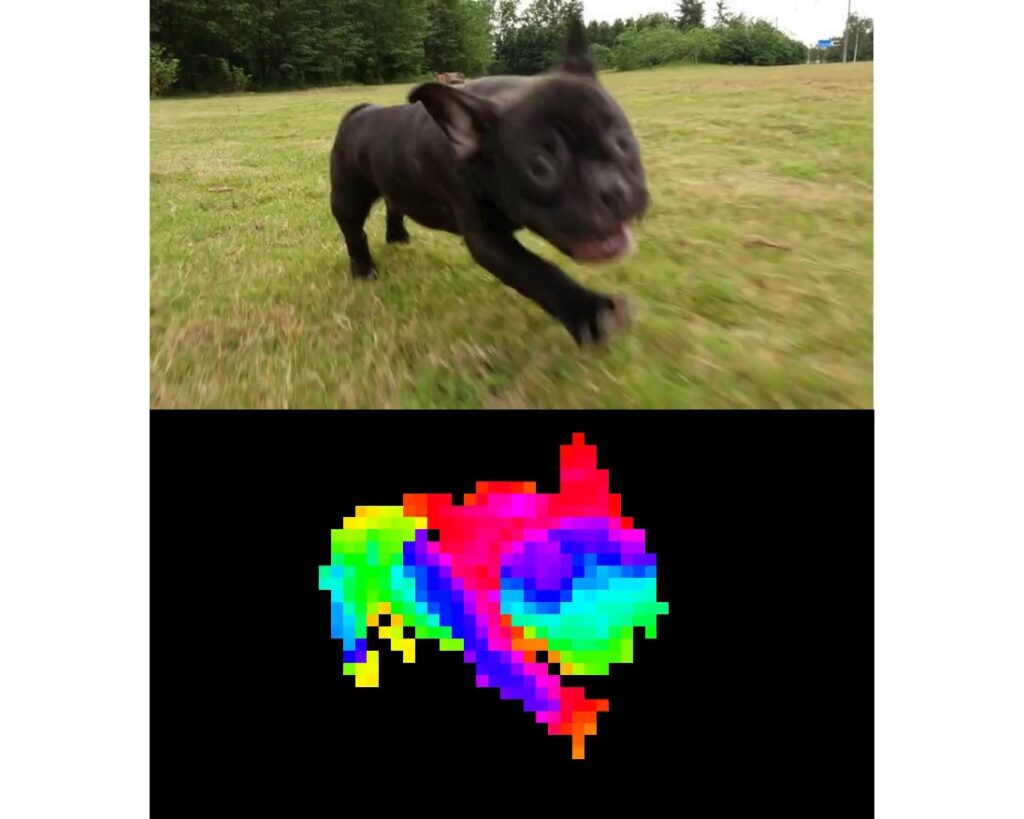

DINOv2 offers a multipurpose backbone for a variety of tasks, including image classification, segmentation, image retrieval, and depth estimation. Because it learns features directly from images rather than from text descriptions, it can capture local, pixel-level information that captions tend to miss, which improves performance on tasks that depend on that detail.

The model can learn from any collection of images; the released models were pretrained on a curated dataset of 142 million images without any labels or annotations. The resulting features are highly competitive with those of CLIP and OpenCLIP across a wide array of tasks.

DINOv2’s visual features can be used directly with classifiers as simple as linear layers, giving robust performance across domains without fine-tuning. This improves on the previous state of the art in self-supervised learning (SSL) and brings DINOv2’s performance in line with that of weakly supervised learning (WSL) features.
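Using frozen features "with classifiers as simple as linear layers" is usually called a linear probe: the backbone is never updated, and only a single linear layer is trained on its outputs. The sketch below illustrates the idea in plain Python, assuming the image embeddings have already been extracted by a frozen backbone (extraction is not shown, and the function names `train_linear_probe` and `predict` are hypothetical). It is not DINOv2's own evaluation code.

```python
import math
import random


def softmax(logits):
    # Subtract the max logit for numerical stability before exponentiating.
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def train_linear_probe(features, labels, num_classes, epochs=200, lr=0.1, seed=0):
    """Fit a softmax (multinomial logistic) linear classifier on frozen features.

    features: list of feature vectors, assumed precomputed by a frozen backbone.
    labels: list of integer class ids in [0, num_classes).
    Returns (weights, biases); only these linear parameters are trained.
    """
    rng = random.Random(seed)
    dim = len(features[0])
    weights = [[rng.gauss(0.0, 0.01) for _ in range(dim)] for _ in range(num_classes)]
    biases = [0.0] * num_classes
    n = len(features)
    for _ in range(epochs):
        grad_w = [[0.0] * dim for _ in range(num_classes)]
        grad_b = [0.0] * num_classes
        for x, y in zip(features, labels):
            logits = [sum(wi * xi for wi, xi in zip(w, x)) + b
                      for w, b in zip(weights, biases)]
            probs = softmax(logits)
            for c in range(num_classes):
                # Cross-entropy gradient w.r.t. logit c is p_c - 1[c == y].
                err = probs[c] - (1.0 if c == y else 0.0)
                grad_b[c] += err
                for j in range(dim):
                    grad_w[c][j] += err * x[j]
        # Full-batch gradient descent step on the linear layer only.
        for c in range(num_classes):
            biases[c] -= lr * grad_b[c] / n
            for j in range(dim):
                weights[c][j] -= lr * grad_w[c][j] / n
    return weights, biases


def predict(weights, biases, x):
    # Return the class with the highest linear score.
    scores = [sum(wi * xi for wi, xi in zip(w, x)) + b
              for w, b in zip(weights, biases)]
    return max(range(len(scores)), key=scores.__getitem__)


# Toy 2-D "embeddings" standing in for real backbone features.
feats = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
labs = [0, 0, 1, 1]
W, b = train_linear_probe(feats, labs, num_classes=2)
print([predict(W, b, f) for f in feats])
```

Real features are much higher-dimensional, and in practice one would use a library optimizer rather than this hand-written loop; the point is that the only trainable parameters are `weights` and `biases`.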

With state-of-the-art results in depth estimation, competitive results in semantic segmentation, and frozen features that can be used directly for instance retrieval, DINOv2 could reshape how computer vision systems are built. Its range of applications and strong out-of-distribution performance make it a significant development for AI and computer vision.
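Instance retrieval with frozen features typically comes down to a nearest-neighbor search over embeddings. The following is a minimal sketch, assuming each image has already been mapped to an embedding vector by the frozen backbone and that cosine similarity is the ranking metric (a common choice, assumed here rather than taken from the article). The helper names are hypothetical.

```python
import math


def l2_normalize(v):
    # Scale a vector to unit length so dot products equal cosine similarity.
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v] if norm > 0 else list(v)


def retrieve(query, gallery, k=5):
    """Return indices of the k gallery embeddings most cosine-similar to query."""
    q = l2_normalize(query)
    scored = []
    for idx, g in enumerate(gallery):
        sim = sum(a * b for a, b in zip(q, l2_normalize(g)))
        scored.append((sim, idx))
    # Highest similarity first; ties keep gallery order (stable sort).
    scored.sort(key=lambda t: t[0], reverse=True)
    return [idx for _, idx in scored[:k]]


# Toy embeddings standing in for frozen-backbone features.
gallery = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.9, 0.1, 0.0], [0.0, 0.0, 1.0]]
print(retrieve([2.0, 0.0, 0.0], gallery, k=2))
```

Because no training is involved, the same embeddings can be reused for retrieval and for other tasks; for large galleries an approximate nearest-neighbor index would replace the linear scan.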