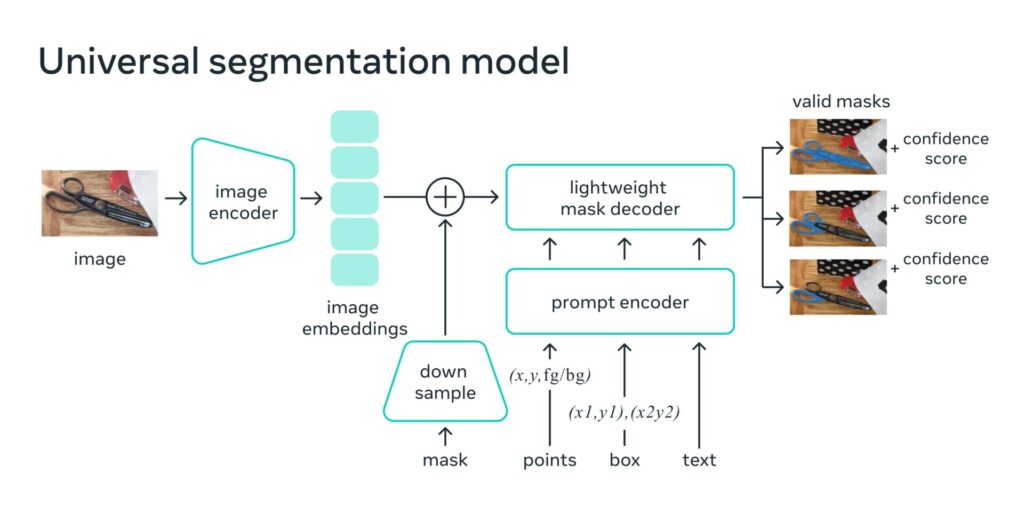

SAM enables one-click segmentation of any object from any photo or video, demonstrating zero-shot transfer capabilities to other segmentation tasks.

Meta AI has released the Segment Anything Model (SAM), a foundation model that aims to democratize image segmentation across various fields. The model is promptable: it can be adapted to specific tasks through prompts rather than retraining, which simplifies segmentation and reduces the need for specialized expertise and resources.

The technology has potential across a wide range of applications, including scientific image analysis, photo editing, and multimodal understanding of the world.

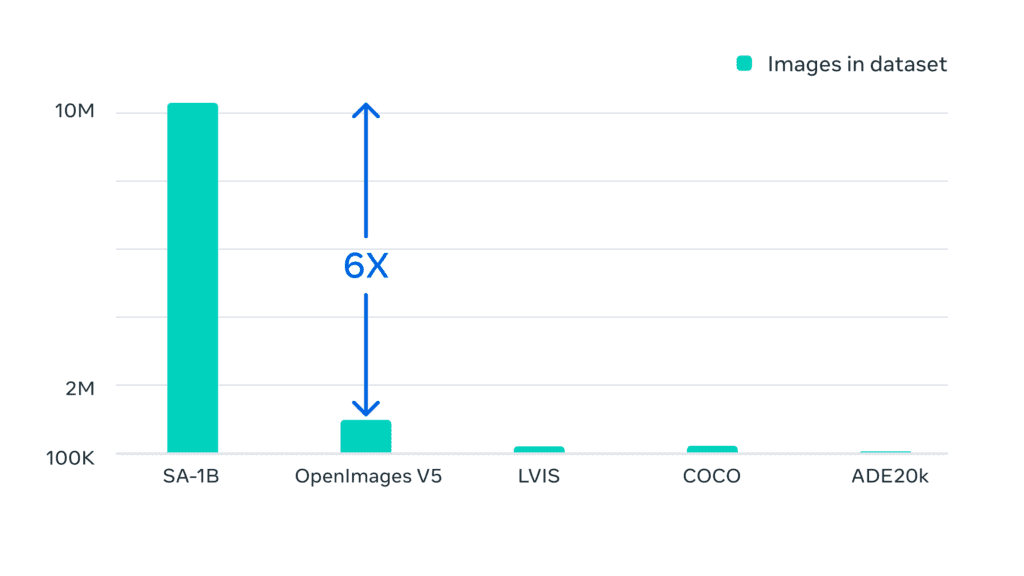

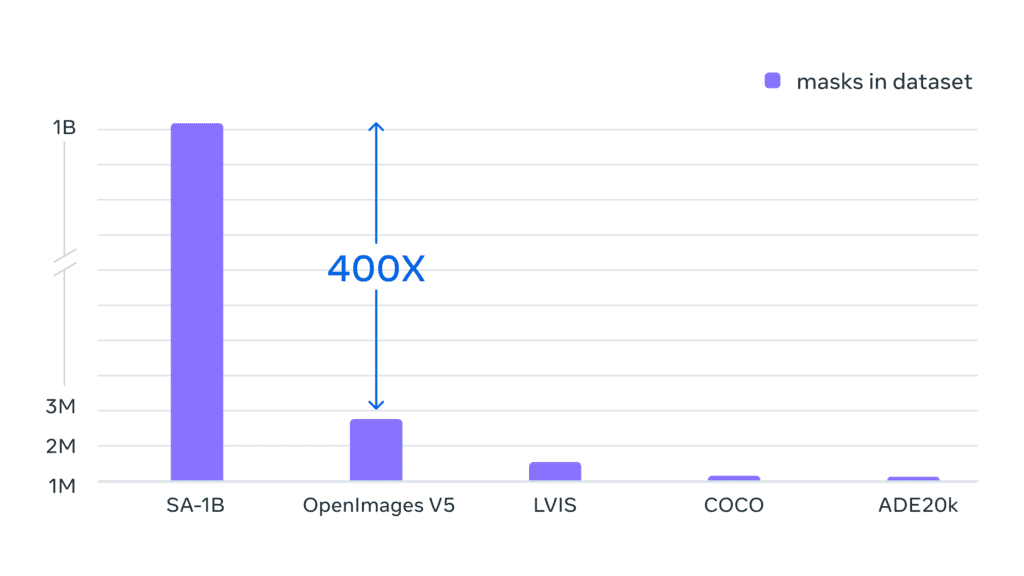

The Segment Anything project introduces not only SAM but also the Segment Anything 1-Billion mask dataset (SA-1B), the largest segmentation dataset released to date. The dataset will be made available for research purposes, while the model will be available under a permissive open license (Apache 2.0).

SAM is designed to generate masks for any object in any image or video, including objects and image types it did not encounter during training. This generality suits it to a diverse range of use cases and allows it to be applied to new image “domains” without additional training.

The potential applications of SAM are vast, from aiding in the study of natural occurrences on Earth and in space to improving creative applications such as collages and video editing. The model can also be integrated into larger AI systems for more comprehensive multimodal understanding, such as analyzing the visual and text content of a webpage.

As Meta AI continues to develop SAM, the range of use cases for the model is expected to grow, including applications that have not yet been identified.