Harnessing the “Noise” of the Universe to Generate Data with Unprecedented Energy Savings

- A Radical Energy Shift: Unlike traditional computers that fight against thermal noise, thermodynamic computers leverage these natural energy fluctuations to perform tasks with orders of magnitude less power.

- Physics-Inspired Generative AI: By mimicking the way neural networks handle data, researchers have generated images of digits from random noise in simulations, using thermodynamic principles.

- Beyond the “Black Box”: This approach moves away from opaque “black-box” AI models toward a transparent, physics-based understanding of the learning process, offering a scalable alternative to power-hungry hardware.

For decades, the goal of computing has been silence. Engineers have spent billions ensuring that the signals that flip bits (the 1s and 0s that underpin our digital world) are strong enough to drown out the “jiggling” of atoms, the thermal fluctuations known as noise. In modern artificial intelligence, this approach is effective but incredibly expensive in terms of electricity. However, a groundbreaking study published in Physical Review Letters suggests that the future of AI might involve not fighting the noise, but surfing it.

The Ocean Liner vs. The Surfer

Stephen Whitelam, a scientist at the Lawrence Berkeley National Laboratory, likens conventional computing to an ocean liner. It is massive, powerful, and plows through waves without regard for the water’s movement. It gets the job done, but the fuel cost is enormous. As we attempt to shrink the energy footprint of AI, we eventually reach a point where the “engine” (the electrical signal) is no stronger than the “waves” (thermal noise).

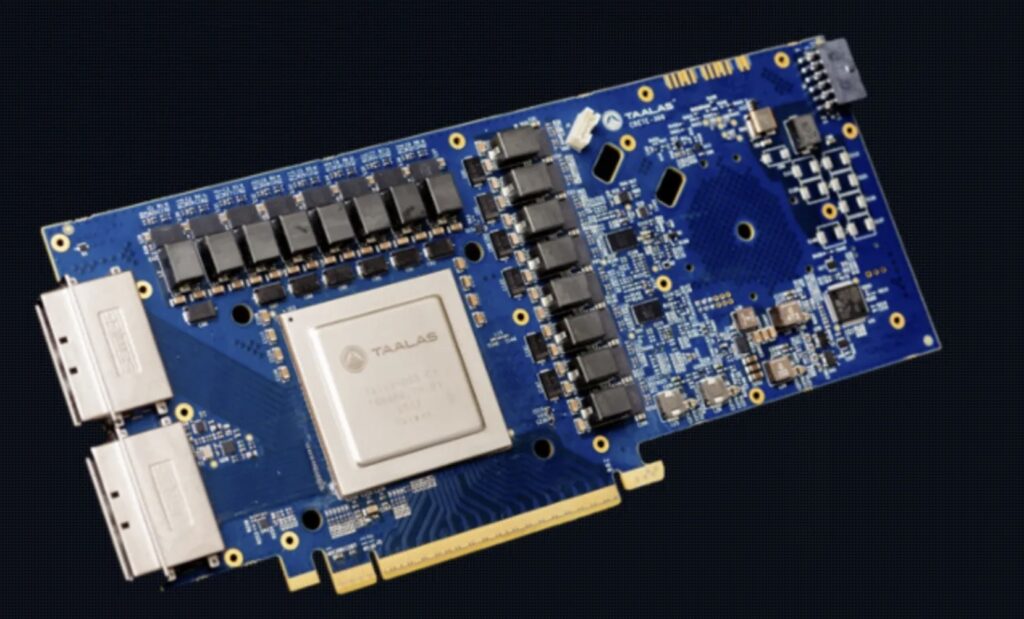

At this scale, a traditional computer is like a dinghy lost at sea. But a thermodynamic computer is more like a surfer. Instead of fighting the environment, it uses the inherent energy of thermal fluctuations—the random flips and vibrations that occur in all matter above absolute zero—to power its calculations. By working with the probability of values rather than forced certainties, these systems can complete complex “optimization” tasks with a fraction of the energy required by a standard GPU.
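To get a feel for the scale at which the “engine” and the “waves” become comparable, here is a rough sketch. It assumes room temperature (300 K) and uses the standard Boltzmann factor exp(-E / k_B T) as a crude estimate of how likely a thermal kick is to supply an energy E. The barrier heights are illustrative assumptions, not figures from the study.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)
T_ROOM = 300.0      # assumed room temperature, in kelvin

# Typical size of a thermal "wave", in joules.
thermal_energy = K_B * T_ROOM


def boltzmann_factor(barrier_in_kT):
    """Relative likelihood that thermal noise supplies `barrier_in_kT` units of k_B*T."""
    return math.exp(-barrier_in_kT)


print(f"k_B*T at {T_ROOM:.0f} K = {thermal_energy:.3e} J")
for barrier in (1, 10, 40):  # illustrative barrier heights, in units of k_B*T
    factor = boltzmann_factor(barrier)
    print(f"barrier = {barrier:>2} k_B*T -> Boltzmann factor ~ {factor:.1e}")
```

A bit protected by a barrier of tens of k_B T is the ocean liner: noise almost never flips it. A bit protected by only about one k_B T is flipped by noise routinely, which is the regime a thermodynamic computer tries to exploit rather than avoid.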

From Chaos to Clarity: Generating Images

The concept isn’t just theoretical. Researchers have already demonstrated that a thermodynamic system can mimic the generative capabilities of neural networks. By using a network of circuits where connections (coupling strengths) can be programmed, scientists can “pose a question” to the system. The random thermal fluctuations then settle into an equilibrium that provides the answer.
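The idea of noise “settling into an equilibrium that provides the answer” can be sketched with a toy simulation. The example below is an illustration under stated assumptions, not the researchers' hardware or code: it assumes a simple quadratic energy E(x) = ½ xᵀJx, where the hypothetical matrix `J` plays the role of the programmable coupling strengths, and it works in units where k_B T = 1. For that energy, the equilibrium covariance of the fluctuating variables is the inverse of `J`, so noise alone effectively “computes” a matrix inverse.

```python
import numpy as np


def sample_equilibrium(J, n_chains=20000, n_steps=1000, dt=0.01, seed=0):
    """Run many independent noisy trajectories until they reach equilibrium.

    Uses a simple Euler-Maruyama discretization of overdamped Langevin
    dynamics for the assumed energy E(x) = 0.5 * x^T J x, with k_B*T = 1.
    """
    rng = np.random.default_rng(seed)
    x = np.zeros((n_chains, J.shape[0]))
    for _ in range(n_steps):
        drift = -x @ J  # downhill force; J is symmetric in this sketch
        noise = rng.standard_normal(x.shape)
        x = x + drift * dt + np.sqrt(2.0 * dt) * noise
    return x


# Hypothetical coupling strengths: the "question" posed to the system.
J = np.array([[2.0, 0.8],
              [0.8, 1.0]])

samples = sample_equilibrium(J)
empirical_cov = np.cov(samples, rowvar=False)  # the "answer" read from the noise
expected_cov = np.linalg.inv(J)

print("covariance from fluctuations:\n", empirical_cov)
print("inverse of J:\n", expected_cov)
```

The two printed matrices should agree up to sampling and time-step error. In a physical device the loop would not be computed at all; the circuit's own thermal fluctuations would do the sampling.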

Whitelam took this a step further by looking at diffusion models—the math behind popular AI image generators. These models typically work by taking a clear image, adding noise until it’s unrecognizable, and then training an AI to reverse the process. Whitelam realized that because diffusion is a statistical process rooted in thermodynamics, it is “much more natural” to perform this in a system where the noise is already present for free.
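The “adding noise until it’s unrecognizable” half of a diffusion model is simple enough to sketch directly. The snippet below uses a common textbook noising rule with a fixed, assumed noise level `beta` per step and a toy 28×28 image; these choices are illustrative and are not taken from the study.

```python
import numpy as np


def add_noise(image, n_steps=500, beta=0.02, seed=0):
    """Repeatedly shrink the image slightly and mix in fresh Gaussian noise."""
    rng = np.random.default_rng(seed)
    x = image.copy()
    for _ in range(n_steps):
        noise = rng.standard_normal(x.shape)
        x = np.sqrt(1.0 - beta) * x + np.sqrt(beta) * noise
    return x


# Toy 28x28 "image": a bright vertical bar, loosely resembling the digit 1.
image = np.zeros((28, 28))
image[4:24, 13:15] = 1.0

noised = add_noise(image)

# Fraction of the original signal left after all steps: (1 - beta) ** (n_steps / 2).
surviving_signal = (1.0 - 0.02) ** (500 / 2)
correlation = np.corrcoef(image.ravel(), noised.ravel())[0, 1]

print(f"surviving signal fraction: {surviving_signal:.4f}")
print(f"correlation with original: {correlation:.3f}")
```

After a few hundred steps almost nothing of the original bar survives, which is why the hard part of a diffusion model is learning to run this process in reverse.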

By manipulating the Langevin equation, a description of motion under random forces that dates back to 1908, Whitelam was able to calculate the exact coupling strengths needed to lift the shroud of noise. In simulations, this “thermodynamic” approach successfully reconstructed images of the digits “0,” “1,” and “2” from total randomness.
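For reference, a common textbook form of the overdamped Langevin equation is written below. This is a generic statement, and the symbols (variables $x_i$, energy $U$, mobility $\mu$, temperature $T$) are assumptions chosen for illustration; the study's exact formulation may differ.

$$
\frac{dx_i}{dt} = -\mu \, \frac{\partial U(\mathbf{x})}{\partial x_i} + \sqrt{2 \mu k_B T}\; \eta_i(t),
\qquad
\langle \eta_i(t)\, \eta_j(t') \rangle = \delta_{ij}\, \delta(t - t')
$$

The first term pulls each variable downhill in energy, and the second is the thermal noise. In a programmable device, the coupling strengths would enter through $U$, so choosing them shapes where the noisy dynamics tend to end up.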

A Transparent Future for AI

The implications of this research go beyond just saving on the electricity bill. Ramy Shelbaya, CEO of Quantum Dice, notes that this “physics-inspired” approach helps demystify AI. While modern generative AI often operates as a “black box”—where even the creators aren’t entirely sure how the machine reached a specific conclusion—thermodynamic computing provides a clear, fundamental interpretation of the learning process.

While generating three simple digits is a far cry from the high-definition art produced by current AI, the field is in its infancy. If the history of machine learning is any indication, these “rudimentary” steps could be the foundation for a massive shift in how we build the brains of the future. By embracing the natural chaos of the universe, we may finally create AI that is as efficient as the biological brains it was meant to mimic.