A Leap Forward in Digital Human Modeling through Advanced Physics and Rendering Techniques

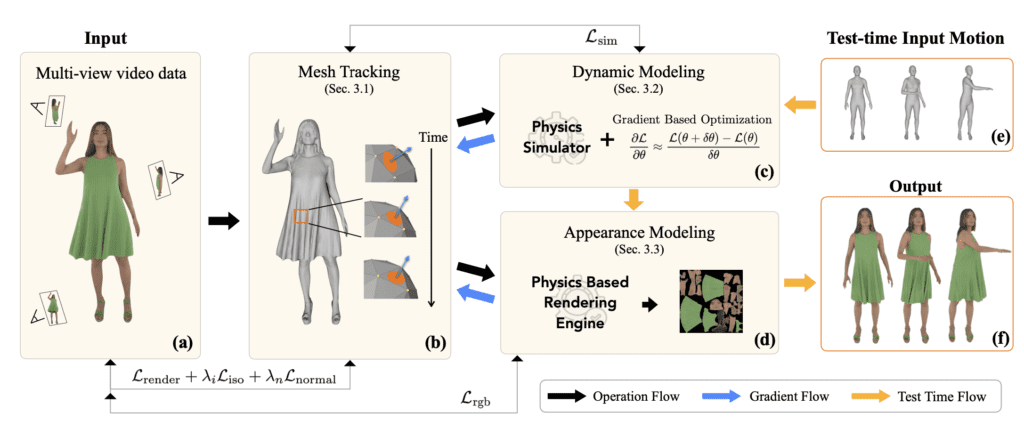

- Introduction of PhysAvatar: A framework that combines inverse rendering with inverse physics to reconstruct and simulate clothed human avatars from visual data.

- Advanced Mesh Tracking and Material Estimation: A mesh-aligned 4D Gaussian technique tracks geometry precisely, and a physically based inverse renderer estimates material properties, which together enable lifelike fabric simulation.

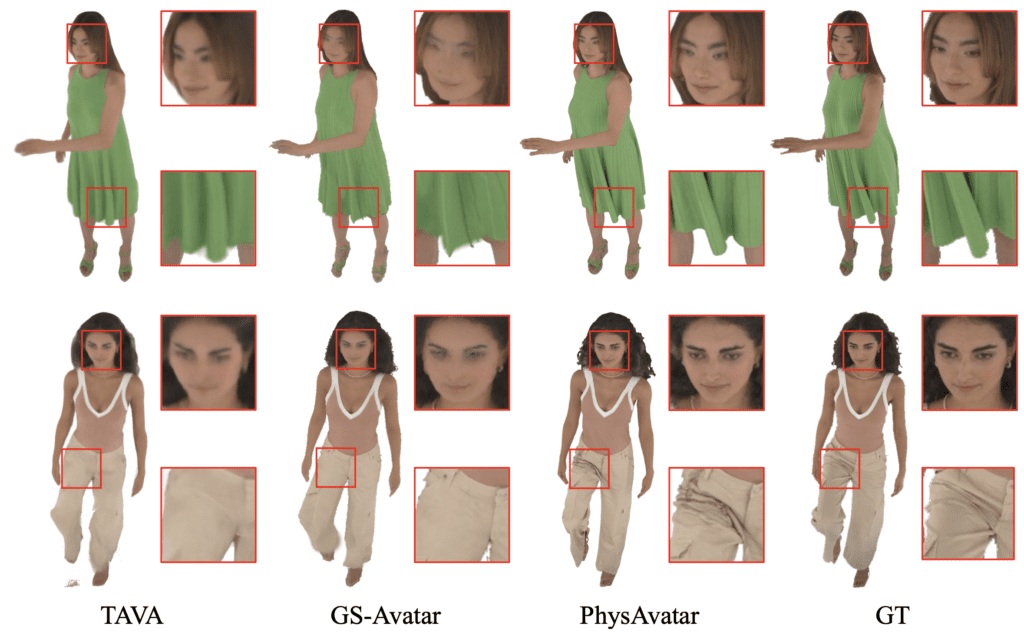

- Realistic Novel-View Renderings: High-quality renderings of avatars in motion, wearing loose-fitting clothes under various lighting conditions.

Rendering clothing accurately on animated figures has long been one of the harder problems in creating realistic digital avatars. PhysAvatar is a framework that addresses it by combining inverse rendering with inverse physics to reconstruct photorealistic clothed humans from multi-view video data.

At the core of PhysAvatar is its mesh tracking method, which uses a mesh-aligned 4D Gaussian technique. This approach captures the precise geometric correspondences needed to estimate the physical parameters of clothing accurately. Unlike traditional methods that often struggle with the dynamic nature of fabric, PhysAvatar’s technique keeps the mesh topology intact, which avoids unrealistic distortions and preserves the natural flow and drape of garments.
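
To make the idea of "mesh-aligned" Gaussians more concrete, here is a minimal sketch. It assumes one Gaussian per triangle, centered at the face's barycenter and oriented by the face's tangent frame. That layout, and the helper name `face_gaussian`, are illustrative assumptions rather than details confirmed by this article.

```python
import math

def _sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def _normalize(a):
    length = math.sqrt(sum(c * c for c in a))
    return tuple(c / length for c in a)

def face_gaussian(v0, v1, v2):
    """Hypothetical helper: place a Gaussian on a (non-degenerate) triangle.

    Returns the Gaussian center (the face barycenter) and an orthonormal
    frame (tangent, bitangent, normal) that could orient its covariance.
    """
    center = tuple((a + b + c) / 3.0 for a, b, c in zip(v0, v1, v2))
    edge = _sub(v1, v0)
    normal = _normalize(_cross(edge, _sub(v2, v0)))
    tangent = _normalize(edge)
    bitangent = _cross(normal, tangent)
    return center, (tangent, bitangent, normal)

# The same face in two frames: the Gaussian follows the mesh as it moves.
frame_a = face_gaussian((0, 0, 0), (1, 0, 0), (0, 1, 0))
frame_b = face_gaussian((0, 0, 2), (1, 0, 2), (0, 1, 2))
```

Because each Gaussian is tied to a specific face, the same Gaussian refers to the same patch of surface in every frame, which is one way to obtain the frame-to-frame correspondences the tracking step relies on.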

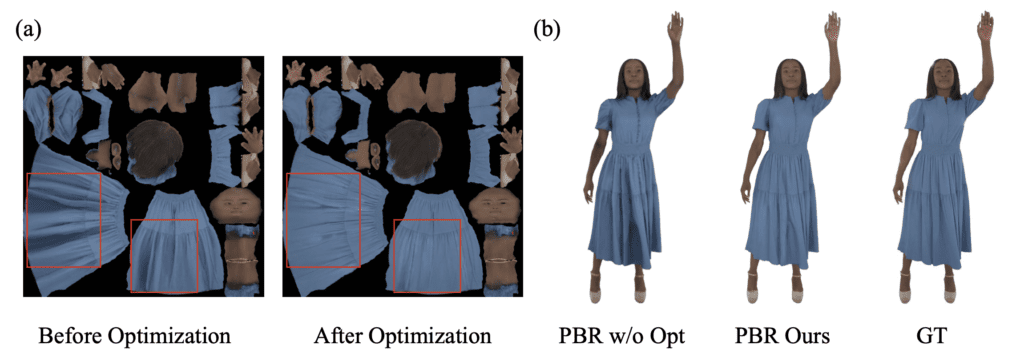

PhysAvatar also uses inverse rendering to estimate the intrinsic material properties of fabrics. This step is crucial for simulating how different materials interact with light, a key aspect of lifelike renderings. A physics simulator complements it: the framework recovers the physical parameters of garments through gradient-based optimization, so that the avatars’ clothing moves in accordance with real-world physics.
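
The inverse-physics idea can be illustrated with a toy problem. The sketch below is not PhysAvatar's cloth simulator. It assumes a single unit-mass spring whose stiffness is unknown, estimates the gradient of a trajectory-matching loss by central finite differences, and takes gradient descent steps with simple backtracking. All names (`simulate`, `loss`, `fit_stiffness`) and constants are hypothetical.

```python
def simulate(stiffness, steps=100, dt=0.01):
    """Semi-implicit Euler for a unit-mass spring released from x = 1."""
    x, v = 1.0, 0.0
    xs = []
    for _ in range(steps):
        v += dt * (-stiffness * x)  # acceleration from Hooke's law
        x += dt * v
        xs.append(x)
    return xs

def loss(stiffness, observed):
    """Mean squared error between simulated and observed positions."""
    predicted = simulate(stiffness, steps=len(observed))
    return sum((p - o) ** 2 for p, o in zip(predicted, observed)) / len(observed)

def fit_stiffness(observed, k_init, iters=200, lr=100.0, eps=1e-4):
    """Gradient descent on the stiffness with a finite-difference gradient."""
    k = k_init
    for _ in range(iters):
        grad = (loss(k + eps, observed) - loss(k - eps, observed)) / (2 * eps)
        current = loss(k, observed)
        step = lr
        # Backtracking: shrink the step until the loss actually decreases.
        while step > 1e-8:
            candidate = k - step * grad
            if candidate > 0 and loss(candidate, observed) < current:
                k = candidate
                break
            step *= 0.5
    return k

# "Observed" motion generated with a known stiffness, then recovered from it.
observed = simulate(20.0)
k_est = fit_stiffness(observed, k_init=10.0)
```

In the real setting the observations would be the tracked garment meshes and the parameters would describe the fabric, but the loop has the same shape: simulate, compare with what was observed, and adjust the parameters along the gradient.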

One of the most compelling features of PhysAvatar is its ability to generate novel-view renderings of avatars. These renderings can depict avatars dressed in loose-fitting clothes, performing a wide range of motions under lighting conditions that were not present in the training data. This capability is a significant step towards digital humans that both look photorealistic and interact with their environment in a physically plausible manner.

An ablation study of PhysAvatar underscores the importance of specific loss terms during mesh tracking, particularly the ISO loss, which is instrumental in preserving local mesh topology. By maintaining edge lengths and preventing distortions, this term keeps the avatar’s clothing consistent and realistic even during complex movements.
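
As a rough illustration of what an edge-length-preserving term looks like, the sketch below penalizes the squared change in each edge's length relative to a reference mesh. The exact form of PhysAvatar's ISO loss is not given here, so treat this as a generic stand-in; the function names are hypothetical.

```python
import math

def edge_lengths(vertices, edges):
    """Length of each edge (i, j) for the given vertex positions."""
    return [math.dist(vertices[i], vertices[j]) for i, j in edges]

def iso_loss(reference_vertices, tracked_vertices, edges):
    """Mean squared change in edge length between reference and tracked mesh."""
    ref = edge_lengths(reference_vertices, edges)
    cur = edge_lengths(tracked_vertices, edges)
    return sum((c - r) ** 2 for c, r in zip(cur, ref)) / len(edges)

# A single triangle as the reference mesh.
reference = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
edges = [(0, 1), (1, 2), (2, 0)]

# A rigid translation keeps every edge length, so it is not penalized.
translated = [(x + 0.5, y - 2.0, z + 3.0) for x, y, z in reference]

# Dragging one vertex away stretches two edges and is penalized.
stretched = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (0.0, 1.0, 0.0)]

rigid_penalty = iso_loss(reference, translated, edges)
stretch_penalty = iso_loss(reference, stretched, edges)
```

A term like this leaves rigid motion free while discouraging the stretching and collapsing of triangles, which matches the role the ablation attributes to the ISO loss.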

PhysAvatar marks a notable advance in digital human modeling. By capturing the interplay between light, motion, and fabric, it points towards digital avatars that blend convincingly into real-world environments, with applications in entertainment, virtual reality, and beyond.