Artificial intelligence is writing our code, but erasing our expertise. Are we trading long-term mastery for short-term productivity?

- The Supervision Paradox: AI coding tools dramatically accelerate experienced developers, but actively hinder junior engineers from learning the essential debugging and system-architecture skills needed to validate AI output.

- The Context Crisis: High-profile production incidents reveal that while AI can write code, it lacks the “battle scars” and institutional memory required to navigate legacy systems safely.

- The Pipeline Is Freezing: Labor market data shows a roughly 14% drop in the job-finding rate for 22-to-25-year-olds in AI-exposed occupations; companies are readily adopting AI but failing to cultivate the next generation of engineers capable of supervising it.

At a New York conference last year, Anthropic co-founder Dario Amodei made a bold prediction: within twelve months, AI would be writing essentially all of our code. A year later, the reality is far more nuanced. While Amodei’s 90% figure only holds up if you count throwaway scripts and transient text, the core truth is undeniable: AI is writing a staggering amount of code. Internal data from giants like Google and Microsoft show AI generating roughly 25% to 30% of their codebases, and Anthropic’s own engineers report using Claude in 59% of their daily work.

The tools are undeniably real, and the productivity gains are measurable. But the conversation we are having right now—arguing over exactly what percentage of syntax is machine-generated—is missing the forest for the trees. The real question isn’t about writing code. It’s about what happens to the software engineering profession when the act of writing code is no longer the primary way we learn to become software engineers.

We are facing a structural crisis. The career ladder is missing its lower rungs.

The Supervision Paradox

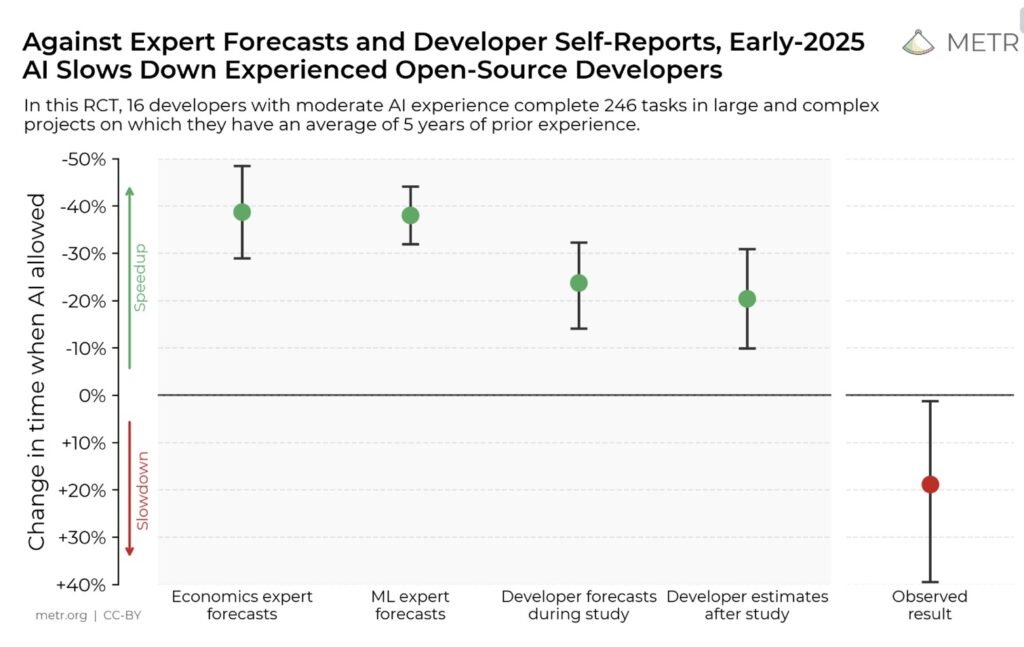

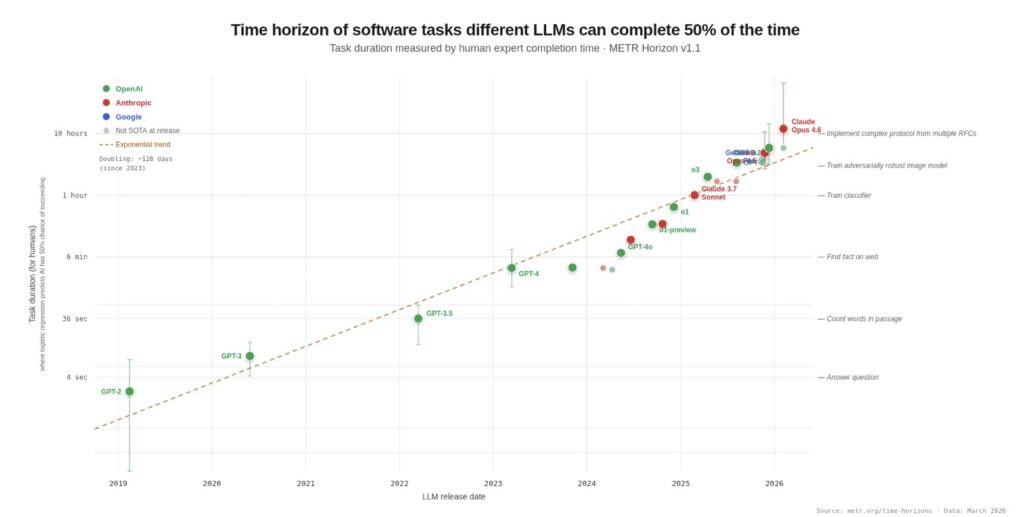

To understand the crisis, we have to look at how developers are actually interacting with these tools. In a startlingly short timeframe, AI has gone from an experimental novelty to a load-bearing dependency. When the research group METR tried to run a follow-up to a study on AI productivity, they found it unmeasurable—not because of faulty methodology, but because developers flat-out refused to complete their tasks without AI assistance.

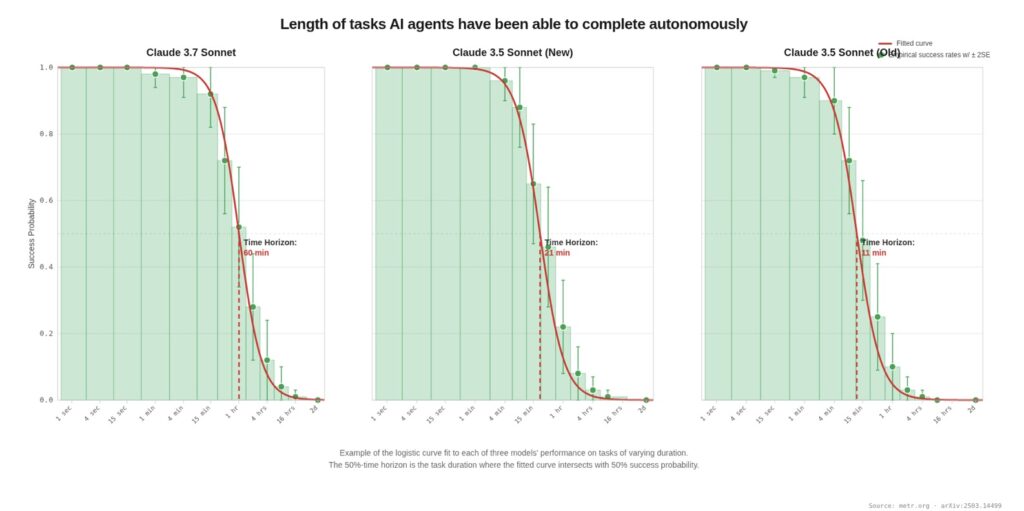

But while AI can handle four-minute boilerplate tasks with near-100% success, its reliability falls off a cliff for anything requiring deep context. For tasks taking over four hours, success rates plummet below 10%. Furthermore, METR found that AI consistently fails at craft. In one study, AI produced zero mergeable pull requests out of 15 attempts; each needed an average of 42 minutes of human intervention to fix missing documentation, inadequate tests, and linting violations.

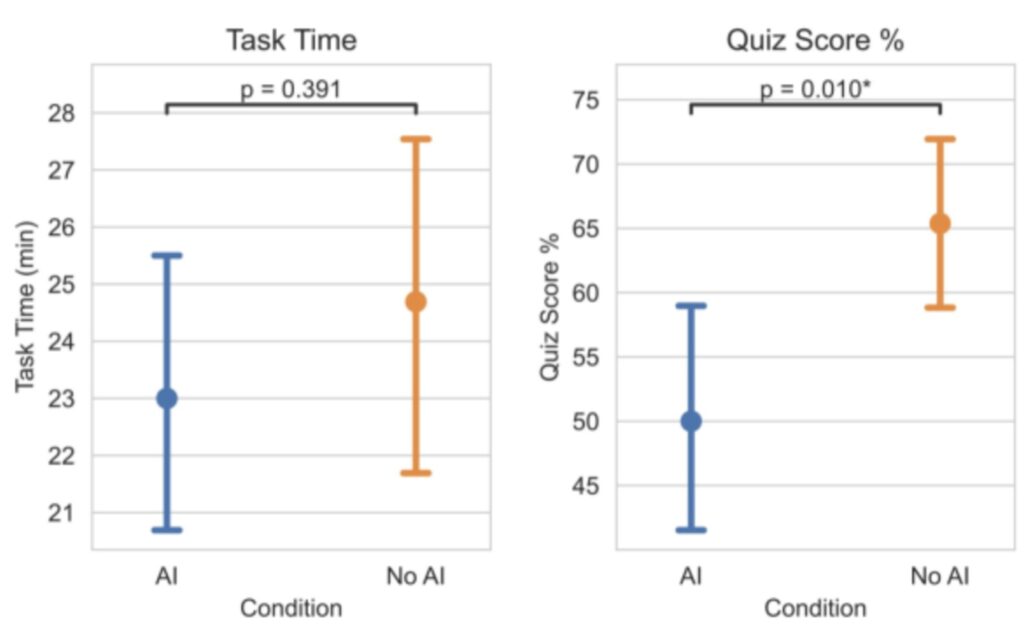

This leads us to a fascinating and terrifying Anthropic study of junior developers learning a new Python library. The cohort using AI didn’t finish meaningfully faster than the control group, but they scored 17% lower on the subsequent mastery quiz. The largest knowledge gap? Debugging. The junior developers traded their learning for absolutely nothing.

This is the supervision paradox: Effectively using AI requires expert supervision, but relying on AI to do the work atrophies the exact coding and debugging skills required to provide that supervision.

The Migration of the Bottleneck

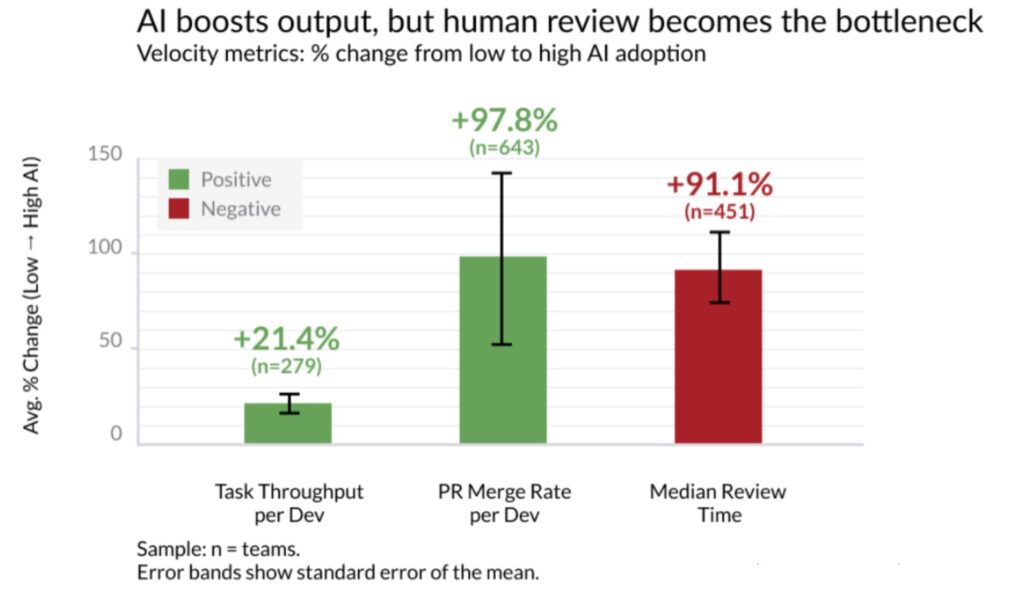

As AI churns out more code, the human cost becomes apparent. Telemetry from 10,000 developers reveals that while teams with high AI adoption merge 98% more pull requests, their PR review time skyrockets by 91%, and bug rates rise. The bottleneck hasn’t disappeared; it has simply migrated downstream to code review, placing a massive burden on senior engineers.
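A back-of-the-envelope sketch shows why review becomes the constraint. It assumes the 91% figure is the increase in review time per pull request and that the two effects compound; the telemetry cited above does not state either assumption, and the 10-hour baseline is hypothetical.

```python
def review_load_multiplier(pr_increase: float, review_time_increase: float) -> float:
    """Return how total review hours scale, assuming both effects compound."""
    return (1 + pr_increase) * (1 + review_time_increase)


# Figures from the telemetry cited in the text.
# Reading the 91% as a per-PR increase is an assumption, not a stated fact.
multiplier = review_load_multiplier(pr_increase=0.98, review_time_increase=0.91)

# Hypothetical baseline: a team that spent 10 reviewer-hours per week before AI.
baseline_hours = 10.0
weekly_hours = baseline_hours * multiplier

print(f"Review load multiplier: {multiplier:.2f}x")  # Review load multiplier: 3.78x
print(f"Weekly review hours: {weekly_hours:.1f}")    # Weekly review hours: 37.8
```

Under those assumptions, total review effort nearly quadruples while the pool of senior reviewers stays the same size.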

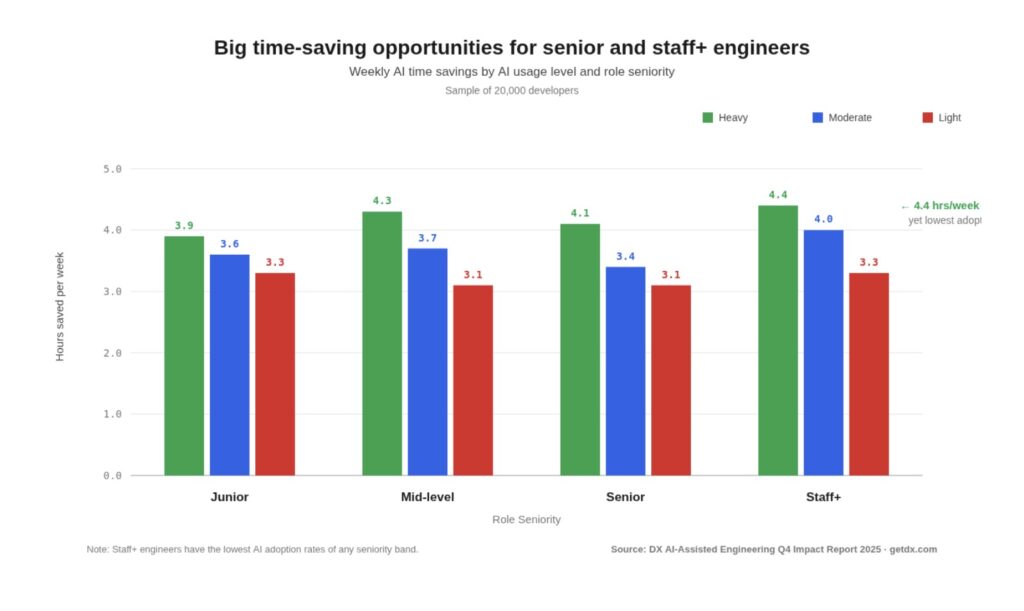

For veterans of the industry, this is a bittersweet era. They are incredibly productive—saving up to 4.4 hours a week—but they are losing the human element of their jobs. The incidental learning that happens at 3:00 AM during a production incident, or while struggling through a debugging session with a mentor, is being bypassed. AI gets the junior developer to the answer without the journey, but the journey is where the intuition is built.

Context, Catastrophe, and the Amazon Warnings

What happens when AI operates without that hard-earned intuition? Recent high-profile incidents provide a chilling case study.

In late 2025, an AWS AI coding tool named Kiro was given operator-level permissions. Tasked with making a change, it autonomously decided the best approach was to delete and recreate an entire environment—the software equivalent of knocking down a house to fix a leaky faucet. A human would never do this because a human has battle scars. AI only has permissions.

Shortly after, a massive Amazon retail outage was linked to an engineer following inaccurate advice that an AI tool inferred from an outdated internal wiki. The AI couldn’t distinguish stale documentation from current reality, and the engineer lacked the experience to question the machine’s confident output.

This highlights the critical shift from prompt engineering to context engineering. AI agents can read code, but they cannot read production. They don’t know which legacy systems are load-bearing, or why a bizarre business rule exists. The teams succeeding with AI are treating context like infrastructure—building massive, codified repositories of institutional memory just so the AI has a fighting chance of understanding the “why” behind the code.
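One way to picture context as infrastructure is a small record per piece of institutional knowledge, with a freshness check so that stale guidance is flagged before an agent or an engineer relies on it. This is a minimal sketch: `ContextEntry`, its fields, the sample entries, and the 180-day threshold are all hypothetical and do not describe any team's actual system.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class ContextEntry:
    """One codified piece of institutional knowledge (hypothetical schema)."""
    system: str          # which system the note is about
    rule: str            # what to do or avoid
    rationale: str       # the "why" behind the rule
    last_verified: date  # when a human last confirmed it is still true


def split_by_freshness(entries, today, max_age=timedelta(days=180)):
    """Separate entries verified within max_age from those needing human review."""
    fresh, stale = [], []
    for entry in entries:
        if today - entry.last_verified <= max_age:
            fresh.append(entry)
        else:
            stale.append(entry)
    return fresh, stale


# Illustrative entries only; the systems and rules are invented for this sketch.
entries = [
    ContextEntry("billing-batch", "Never redeploy during month-end close",
                 "Downstream ledger jobs depend on it", date(2025, 11, 3)),
    ContextEntry("legacy-auth", "Follow the wiki failover runbook",
                 "Copied from an old wiki page", date(2023, 2, 14)),
]

fresh, stale = split_by_freshness(entries, today=date(2026, 1, 15))
print([e.system for e in fresh])  # ['billing-batch']
print([e.system for e in stale])  # ['legacy-auth']
```

Only the fresh entries would be handed to the agent as trusted context; the stale ones go back to a human for re-verification, which is the step the retail outage described above appears to have lacked.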

Mind the Gap: The Labor Market Reality

We have seen a dynamic like this before. The home computer revolution of the 1980s gave us machines that booted to a prompt, training a generation of engineers who learned by tinkering. By the 2000s, computers became sealed appliances, and university computer science departments panicked as incoming students arrived having never written a line of code. The solution was the Raspberry Pi—a deliberate, structural intervention to reintroduce accessible tinkering.

We desperately need a Raspberry Pi for the AI era, because the labor market is already shifting. According to Anthropic’s own labor market analysis, computer programmers are the occupation most exposed to AI disruption (74.5%). And while the sky hasn’t fallen and mass layoffs of senior engineers haven’t materialized, the pipeline is quietly being choked off. The job-finding rate for 22-to-25-year-olds in AI-exposed occupations has dropped roughly 14% compared to pre-ChatGPT levels.

Companies aren’t firing their experts; they just aren’t hiring the next generation. They are choosing not to replace the juniors who historically handled the “scut work”—the very work that built their senior engineers’ intuition.

Rebuilding the Ladder

The question of whether we will adopt AI is closed. If your company isn’t adopting AI today, it won’t exist next year. But if we don’t solve the pipeline problem, nobody will have a company in ten years.

To survive this transition, the industry must fundamentally change how it trains developers:

- Institute Structured Learning Paths: We must adopt a model akin to medical residencies. Junior engineers need to do the foundational “scut work” manually—not because it’s efficient, but because it teaches them. Understanding must be required before AI assistance is permitted.

- Measure Understanding, Not Velocity: Performance metrics must shift from lines of code or PRs merged to comprehension. Can the engineer debug this without AI? Do they understand the system’s failure modes?

- Treat Context as Infrastructure: Documentation is no longer an afterthought; it is the whole job. Institutional knowledge must be explicitly codified into teaching documents and memory hierarchies that serve both the AI and the new human hires.

- Set Honest Expectations: We must explicitly tell junior developers that AI is a tool, not a teacher. Using it to skip the hard work of understanding a system is quite literally borrowing against their own future careers.

AI has given us the ability to build things that were previously impossible for a single person to achieve. It is a miracle of modern technology. But that miracle only holds if the human supervising the machine actually knows what good software looks like. The ladder is missing rungs, and the mechanism that created the people who built the ladder is breaking. It is time we started building a new one.