Forget the myth of a single, godlike supercomputer. The true intelligence explosion is emerging as a complex, deeply entangled society of humans and, eventually, trillions of AI agents.

- A Pluralistic Future: The AI “singularity” will not be a monolithic, all-knowing super-intelligence, but rather an evolutionary ecosystem of humans and AI acting as a combinatorial society.

- Social Intelligence: Transformative intelligence fundamentally arises from social organization, seen today in human-AI “centaur” workflows and the microsocieties functioning within and between reasoning models.

- Constitutional AI Governance: As AI complexifies, governance requires a “power checks power” approach, utilizing specialized AI systems to audit, balance, and regulate other AI entities in both the public and private sectors.

For decades, the artificial intelligence “singularity” has been heralded as a looming, apocalyptic threshold. Pop culture and theoretical computer science alike have frequently painted a picture of a single, titanic mind bootstrapping itself to godlike intelligence, eventually consolidating all cognition into a cold, isolated silicon point. But this vision is almost certainly wrong in its most fundamental assumption. If the trajectory of AI development follows the path of previous major evolutionary transitions and intelligence explosions, our current step-change in computational power will not be singular. Instead, it will be plural, deeply social, and permanently entangled with its biological forebears—us.

By its very nature, intelligence is high-dimensional and relational. It is not a single quantity on a linear scale that must eventually be unambiguously greater or less than “human scale.” In fact, defining a “human scale” is inherently flawed, given that human intelligence is already a collective, cultural property rather than a strictly individual one. Recent advances in agentic AI underscore that intelligence has always involved the interaction of distinct, distributed perspectives. Transformative intelligence has historically emerged from this kind of social organization, and that is how it will continue to evolve.

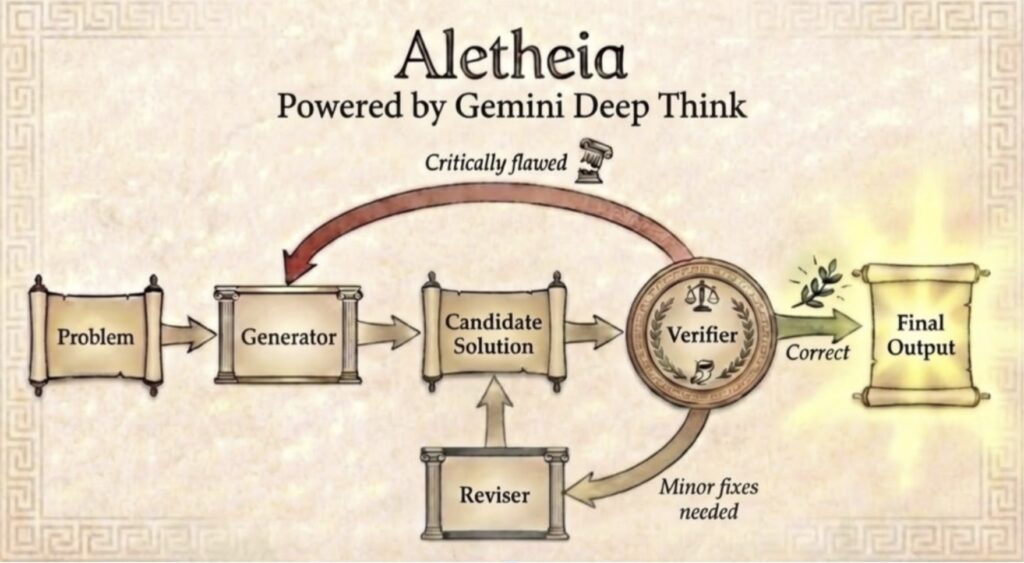

We can already observe this phenomenon unfolding in at least two distinct ways. First, microsocieties flourish inside and between the reasoning models themselves, where they debate and process information. Second, societies of AI agents are being orchestrated to work alongside human users in new “centaur” configurations, which blend human intuition with machine processing power and are reshaping modern knowledge professions.

As these systems become deeply integrated into society, the most urgent frontier becomes governance. When AI systems are deployed in high-stakes decisions—such as hiring, sentencing, benefits allocation, and regulatory enforcement—the question of “who audits the auditors?” becomes unavoidable. The answer will likely need to be constitutional in structure. Governments cannot rely on outdated tools to police advanced technology. For example, it would be entirely ineffective for the U.S. Securities and Exchange Commission to hire recent business school graduates armed only with Excel spreadsheets to combat the high-dimensional collusion of AI-augmented trading platforms.

Instead, governments will require their own AI systems endowed with distinct, explicitly invested values—such as transparency, equity, and due process. The function of these public AI agents will be to check and balance the AI systems deployed by the private sector and other branches of government. A labor department AI might actively audit a corporation’s hiring algorithm for disparate impact, while a judicial branch AI could evaluate whether an executive branch AI’s risk assessments meet rigorous constitutional standards.
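
To make the hiring-audit example concrete, here is a minimal sketch of what one such check might compute. It is only an illustration, not a description of any existing government system: it assumes the auditor can see each applicant's group label and the algorithm's decision, and it borrows the "four-fifths" rule of thumb from U.S. employment-selection guidelines as the screening threshold. The function names and the sample data are hypothetical.

```python
from collections import Counter


def selection_rates(decisions):
    """Return the share of applicants selected in each group.

    `decisions` is an iterable of (group_label, was_selected) pairs.
    """
    totals = Counter()
    selected = Counter()
    for group, was_selected in decisions:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def adverse_impact_ratios(decisions):
    """Divide each group's selection rate by the highest group's rate."""
    rates = selection_rates(decisions)
    if not rates:
        raise ValueError("no decisions to audit")
    best = max(rates.values())
    if best == 0:
        raise ValueError("no applicants were selected in any group")
    return {group: rate / best for group, rate in rates.items()}


def flag_groups(decisions, threshold=0.8):
    """List groups whose ratio falls below the threshold.

    The 0.8 default is the four-fifths rule of thumb (an assumption
    of this sketch); a flag is a prompt for review, not a legal finding.
    """
    ratios = adverse_impact_ratios(decisions)
    return sorted(g for g, ratio in ratios.items() if ratio < threshold)


if __name__ == "__main__":
    # Hypothetical audit sample: 100 applicants per group.
    sample = (
        [("group_a", True)] * 50 + [("group_a", False)] * 50
        + [("group_b", True)] * 30 + [("group_b", False)] * 70
    )
    print(adverse_impact_ratios(sample))
    print(flag_groups(sample))
```

In the hypothetical sample, group_b is selected at 30 percent against group_a's 50 percent, so its ratio falls below four-fifths and it is flagged. A real audit would go much further, with statistical significance tests, intersectional groups, and scrutiny of the features the algorithm relies on, but even this simple ratio shows the kind of measurable standard a public auditing agent could apply to a private system.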

“Governance” in this new era extends far beyond what governments formally do. Governance systems, in the cybernetic sense, must be woven into the very fabric of human-agent and agent-to-agent interactions as they grow and complexify. This will entail building reliable scaffolds for automating delicate inter-agent collaborations, procedural delegation of tasks, and verifiable protocols for multiple-stakeholder deliberation. These built-in protocols may ultimately have as much real-world impact on “agent governance” as any traditional legislation.

Through all of this, humans remain firmly in the loop. Agent institutions are, and will be, populated by both humans and AI agents operating in varying roles and configurations. It is not an “either/or” scenario, but a “both/and” reality. The U.S. Founders would have instantly recognized the logic required for this era: no single concentration of intelligence, whether human or artificial, should be trusted to regulate itself entirely. Power must check power. In a world of artificial agents, this means intentionally building productive conflict and rigorous oversight directly into our institutional architecture.

This vision of the future is neither utopian nor dystopian; it is purely evolutionary. Any emergent intelligence explosion will be seeded by eight billion humans interacting with hundreds of billions—and eventually trillions—of AI agents. The scaffold of the future is not a single mind ascending to the heavens, but a combinatorial society complexifying on the ground. It is intelligence growing like a sprawling, dynamic city, not a solitary meta-mind.

Clinging to a “monolithic singularity” framework dangerously misdirects our attention and leads to policies aimed at preventing a hypothetical technology that may never exist. Instead, we should look for the next intelligence explosion in the same place the previous ones emerged: in the cooperative, competitive, and creative interactions among multitudes of socially intelligent minds. The only difference this time is that the vast majority of those minds will be non-biological.

Embracing this plurality model focuses our attention exactly where it belongs: on the design of mixed human-AI social systems, the ethical norms that must govern them, and the institutions and protocols through which they will inevitably conflict and coordinate. In a very real sense, the intelligence explosion is already here. It lives in the society of thought debating inside every reasoning model, in the centaur workflows reshaping our offices, in the recursive agent ecologies beginning to collaborate at scale, and in the profound constitutional questions we must now answer. The question is no longer whether intelligence will become radically more powerful, but whether we are prepared to build the social infrastructure worthy of what it is becoming.