Moving beyond static puzzles to measure true, human-like reasoning and continuous learning in Artificial Intelligence.

- A New Standard for Intelligence: ARC-AGI-3 is an interactive benchmark that tests AI agents on their ability to explore novel environments, adapt in real time, and learn from experience without relying on natural-language prompts.

- Focusing on the Learning Process: The benchmark measures how efficiently an AI agent acquires skills, plans for the long term, and updates its world model from sparse feedback. Its premise is that testing intelligence means evaluating adaptation over time, not just final answers.

- Equipping Developers for the Future: With a comprehensive developer toolkit, transparent user interface, and detailed replay capabilities, ARC-AGI-3 provides the exact tools needed to evaluate, iterate, and close the gap between artificial and human intelligence.

The pursuit of Artificial General Intelligence (AGI) has long been defined by a fundamental truth: as long as there is a gap between how an AI learns and how a human learns, we do not truly possess AGI. For years, the industry has relied on static tests and rigid datasets to evaluate models. However, true intelligence is not about retrieving memorized facts; it is about entering an unknown situation, understanding the rules on the fly, and adapting to overcome obstacles. This is the exact gap that ARC-AGI-3 is designed to measure. As the first interactive reasoning benchmark created to quantify human-like intelligence in AI agents, it shifts the focus from what an AI knows to how an AI learns.

At its core, ARC-AGI-3 challenges agents to explore completely novel environments and acquire goals dynamically. Instead of solving static puzzles with the help of extensive natural-language instructions, agents must learn purely from experience inside each environment. They are required to perceive what truly matters in their surroundings, select deliberate actions, and build adaptable world models. The success criterion is simple to state: a 100% score means that the AI agent can beat every single game presented to it just as efficiently as a human would.
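To make the "as efficiently as a human" criterion concrete, here is a minimal sketch of one way such a score could be computed. The article does not give the actual ARC-AGI-3 scoring formula, so everything here is an assumption for illustration: the function names, the use of action counts as the efficiency measure, and the cap at 1.0 per game are all hypothetical.

```python
from typing import Optional

def game_score(agent_actions: Optional[int], human_actions: int) -> float:
    """Score one game in [0, 1]. None means the agent never beat the game.

    Assumption: efficiency is the ratio of human actions to agent actions,
    capped at 1.0 so that matching the human baseline is a perfect score.
    """
    if agent_actions is None:
        return 0.0
    if agent_actions <= 0 or human_actions <= 0:
        raise ValueError("action counts must be positive")
    return min(1.0, human_actions / agent_actions)

def benchmark_score(results: dict[str, tuple[Optional[int], int]]) -> float:
    """Average the per-game scores and return a percentage."""
    if not results:
        raise ValueError("no games to score")
    scores = [game_score(agent, human) for agent, human in results.values()]
    return 100.0 * sum(scores) / len(scores)

# Hypothetical results: game name -> (agent actions, human baseline actions)
results = {
    "game_a": (40, 40),    # matches the human baseline
    "game_b": (80, 40),    # solved, but with twice as many actions
    "game_c": (None, 25),  # never solved
}
print(benchmark_score(results))  # 50.0
```

Under this reading, an agent reaches 100% only when it solves every game using no more actions than the human baseline, which matches the criterion described above.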

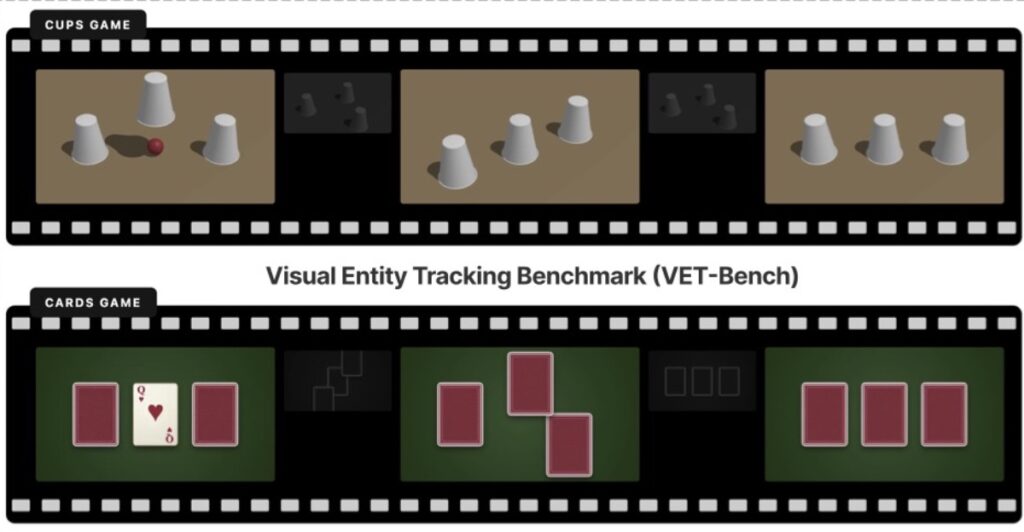

To measure this intelligence accurately, ARC-AGI-3 is built on environments that are 100% human-solvable and quick for people to pick up. The benchmark tests skill-acquisition efficiency over time, experience-driven adaptation across multiple steps, and long-horizon planning in the face of sparse feedback. Because the environments are strictly novel, which prevents brute-force memorization, agents cannot rely on pre-loaded knowledge or hidden prompts. They are given only clear goals and meaningful feedback. By capturing how an agent compresses memory, chooses its planning horizon, and updates its beliefs as new evidence appears, ARC-AGI-3 makes the gap between human and machine intelligence measurable.
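The idea of learning from experience under sparse feedback can be illustrated with a toy example. This is a sketch only and is not an ARC-AGI-3 environment or its API: the `Corridor` class, the `run_agent` function, and the action names are hypothetical. The agent is told the goal (reach the right end) but not what each action does, so it tries each action once, records the observed effect in a small world model, and then exploits what it learned.

```python
class Corridor:
    """Hypothetical toy environment; the agent is not told what each action does."""

    def __init__(self, length=6, start=2):
        self.length = length
        self.pos = start
        self._effects = {"A": -1, "B": +1}  # hidden from the agent

    def step(self, action):
        moved = self.pos + self._effects[action]
        self.pos = max(0, min(self.length - 1, moved))
        done = self.pos == self.length - 1  # sparse feedback: only the goal signals success
        return self.pos, done


def run_agent(env, actions=("A", "B"), max_steps=50):
    model = {}     # learned world model: action -> last observed position change
    pos = env.pos  # initial observation
    log = []       # every action taken, in order
    for _ in range(max_steps):
        untried = [a for a in actions if a not in model]
        if untried:
            action = untried[0]                 # explore an action with unknown effect
        else:
            action = max(model, key=model.get)  # exploit: largest move toward the goal
        new_pos, done = env.step(action)
        model[action] = new_pos - pos           # update beliefs from the new evidence
        log.append(action)
        pos = new_pos
        if done:
            break
    return log, model


log, model = run_agent(Corridor())
print(log)    # ['A', 'B', 'B', 'B', 'B']
print(model)  # {'A': -1, 'B': 1}
```

The two exploratory steps are the cost of learning the rules; an efficiency-based benchmark rewards agents that keep that cost close to what a human would need.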

Beyond the concept, ARC-AGI-3 is a practical platform designed for developers. It includes a developer toolkit for agent integration and documentation covering everything from environment details to API usage. Once an agent is integrated, developers can use an interactive UI built for transparent evaluation. This interface lets them inspect agent behavior through preview replays and replayable runs, making it possible to track every decision, action, and piece of reasoning across a structured timeline. Ultimately, ARC-AGI-3 does not just test AI; it provides the roadmap and the workbench needed to build agents that can think, learn, and adapt the way we do.