Chief Scientist Bill Dally reveals how custom AI models and reinforcement learning are outperforming human intuition and redefining the future of semiconductor engineering.

- Massive Time Savings: NVIDIA’s AI tools have reduced the time needed to port standard cell libraries from 80 person-months to a single overnight run on one GPU.

- Beyond Human Intuition: Reinforcement learning systems are generating novel circuit designs that improve key performance metrics by 20% to 30%, producing structures human engineers would be unlikely to conceive.

- Empowering the Workforce: Rather than replacing junior staff, custom internal LLMs are being used to efficiently upskill newer engineers and streamline complex bug-routing workflows.

The semiconductor industry has always raced against the clock, with Moore’s Law setting a demanding cadence of innovation. Yet the physical design and architectural mapping of chips remain highly labor-intensive. That is now changing. During a recent conversation at the GTC conference with Google Chief Scientist Jeff Dean, NVIDIA Chief Scientist Bill Dally pulled back the curtain on how AI is reshaping the way the company builds its GPUs. By applying AI across design exploration, standard cell library work, bug handling, and verification, NVIDIA is not just saving engineering time; it is also expanding what is architecturally possible.

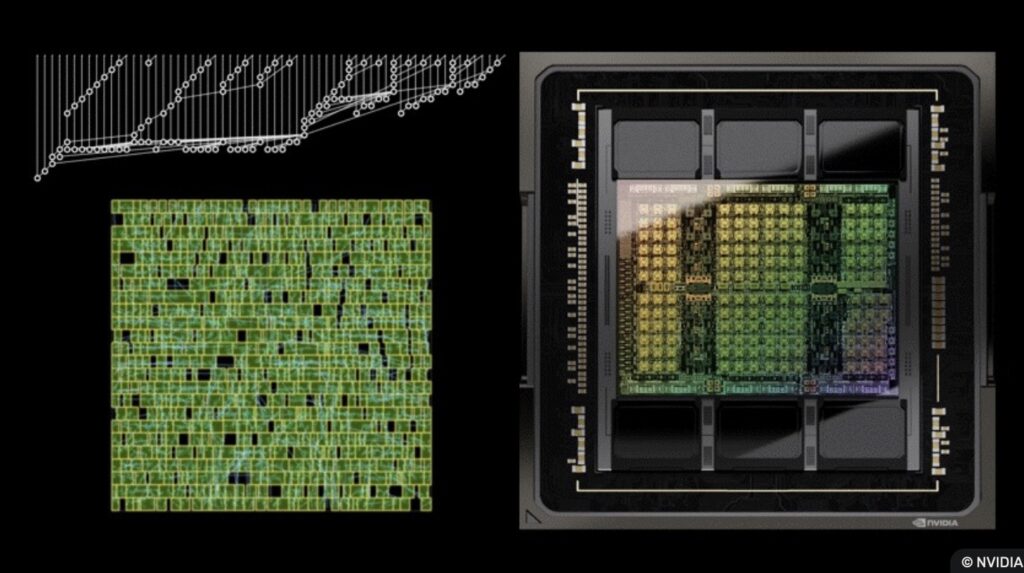

The most striking example of this transformation is an internal reinforcement learning-based tool known as NVCell. Every time a chip company adopts a new semiconductor manufacturing process, it must port its standard cell library, a collection of roughly 2,500 to 3,000 distinct cells. Historically, this required a team of eight engineers working for 10 months, or 80 person-months of effort. Today, Dally notes that NVCell (now in its second or third iteration) accomplishes the same task overnight on a single GPU. This speed does not come at the cost of quality: the AI-generated cells routinely match or exceed human designs on critical metrics such as cell size, power dissipation, and delay. The result is a large productivity gain that removes a major obstacle to adopting new manufacturing processes.

NVIDIA’s use of AI goes beyond automation and speed; it is also producing designs that human intuition would not reach. Dally highlighted another internal tool, PrefixRL, which tackles the complex, long-studied problem of placing look-ahead stages within a carry lookahead chain. Rather than mimicking human design patterns, PrefixRL produces highly unconventional layouts that, in Dally’s words, “no human would ever come up with.” These designs are not just novelties: they improve key metrics by 20% to 30% compared with traditional human-led designs. This underscores a broader shift in the tech landscape, in which AI is evolving from a fast worker into a creative collaborator that can explore regions of a vast design space that human designers rarely visit.
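To make the "placing look-ahead stages" problem concrete, here is a minimal Python sketch of carry computation as a prefix problem. It is an illustration only and not NVIDIA's tool or method: the function names (`combine`, `serial_prefix`, `kogge_stone_prefix`, `add_with`) are hypothetical, and the two networks shown are textbook structures. Both compute the same carries, but they trade the number of combine nodes against logic depth, which is the kind of design space a placement search has to explore.

```python
from typing import Callable, List, Tuple

GP = Tuple[int, int]  # (generate, propagate) signals for a range of bits


def combine(hi: GP, lo: GP) -> GP:
    """Merge two adjacent bit ranges; `hi` covers the more significant bits."""
    g_hi, p_hi = hi
    g_lo, p_lo = lo
    return (g_hi | (p_hi & g_lo), p_hi & p_lo)


def bit_signals(a: int, b: int, width: int) -> List[GP]:
    """Per-bit generate (a AND b) and propagate (a XOR b) signals."""
    return [(((a >> i) & (b >> i)) & 1, ((a >> i) ^ (b >> i)) & 1)
            for i in range(width)]


def serial_prefix(gp: List[GP]) -> Tuple[List[GP], int, int]:
    """Ripple-style chain: fewest combine nodes, but depth grows linearly."""
    out = [gp[0]]
    for i in range(1, len(gp)):
        out.append(combine(gp[i], out[i - 1]))
    return out, len(gp) - 1, len(gp) - 1  # (prefixes, node count, depth)


def kogge_stone_prefix(gp: List[GP]) -> Tuple[List[GP], int, int]:
    """Kogge-Stone network: logarithmic depth at the cost of more nodes."""
    out, nodes, depth, dist = list(gp), 0, 0, 1
    while dist < len(out):
        nxt = list(out)
        for i in range(dist, len(out)):
            nxt[i] = combine(out[i], out[i - dist])
            nodes += 1
        out, depth, dist = nxt, depth + 1, dist * 2
    return out, nodes, depth


def add_with(prefix_fn: Callable, a: int, b: int, width: int):
    """Add two unsigned integers using the carries from a prefix network."""
    gp = bit_signals(a, b, width)
    prefixes, nodes, depth = prefix_fn(gp)
    carries = [0] + [g for g, _ in prefixes]  # carry into each bit, carry-in 0
    total = carries[width] << width
    for i in range(width):
        total |= (gp[i][1] ^ carries[i]) << i
    return total, nodes, depth


if __name__ == "__main__":
    for fn in (serial_prefix, kogge_stone_prefix):
        total, nodes, depth = add_with(fn, 200, 100, 8)
        print(fn.__name__, total, "nodes:", nodes, "depth:", depth)
```

For an 8-bit adder the serial chain uses 7 combine nodes at depth 7, while Kogge-Stone uses 17 nodes at depth 3. Many other valid networks lie between and beyond these two, and real designs are judged on area, power, and delay after physical synthesis rather than on node counts alone.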

Beyond the silicon itself, NVIDIA is using AI to support its engineers and make better use of its internal knowledge base. The company has trained proprietary large language models (LLMs), dubbed ChipNeMo and BugNeMo, on decades of highly confidential internal data, including RTL (register-transfer level) code and historical GPU architecture documents. In an industry where there is growing anxiety about AI replacing entry-level jobs, NVIDIA’s approach offers a counter-narrative. Junior engineers can query ChipNeMo to understand how specific, complex architectural blocks function. This gives newer employees an always-available expert mentor, speeding up their onboarding while freeing senior designers from answering repetitive questions. Meanwhile, BugNeMo helps summarize complex bug reports and route them to the correct module or engineer.

While these advancements paint a picture of a radically transformed industry, Dally remains grounded in the reality of the current technology. He was careful to note that a fully automated, end-to-end chip design process is still a long way off. Human ingenuity, oversight, and high-level architectural vision remain irreplaceable. What NVIDIA has achieved, however, is a powerful symbiotic relationship where AI clears away the most tedious roadblocks, discovers hidden efficiencies, and empowers engineers to focus on the next great leap forward in computing.