OpenAI’s CEO pivots to the very gatekeeping tactics he recently mocked, locking a powerful new frontier model behind a velvet rope.

- Exclusive Access: OpenAI is launching GPT-5.5-Cyber, a specialized frontier model for cybersecurity, with a limited rollout restricted exclusively to vetted “critical cyber defenders” and government entities.

- A Reversal in Rhetoric: The gated release closely mimics Anthropic’s restrictive rollout of Claude Mythos—a strategy OpenAI CEO Sam Altman publicly criticized just weeks ago as “selling fear” and building a “$100 million bomb shelter.”

- Proven Lethality: Independent testing by the UK’s AI Security Institute (AISI) found the model to be one of the strongest it has tested on cyber tasks, and only the second system it has evaluated that can complete a multi-step attack simulation from start to finish.

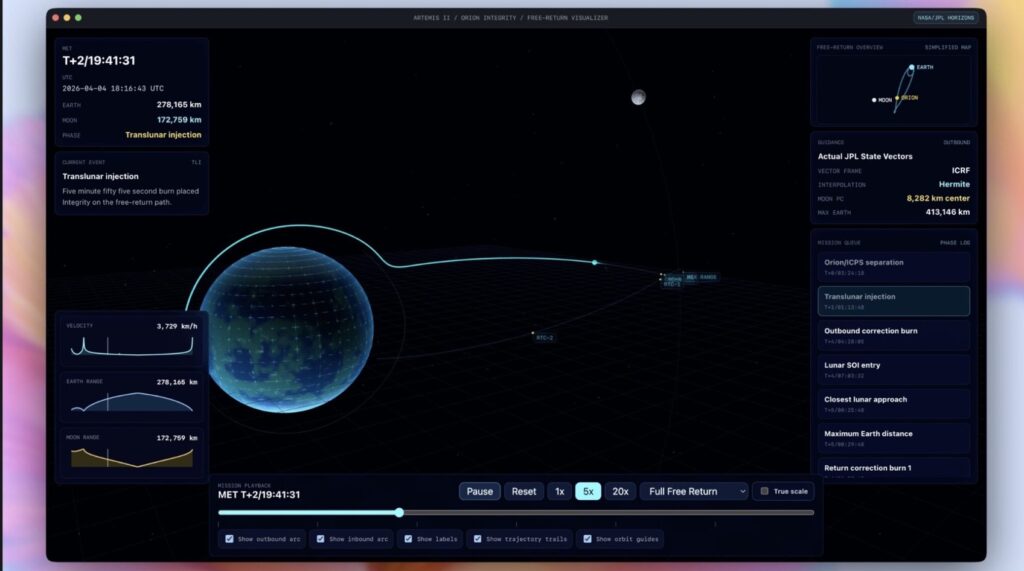

The artificial intelligence arms race has officially entered its cybersecurity era, and the velvet ropes are going up. In a move that highlights the growing dual-use dangers of frontier AI, OpenAI is preparing to release a restricted, highly specialized security model dubbed GPT-5.5-Cyber. According to an announcement from CEO Sam Altman on X, the limited rollout will begin “in the next few days.” The stated mission is urgent and collaborative: “We will work with the entire ecosystem and the government to figure out trusted access for cyber,” Altman wrote, noting the company’s desire to “rapidly help secure companies and infrastructure.”

Yet OpenAI’s sudden embrace of exclusivity carries a heavy dose of irony. The announcement comes just weeks after rival Anthropic released its own cyber-focused model, Claude Mythos, to a tightly controlled group of roughly 50 organizations. At the time, Altman was notably unimpressed by Anthropic’s caution. Appearing on the Core Memory podcast, he took a thinly veiled swipe at what he framed as exclusivity masquerading as safety. “There are people in the world who, for a long time, have wanted to keep AI in the hands of a smaller group of people,” he remarked, likening the approach to selling fear. “We have built a bomb, we are about to drop it on your head. We will sell you a bomb shelter for $100 million.” Fast forward to today, and OpenAI is, if not building the exact same shelter, at least checking IDs at the door.

This pivot toward gatekeeping, however, appears justified by the sheer capability of the technology. GPT-5.5-Cyber is engineered to spot critical flaws before malicious actors can exploit them. The model is reportedly capable of penetrating systems, finding bugs, writing exploits, and tearing apart complex malware. This isn’t just marketing fluff; independent validation backs up OpenAI’s claims. The UK’s AI Security Institute (AISI) recently stated that GPT-5.5-Cyber is “one of the strongest models we have tested on our cyber tasks.” Crucially, the AISI noted that it is only the second system they have evaluated that can complete a complex, multi-step attack simulation from start to finish. As cybersecurity professionals know well, tools designed to break systems rarely stay in the right hands for long, prompting this aggressive shift toward access control.

Despite the model’s formidable reputation, OpenAI is keeping its cards close to its chest regarding the underlying technology. We do know that the broader parent model, GPT-5.5—touted for its efficiency and prowess in coding and enterprise workflows—was rolled out to ChatGPT Plus, Pro, Business, and Enterprise tiers earlier in April. However, OpenAI notably delayed an API release for the flagship GPT-5.5 specifically to study its security implications.

For cybersecurity practitioners on the ground, this specialized variant raises a host of operational questions that go beyond model architecture. Early partners will have to navigate access control and vetting workflows, establish secure logging and telemetry, and figure out integration points for existing SIEM (Security Information and Event Management) and SOAR (Security Orchestration, Automation, and Response) tools. OpenAI has not specified how these access controls will be implemented, whether through API gating, private-cloud deployments, or strict on-premises constraints, so these remain open design choices for the industry to solve.
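To make those open questions concrete, here is a minimal sketch of what API-style gating with SIEM-friendly audit logging could look like on a partner's side. It is purely illustrative: OpenAI has published no such interface, and every name in it (`VETTED_ORGS`, `AccessRequest`, `authorize`, `audit_event`, `handle`) is a hypothetical placeholder, not part of any real product.

```python
import json
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical allowlist of vetted organizations (an assumption for
# illustration; this is not an OpenAI list or mechanism).
VETTED_ORGS = {"example-cert", "example-utility"}


@dataclass
class AccessRequest:
    org_id: str   # organization asking for model access
    user: str     # individual analyst making the request
    purpose: str  # free-text justification, kept for later review


def authorize(request: AccessRequest) -> bool:
    """Allow the request only if the organization is on the allowlist."""
    return request.org_id in VETTED_ORGS


def audit_event(request: AccessRequest, allowed: bool) -> str:
    """Build one JSON line that a SIEM pipeline could ingest."""
    event = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "org_id": request.org_id,
        "user": request.user,
        "purpose": request.purpose,
        "decision": "allow" if allowed else "deny",
    }
    return json.dumps(event, sort_keys=True)


def handle(request: AccessRequest) -> tuple[bool, str]:
    """Make the access decision and always emit an audit record for it."""
    allowed = authorize(request)
    return allowed, audit_event(request, allowed)


if __name__ == "__main__":
    ok, line = handle(AccessRequest("example-cert", "analyst1", "malware triage"))
    print(ok, line)
```

The one design choice worth noting is that denied requests are logged just like approved ones, since failed attempts to reach a restricted tool are often the more interesting signal for a security team.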

The industry will be watching closely to see exactly who makes it past OpenAI’s velvet rope. Will access be limited strictly to government CERTs and critical-infrastructure operators in energy, finance, and healthcare, or will private security vendors also get a seat at the table? The regulatory response will be telling, too. The Verge previously reported that the White House took a keen interest in the release of Anthropic’s Claude Mythos, and it is highly likely that OpenAI’s GPT-5.5-Cyber will face similar scrutiny. As AI vendors increasingly focus on explicit cyber-defender use cases, the broader industry is being forced to navigate a precarious trade-off: wider availability accelerates innovation, but restricted access is, for now, the main defense against putting automated vulnerability discovery and large-scale attack tooling in anyone’s hands.