How a novel “Semantic Progress Function” smooths out abrupt transitions and gives AI-generated video a steadier sense of pacing.

- The Pacing Problem: AI video generators frequently struggle with temporal consistency, often outputting sequences with long periods of stagnation followed by jarring, abrupt transformations.

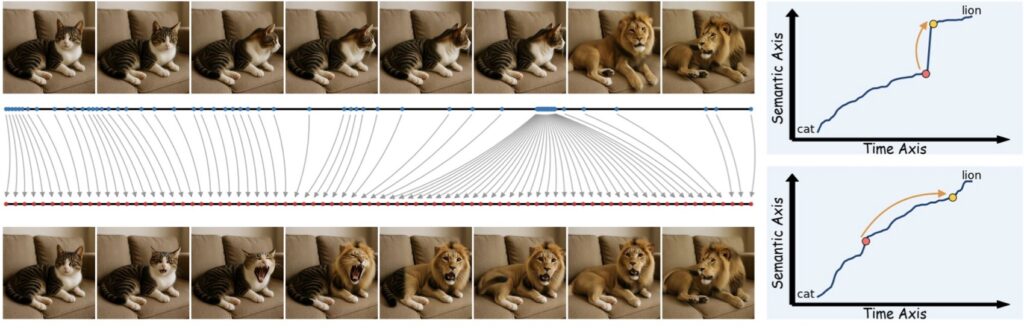

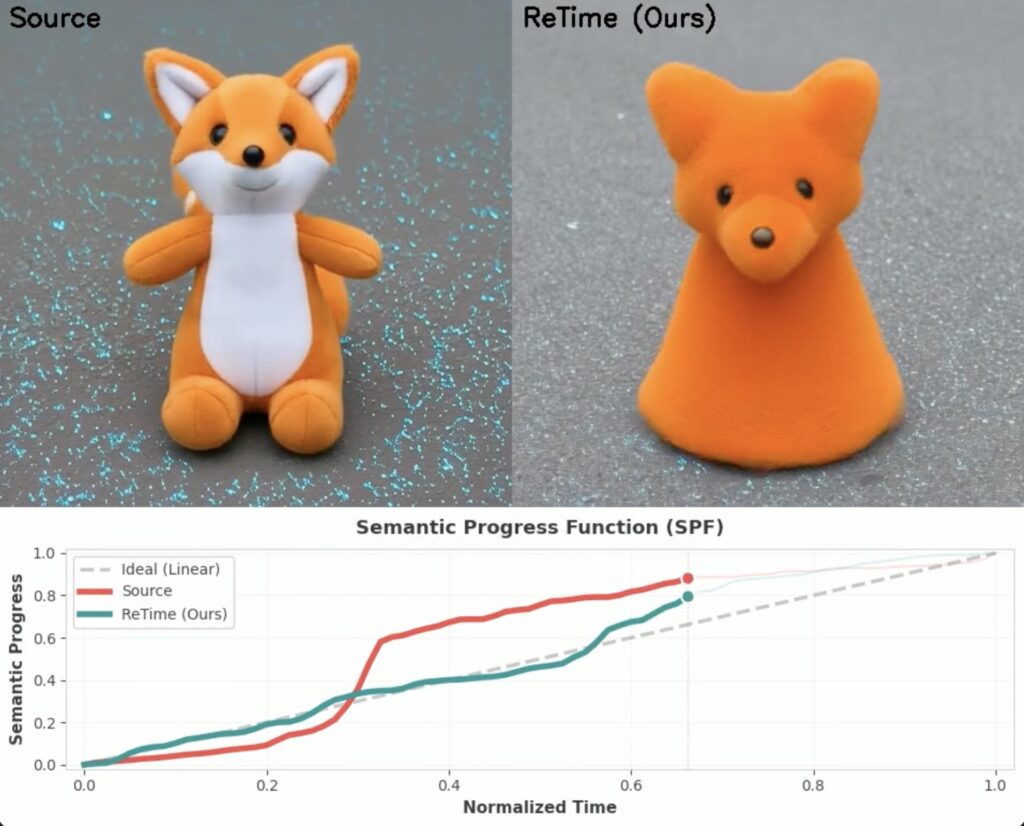

- A New Diagnostic Yardstick: The “Semantic Progress Function” (SPF) maps a video’s evolution onto a one-dimensional curve, making the rate of meaningful change across frames measurable.

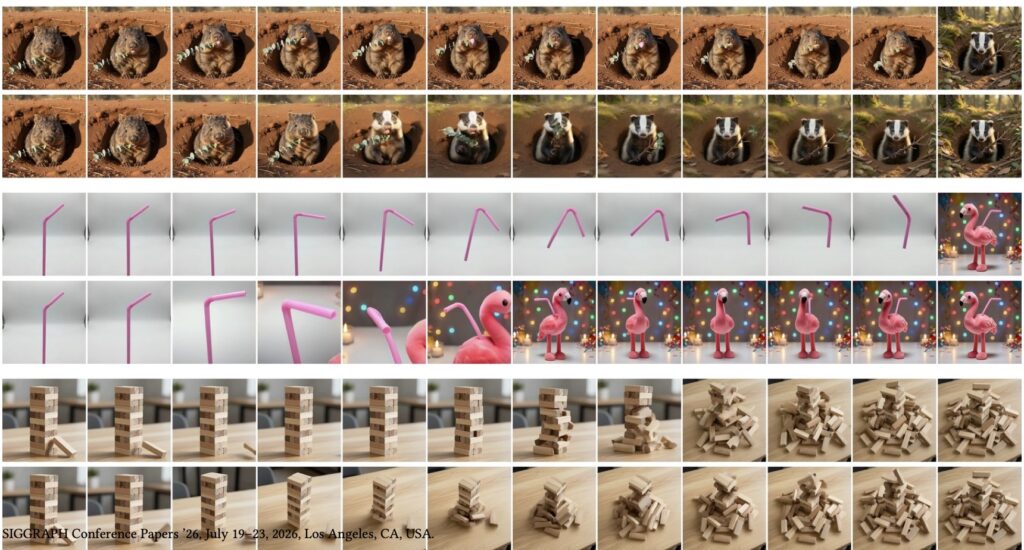

- Smooth Sailing: By using SPF to “linearize” semantic progress, developers can retime videos to unfold at a constant, predictable rate—both during generation and post-production—without retraining their models.

The generative AI revolution is rapidly transforming visual media. Today, generative models are tasked with producing complex image and video sequences meant to depict gradual transformations, such as cinematic morphs, style edits, object evolution, and dramatic product reveals. These first-to-last frame generations are not just academic novelties; they are actively deployed in commercial tools and utilized for artistic visual effects (VFX) and seamless looping videos. However, creators frequently encounter a frustrating roadblock: artificial intelligence has a terrible sense of pacing.

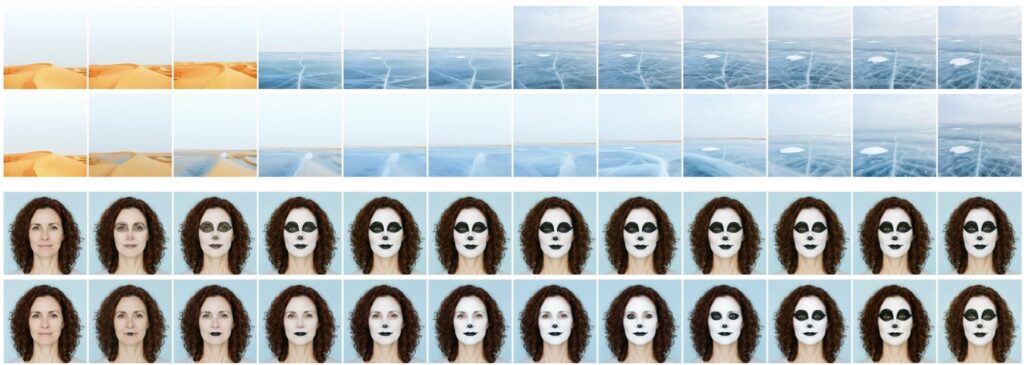

The meaning of these generated sequences often evolves very unevenly. Viewers might endure long stretches where the visual content barely shifts, only to be hit with a sudden jump where the transformation “catches up” to where it needs to be. This non-linear semantic evolution is more than a visual annoyance: it undermines the perceptual coherence of the video, reduces a creator’s ability to control the output, and complicates downstream editing. While prior research has addressed basic temporal smoothness and latent-space interpolation, the field has lacked a principled measure of how the actual meaning (the semantic content) evolves over a sequence. Without one, there has been no way to diagnose where these abrupt shifts occur, let alone to compare pacing across different AI generators.

To solve this, researchers have introduced a new conceptual tool: the Semantic Progress Function (SPF). The SPF characterizes how meaning evolves over time within a video sequence. By reducing a complex visual transformation to a simple, interpretable, one-dimensional trajectory, it captures the cumulative semantic state of a sequence. To build the function, distances between the semantic embeddings of the frames are computed, and a smooth curve is fitted that reflects their sequential ordering. In a uniformly paced video, this curve would be a straight line. Any departure from that line therefore reveals uneven semantic pacing, showing where, and by how much, a sequence deviates from a smooth evolution.
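To make the idea concrete, here is a minimal sketch of a progress curve. It is not the researchers’ method: it assumes per-frame embeddings are already available as a NumPy array, and it swaps the fitted smooth curve for a simpler stand-in, the normalized running sum of cosine distances between consecutive frames. The function names `semantic_progress` and `pacing_deviation` are hypothetical and chosen for illustration only.

```python
import numpy as np


def semantic_progress(embeddings: np.ndarray) -> np.ndarray:
    """Return a non-decreasing progress value in [0, 1] for every frame.

    Assumption: `embeddings` has shape (num_frames, dim) and comes from
    some frame-level semantic encoder. This is a simplified stand-in for
    the fitted curve described in the article.
    """
    # Scale every frame embedding to unit length.
    unit = embeddings / np.linalg.norm(embeddings, axis=1, keepdims=True)
    # Cosine distance between each frame and the next one.
    step = 1.0 - np.sum(unit[:-1] * unit[1:], axis=1)
    step = np.clip(step, 0.0, None)  # guard against tiny negative round-off
    # Cumulative distance travelled, starting at zero for the first frame.
    progress = np.concatenate([[0.0], np.cumsum(step)])
    total = progress[-1]
    if total == 0.0:
        # A completely static sequence makes no semantic progress.
        return np.zeros(len(embeddings))
    return progress / total


def pacing_deviation(progress: np.ndarray) -> float:
    """Largest gap between the progress curve and the ideal straight line."""
    ideal = np.linspace(0.0, 1.0, len(progress))
    return float(np.max(np.abs(progress - ideal)))
```

Under these assumptions, a sequence whose embeddings move by the same amount at every step gives a deviation close to zero, while a sequence that stalls and then jumps in the final frame gives a large one.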

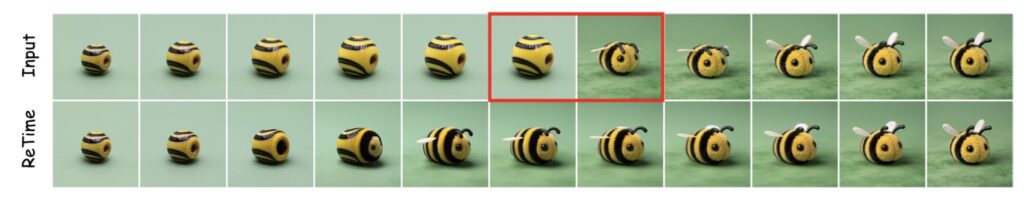

Identifying the problem is only half the battle; the SPF also points to a fix. Building on this diagnostic foundation, the researchers proposed “semantic linearization,” a procedure that reparameterizes, or retimes, the sequence so that semantic change unfolds at a constant rate. The framework offers two complementary methods for achieving this: direct intervention during generation (by temporally warping the model’s positional embeddings) and post-hoc linearization of existing, already-rendered videos through segmented regeneration. The framework is model-agnostic, so these techniques yield smoother, more coherent transitions without costly model retraining or tedious manual frame annotation.
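The retiming step can be illustrated with a small sketch. It covers only the reparameterization itself: given a progress curve with one value per frame, it finds the positions on the original timeline where progress reaches evenly spaced targets. It does not implement positional-embedding warping or segmented regeneration, and the function name `linearized_frame_times` and the tie-breaking constant are assumptions made for this example.

```python
import numpy as np


def linearized_frame_times(progress: np.ndarray, num_out: int) -> np.ndarray:
    """Map evenly spaced progress targets back to source-frame positions.

    Returns `num_out` fractional frame indices. Sampling the source at
    these positions makes semantic progress advance at a constant rate.
    """
    progress = np.asarray(progress, dtype=float)
    frame_idx = np.arange(len(progress), dtype=float)
    # Enforce a non-decreasing curve, then add a tiny ramp so that flat
    # stretches become strictly increasing and the curve can be inverted.
    p = np.maximum.accumulate(progress) + 1e-9 * frame_idx
    # Rescale so the curve runs exactly from 0 to 1.
    p = (p - p[0]) / (p[-1] - p[0])
    # Invert the curve: for each target progress, find the frame position.
    targets = np.linspace(0.0, 1.0, num_out)
    return np.interp(targets, p, frame_idx)


# Example: most of the change happens between frames 2 and 3.
times = linearized_frame_times(np.array([0.0, 0.1, 0.2, 0.9, 1.0]), num_out=5)
```

In this example the returned positions cluster between frames 2 and 3, which is where the original sequence rushed through most of its change. Fractional positions do not correspond to existing frames, so a real pipeline would still need to generate or interpolate content at those times.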

Of course, no analytical tool is without its hurdles. Because the current semantic analysis relies on frame-level embeddings, the SPF can occasionally be “tricked.” Rapid camera movements, dramatic lighting shifts, or large changes in appearance that aren’t tied to the core meaning of the scene can all shift a frame’s position in the embedding space. In these highly dynamic scenarios, the progress function may end up measuring superficial perceptual changes rather than semantic evolution. Furthermore, the iterative refinement used to warp temporal embeddings can progressively push them away from the distribution the model was trained on; push them too far with too many iterations, and the overall output quality may degrade.

Despite these limitations, the Semantic Progress Function opens up an exciting new frontier for video generation. Future developments aim to incorporate motion-aware embeddings to better handle dynamic scenes and to disentangle complex semantic factors—allowing creators to independently control pacing for identity, style, and geometry. Beyond directly fixing videos, the SPF serves as a foundational metric for the industry. It can be used to benchmark the temporal behavior of different generative models, automate video summarization by identifying keyframes, and generate smart thumbnails. Ultimately, by providing a way to generate uniformly paced morphs, this framework acts as a vital data-generation tool, paving the way for the next generation of highly controllable, perfectly paced AI video models.