From dominating high school puzzles to cracking decades-old conjectures, artificial intelligence has crossed the threshold from novelty to indispensable research partner.

- A Rapid Evolution: After shocking experts by solving five of six problems at the 2025 International Mathematical Olympiad, AI models quickly moved from solving known puzzles to tackling open, research-tier questions by early 2026.

- Accelerating Discovery: Mathematicians are now actively collaborating with large language models (LLMs) to formulate conjectures, resolve decades-old open problems, and uncover hidden geometric structures that have gone unnoticed for half a century.

- A Cultural Crossroads: While AI is unlikely to fully replace human mathematicians, its rapid ascent poses an existential challenge to traditional mathematics education and threatens to flood academia with AI-generated errors unless countered by autoformalization.

The tipping point arrived in the summer of 2025. When artificial intelligence models solved five of the six problems at the International Mathematical Olympiad, the mathematical community was forced to pay attention. Olympiad problems, while notoriously difficult, are essentially closed-loop puzzles with known answers. But the sheer speed of AI’s improvement prompted researchers to test these models against the unknown.

What they found permanently altered the landscape of the discipline. By early 2026, mathematicians weren’t just playing with algorithms; they were using them to break genuinely new ground. “2025 was the year when AI really started being useful for many different tasks,” noted Fields Medalist Terence Tao of UCLA. Today, algorithms are formulating conjectures, drafting proofs, and enabling researchers to accomplish in hours what used to take months.

From glorified search engines to conversational partners

Prior to 2025, AI’s primary utility in mathematics was essentially as a high-powered search engine—resurfacing forgotten proofs or connecting disparate literature better than traditional semantic searches. But as models grew more sophisticated, they evolved into viable, if eccentric, conversation partners.

Researchers discovered that while LLMs frequently made basic mathematical errors, they were simultaneously capable of generating subtle, highly original ideas. Ernest Ryu, an applied mathematician at UCLA specializing in optimization theory, experienced this firsthand when he set out to solve a 42-year-old open problem proposed by Russian mathematician Yurii Nesterov in 1983. The problem involved determining whether a specific gradient descent algorithm would consistently converge on an optimal value without overshooting.

Ryu engaged ChatGPT in a rapid-fire iterative process. “It kept giving me incorrect proofs,” Ryu explained, but the errors contained “correct partial results that seemed potentially useful.” Acting as a human verifier, Ryu fed the good parts back into the model. Within three days and about 12 hours of active work, he had proved that Nesterov’s method converges—a breakthrough that led to Ryu joining OpenAI shortly after.
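For readers who want a concrete picture of the kind of algorithm involved, the following Python sketch runs a common textbook form of Nesterov's accelerated gradient method on a toy two-variable quadratic. It is an illustration only: the test function, step size, iteration count, and all function names are assumptions chosen for this example, and the exact variant and convergence statement that Ryu analyzed are not specified here.

```python
import math

def nesterov_accelerated_gradient(grad, x0, step, iters):
    """Textbook accelerated gradient scheme with a momentum (look-ahead) point y."""
    x = list(x0)
    y = list(x0)
    t = 1.0
    for _ in range(iters):
        g = grad(y)
        # Plain gradient step, taken from the look-ahead point y.
        x_next = [yi - step * gi for yi, gi in zip(y, g)]
        # Standard momentum-weight recursion.
        t_next = (1.0 + math.sqrt(1.0 + 4.0 * t * t)) / 2.0
        beta = (t - 1.0) / t_next
        # Extrapolate past x_next along the direction of the latest move.
        y = [xn + beta * (xn - xo) for xn, xo in zip(x_next, x)]
        x, t = x_next, t_next
    return x

# Hypothetical toy objective: f(x) = 0.5 * (1 * x1^2 + 100 * x2^2), minimized at (0, 0).
CURVATURES = (1.0, 100.0)

def objective(x):
    return 0.5 * sum(c * xi * xi for c, xi in zip(CURVATURES, x))

def gradient(x):
    return [c * xi for c, xi in zip(CURVATURES, x)]

x_final = nesterov_accelerated_gradient(
    gradient, [1.0, 1.0], step=1.0 / max(CURVATURES), iters=200
)
print(objective(x_final))
```

On this toy problem the printed objective value is close to zero, the minimum. A numerical run like this only shows behavior on one example; it cannot settle what holds in general, which is what a proof is for.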

Uncovering hidden architecture

AI is not just verifying human intuition; it is highlighting mathematical structures that humans simply missed.

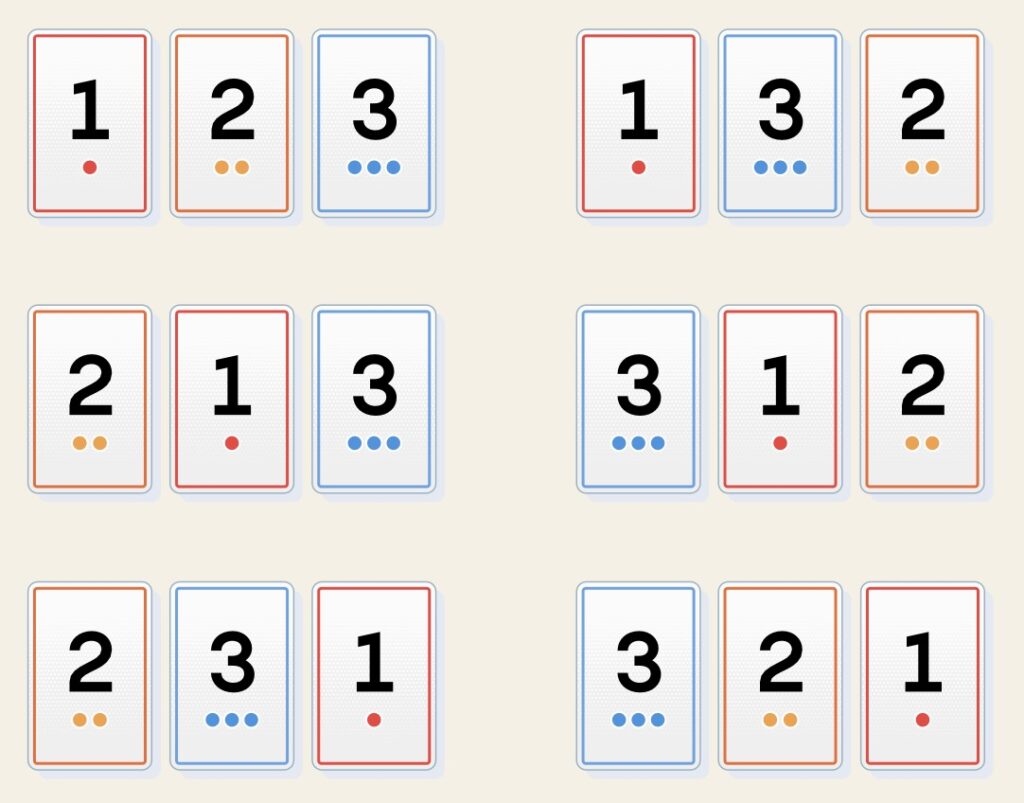

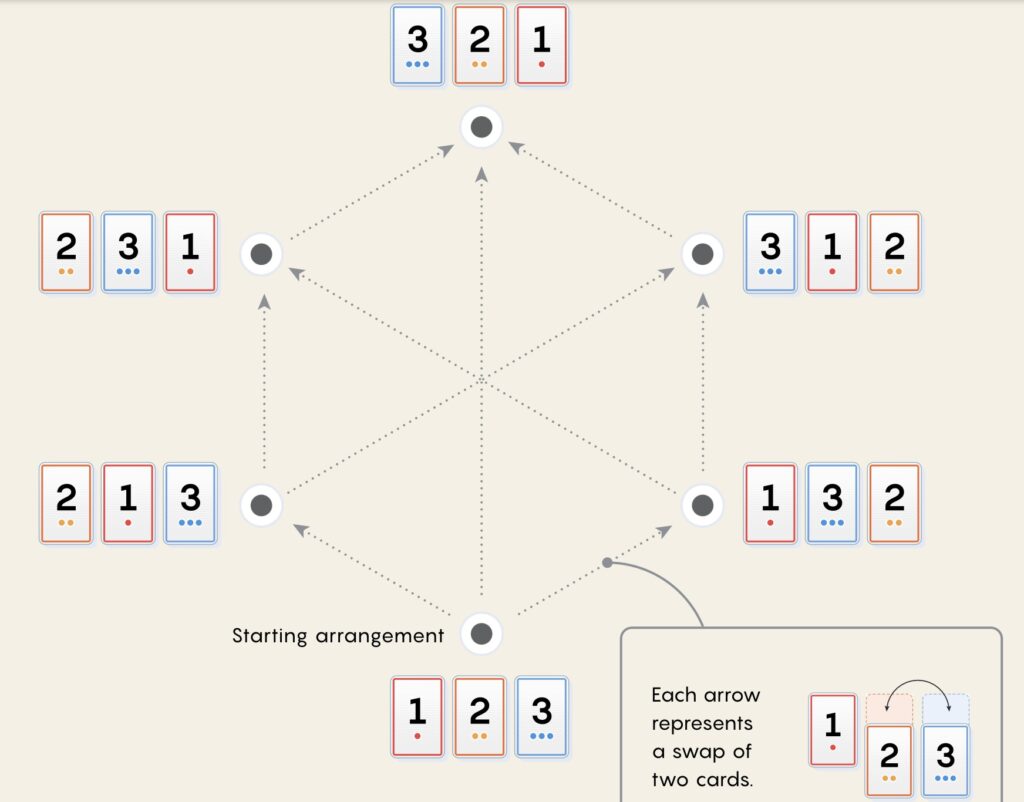

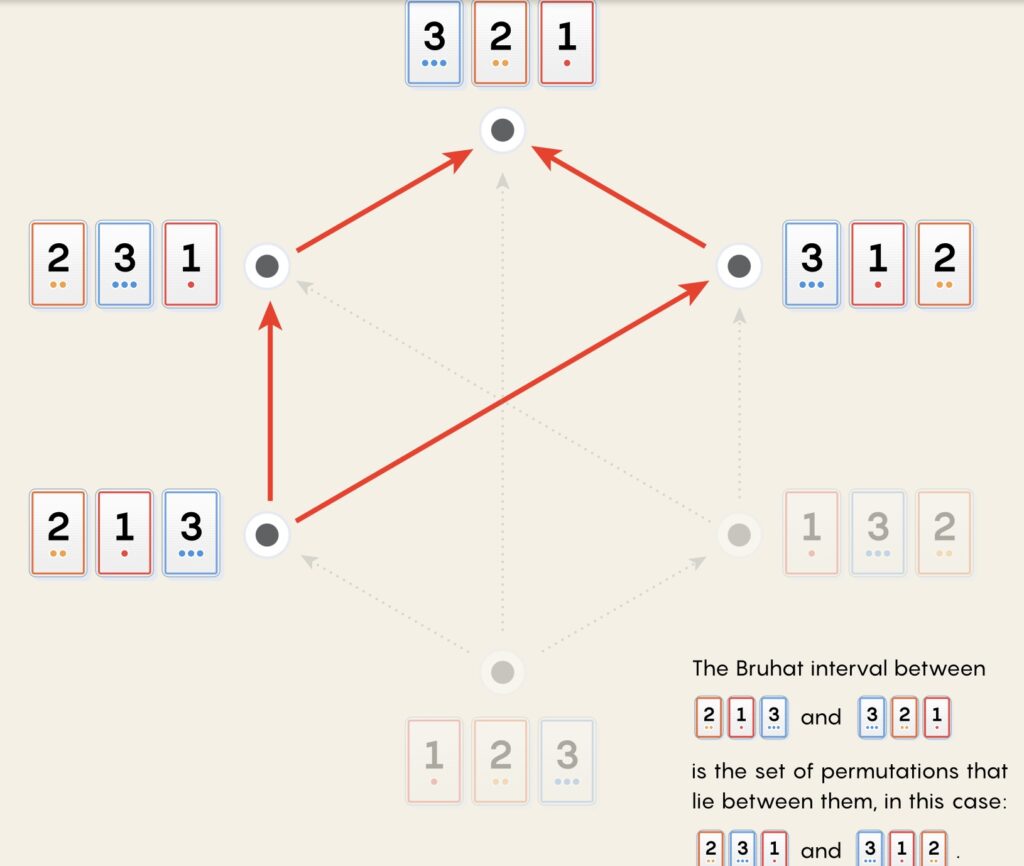

In late 2025, a global team of mathematicians, including Geordie Williamson and Jordan Ellenberg, used DeepMind’s AlphaEvolve to analyze “Bruhat intervals,” highly complex structures related to permutation groups (the mathematical description of how items can be shuffled).

Left to run overnight, the AI engaged in a bizarre computational strategy it referred to as a “Crazy Ivan” maneuver. It ultimately generated 50 lines of Python code that revealed a stunning reality: the Bruhat intervals in specific permutation groups formed higher-dimensional cubes, or hypercubes.

“It’s a structure that’s been sitting there for 50 years in front of our nose. We just hadn’t noticed it,” Williamson said. Unlike older machine learning methods that required deep coding expertise, modern AI allowed the team to execute these exploratory experiments in 20 minutes.
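To make the objects concrete, here is a minimal Python sketch that enumerates a Bruhat interval in a small permutation group and counts its elements by rank. It is not the code AlphaEvolve produced. The function names are hypothetical, the comparison uses the standard rank-matrix test for Bruhat order, and the cases shown were chosen only for illustration. Rank counts that match a row of Pascal's triangle are what a hypercube would require, but matching them does not by itself prove the interval is a hypercube.

```python
from collections import Counter
from itertools import permutations

def inversions(w):
    """Number of inversions of w, which is its rank in Bruhat order."""
    n = len(w)
    return sum(1 for i in range(n) for j in range(i + 1, n) if w[i] > w[j])

def bruhat_leq(u, v):
    """Rank-matrix test: u <= v iff, for all i and j, the first i entries
    of u contain no more values >= j than the first i entries of v."""
    n = len(u)
    for i in range(1, n + 1):
        for j in range(1, n + 1):
            count_u = sum(1 for a in u[:i] if a >= j)
            count_v = sum(1 for a in v[:i] if a >= j)
            if count_u > count_v:
                return False
    return True

def bruhat_interval(u, v):
    """All permutations w with u <= w <= v (brute force, small n only)."""
    n = len(u)
    return [w for w in permutations(range(1, n + 1))
            if bruhat_leq(u, w) and bruhat_leq(w, v)]

def rank_counts(u, v):
    """How many elements of the interval [u, v] sit at each rank above u."""
    base = inversions(u)
    counts = Counter(inversions(w) - base for w in bruhat_interval(u, v))
    return [counts[k] for k in range(inversions(v) - base + 1)]

identity = (1, 2, 3)
print(rank_counts(identity, (2, 3, 1)))  # [1, 2, 1]: the counts of a square
print(rank_counts(identity, (3, 2, 1)))  # [1, 2, 2, 1]: not the counts of a cube
```

The brute-force search checks every permutation, so it is practical only for very small groups.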

Similarly, Stanford’s Ravi Vakil utilized AI to explore algebraic geometry, specifically how spheres embed in spaces known as flag varieties. When the researchers worked with an AI to prove a simpler case of their problem, the model produced an argument so elegant and clear that it illuminated the path to solving the general, infinite case.

The ocean of slop and the death of homework

Despite these triumphs, the mathematical community is bracing for the fallout. AI’s tendency to hallucinate has triggered fears of an “ocean of slop” overwhelming academic journals.

To combat this, the field is turning to formal proofs—translating math into strict code that computers can systematically verify. Because manually translating math into code is painstakingly slow, researchers are hoping for “autoformalization,” where AI models both translate and verify the logic simultaneously, effectively acting as their own guardrails.
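As a small illustration of what machine-checkable mathematics looks like, here is a one-line theorem written in Lean 4. The choice of Lean is an assumption made for this example, since no particular proof assistant is named above, and the theorem name `add_comm_example` is hypothetical. The proof appeals to `Nat.add_comm`, a lemma in Lean's core library.

```lean
-- Statement: adding two natural numbers gives the same result in either order.
-- Lean accepts the file only if the proof term really establishes the statement.
theorem add_comm_example (a b : Nat) : a + b = b + a :=
  Nat.add_comm a b
```

If the proof were wrong, the checker would reject it. That mechanical rejection is the guardrail that formal verification offers against hallucinated arguments.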

Perhaps the most immediate crisis, however, is pedagogical. The very tools accelerating professional research are short-circuiting student development. “Many of the problems we assign, AI can solve instantly,” Tao warned. “This can discourage a lot of the students from building up their mental muscles.” Professors are abandoning traditional homework entirely in favor of in-class, supervised work, fearing that AI’s shortcuts will prevent the current generation of students from ever becoming the next generation of researchers.

Jumping robots and the Everest of math

No one expects AI to render human mathematicians obsolete anytime soon. Terence Tao likens the current state of AI to “jumping robots” attempting to navigate a vast mountain range. While a robot might parkour its way up a six-foot wall that stumps a human, it lacks the long-term strategic vision required to summit Mount Everest. Deep, structural mysteries—like the fractional properties of transcendental numbers—will likely remain exclusively human domains for centuries.

Furthermore, mathematics is not purely computational; it is an art form driven by human aesthetics. As Fields Medalist Akshay Venkatesh points out, there are infinitely many ways to formulate math, and those choices are governed by human values.

At the largest annual mathematics conference, held in Washington, D.C., in early 2026, the mood was a mix of awe and existential unease. Addressing the crowd, Geordie Williamson urged his peers not to react with ignorance or fear, while acknowledging the heavy reality of the moment: AI has irrevocably altered a craft people have dedicated their lives to mastering. The silicon chalkboard is here, and the equations being written on it belong to both man and machine.