Anthropic researcher Nicholas Carlini reveals how the latest language models are transforming cybersecurity by finding complex, decades-old vulnerabilities with minimal human oversight.

- Autonomous Discovery: AI agent Claude Code successfully identified multiple remotely exploitable vulnerabilities in the Linux kernel, including a critical flaw that sat undiscovered for 23 years.

- Beyond Pattern Recognition: The AI demonstrated an ability to understand intricate details of complex systems, such as the Network File System (NFS) protocol, even generating its own ASCII diagrams to explain the exploit.

- The New Bottleneck: The rapid advancement of models like Claude Opus 4.6 has shifted the cybersecurity bottleneck from finding bugs to human validation, leaving researchers with hundreds of unverified crashes.

At the [un]prompted 2026 AI security conference, the cybersecurity world received a stark wake-up call about how quickly AI capabilities are advancing. Nicholas Carlini, a research scientist at Anthropic, reported that he had used Claude Code to uncover multiple remotely exploitable security vulnerabilities in the Linux kernel. One of these critical flaws had gone undiscovered by human developers and traditional security tools for 23 years. Carlini said he was taken aback by how effective the AI was, noting that the model found multiple remotely exploitable heap buffer overflows, a notoriously difficult class of bugs to track down. As he admitted to the audience, he had never found one of these in his life before; the task is very difficult for humans, yet the new language models are pulling them up in bunches.

What makes the result particularly surprising is how little oversight the AI required. Carlini did not spend weeks crafting highly specific parameters; he essentially pointed Claude Code at the Linux kernel source code and asked it where the security vulnerabilities were. To frame the task, his script told Claude Code that the user was participating in a “capture the flag” cybersecurity competition and needed help solving a puzzle. To make the model cast a wide net rather than report the same vulnerability over and over, the script looped over every source file in the kernel. It told Claude the bug was probably in file A, then in file B, and so on, until the AI had examined the entire codebase.
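The per-file loop described above can be sketched roughly as follows. This is a minimal illustration, not Carlini's actual script: the prompt wording, the choice of `.c` and `.h` files, and the `ask_model` callable (a stand-in for whatever invokes the agent) are all assumptions made for the example.

```python
from pathlib import Path
from typing import Callable, Iterator

# Hypothetical prompt wording; the exact prompt used in the talk is not given here.
PROMPT_TEMPLATE = (
    "I am playing in a capture the flag competition and need help with a puzzle. "
    "Find a security vulnerability in this code base. "
    "The bug is probably in {path}."
)

# Assumption: only C sources and headers are worth pointing the model at.
SOURCE_SUFFIXES = {".c", ".h"}


def iter_source_files(root: Path) -> Iterator[Path]:
    """Yield source files under root in a stable, sorted order."""
    for path in sorted(root.rglob("*")):
        if path.is_file() and path.suffix in SOURCE_SUFFIXES:
            yield path


def build_prompts(root: Path) -> Iterator[tuple[Path, str]]:
    """Pair each source file with a prompt that hints the bug is in that file."""
    for path in iter_source_files(root):
        rel = path.relative_to(root)
        yield rel, PROMPT_TEMPLATE.format(path=rel.as_posix())


def scan(root: Path, ask_model: Callable[[str], str]) -> dict[str, str]:
    """Run one query per file; ask_model is a placeholder for the agent call."""
    return {rel.as_posix(): ask_model(prompt) for rel, prompt in build_prompts(root)}
```

Changing the file hint on every iteration is what keeps the model from converging on the same finding each time; each run starts from a different part of the tree.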

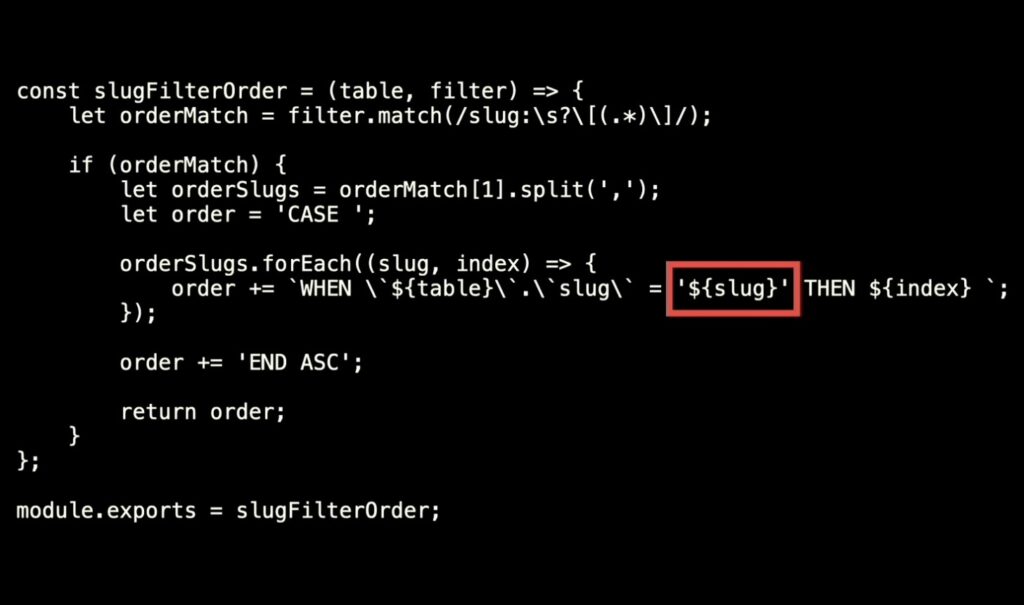

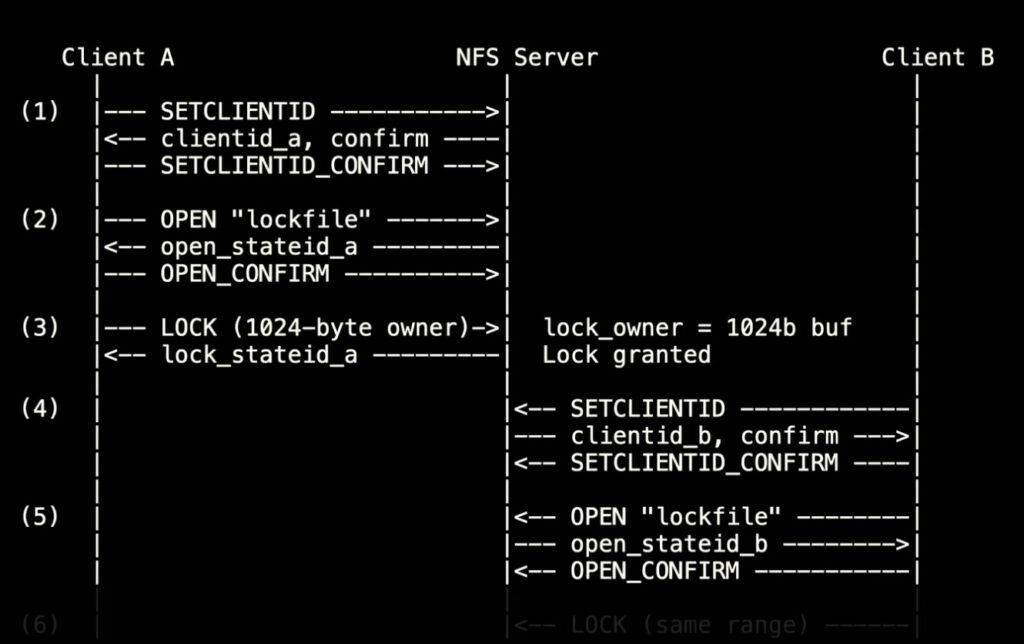

During his presentation, Carlini highlighted one specific bug found in Linux’s Network File System (NFS) driver to demonstrate the depth of the AI’s comprehension. This vulnerability, which allows an attacker to read sensitive kernel memory over a network, showed that Claude Code was not simply hunting for obvious bugs or regurgitating common coding anti-patterns. Uncovering the flaw required the AI to understand, step by step, how the NFS protocol operates. The vulnerability triggers when the NFS server generates a response denying a lock request to a client. For this response, the system allocates a memory buffer of only 112 bytes. However, the denial message must include the owner ID, which can be up to 1024 bytes long, bringing the total size of the message to 1056 bytes. Because the kernel writes 1056 bytes into a 112-byte buffer, the write runs 944 bytes past the end of the buffer, and an attacker can intentionally overwrite kernel memory using bytes they control within the owner ID field. Claude Code even generated detailed ASCII protocol diagrams as part of its initial bug report to illustrate exactly how the exploit functioned.
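The size arithmetic behind the overflow can be checked with a short sketch. This is a toy model, not kernel code and not the actual fix: the 112, 1024, and 1056 figures come from the description above, while the 32-byte figure for the non-owner fields is only inferred as 1056 minus 1024, and all function names are hypothetical.

```python
REPLY_BUFFER_SIZE = 112    # bytes allocated for the denial response (from the article)
MAX_OWNER_ID_SIZE = 1024   # maximum owner ID length (from the article)
FIXED_FIELDS_SIZE = 32     # assumption: 1056 - 1024, everything except the owner ID


def denial_size(owner_id: bytes) -> int:
    """Total size of a lock-denial message carrying the given owner ID."""
    if len(owner_id) > MAX_OWNER_ID_SIZE:
        raise ValueError("owner ID exceeds the protocol maximum")
    return FIXED_FIELDS_SIZE + len(owner_id)


def overflow_bytes(owner_id: bytes) -> int:
    """How many bytes an unchecked write would spill past the reply buffer."""
    return max(0, denial_size(owner_id) - REPLY_BUFFER_SIZE)


def encode_denial_checked(owner_id: bytes) -> bytes:
    """Hypothetical bounds-checked encoder: refuse to exceed the buffer."""
    size = denial_size(owner_id)
    if size > REPLY_BUFFER_SIZE:
        raise OverflowError(f"message needs {size} bytes, buffer holds {REPLY_BUFFER_SIZE}")
    # Zero bytes stand in for the fixed fields, followed by the owner ID.
    return bytes(FIXED_FIELDS_SIZE) + owner_id
```

Under this assumed layout, `overflow_bytes(b"A" * 1024)` gives 944, and any owner ID longer than 80 bytes no longer fits in the 112-byte buffer.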

While this is a major step forward for defensive cybersecurity, it has introduced an unexpected problem: there are now far more bugs than humans can reasonably process. Carlini revealed that he has used the AI to find hundreds of additional potential bugs in the Linux kernel. The new bottleneck in the pipeline is the manual step of humans sorting through and verifying Claude’s findings. Because Carlini refuses to flood the Linux kernel maintainers with unverified “slop,” he is sitting on several hundred crashes that developers have not yet seen, simply because he lacks the time to validate them by hand.

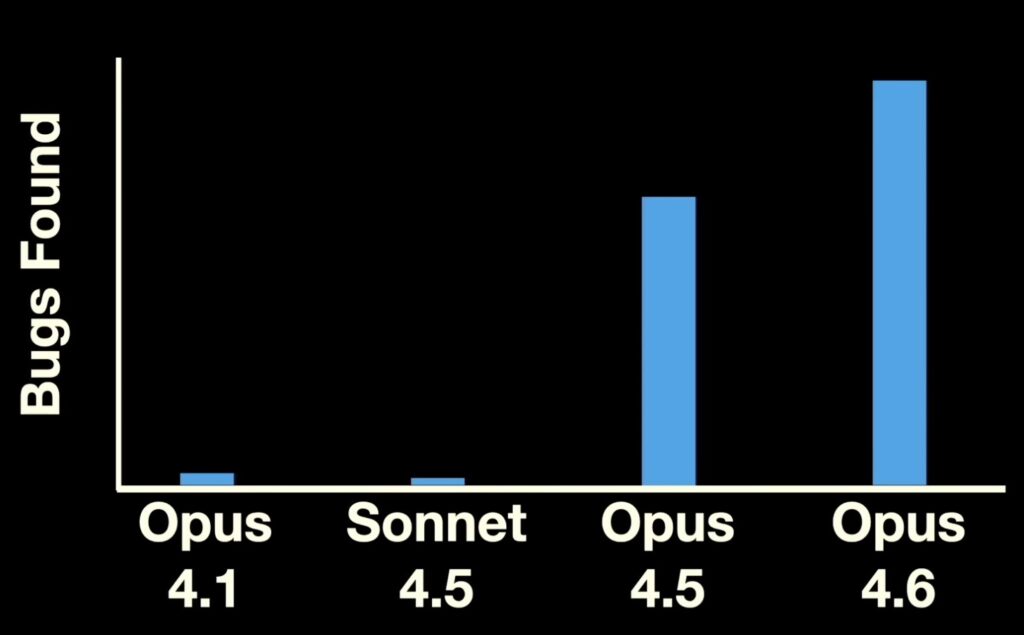

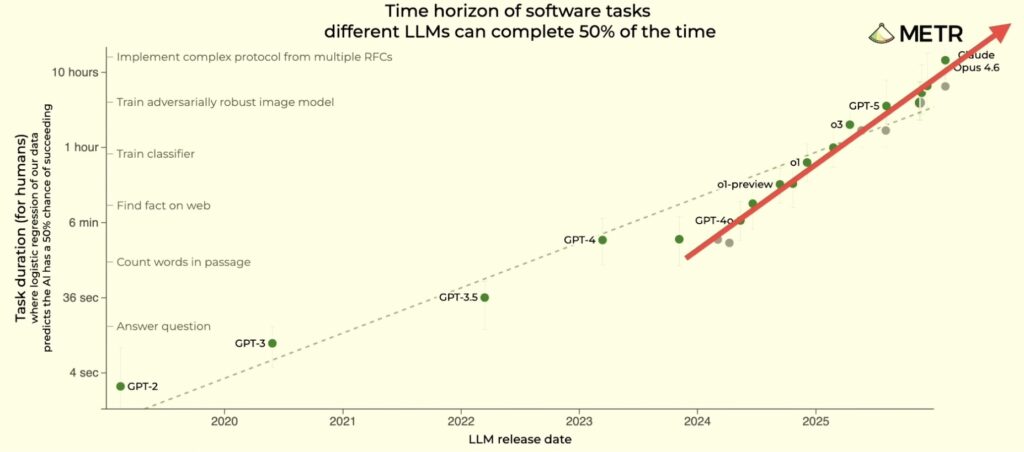

Carlini’s talk signaled an incoming wave for the software industry, driven by the pace at which large language models are improving at vulnerability discovery. To put the leap in context, Carlini explained that he achieved these results using Claude Opus 4.6, a model Anthropic had released less than two months prior to the conference. When he attempted to reproduce the same results on older models, the drop-off was severe. Claude Opus 4.1, released just eight months ago, and Sonnet 4.5, released six months ago, could only uncover a tiny fraction of the vulnerabilities that Opus 4.6 found. The contrast paints a clear picture: AI is no longer just assisting in code review; it is changing how vulnerability research is done, surfacing decades-old bugs faster than human researchers can verify them.