Despite massive tech layoffs and booming investments, the math behind artificial intelligence reveals a surprising truth: right now, human labor is still the cheaper option.

- The AI Cost Illusion: While tech giants are shedding jobs by the tens of thousands, running advanced AI models often remains more expensive than paying human employees because of immense computing and energy demands.

- A Budget-Busting Boom: Big Tech's announced capital expenditures on AI have reached an astonishing $740 billion so far in 2026, blowing through corporate budgets without clear evidence of proportional productivity gains.

- The Tipping Point Is Tomorrow, Not Today: A true shift toward AI replacing human labor depends on a future drop in inference costs, more stable pricing models, and reliability good enough to reduce today's need for human oversight.

Recent headlines from the technology sector paint a picture of a massive labor shift, leading many to believe that the long-anticipated replacement of human workers by artificial intelligence is already well underway. Meta announced last week in an internal memo that it plans to lay off 10% of its workforce (about 8,000 employees) while also scrapping plans to fill 6,000 open positions. The company framed the move as an effort to run more efficiently and to offset new technology investments. Similarly, Microsoft has just offered thousands of its employees a voluntary buyout, the largest workforce reduction initiative in the company's history. Add data from Layoffs.fyi, which has tracked more than 92,000 tech layoffs across nearly 100 companies so far in 2026, a pace that far outstrips last year's total of 120,000, and it is easy to assume that AI is outcompeting humans on the balance sheet. However, a closer look at the economics of the tech industry reveals a stunning paradox: right now, AI is actually costing companies far more than the humans they employ.

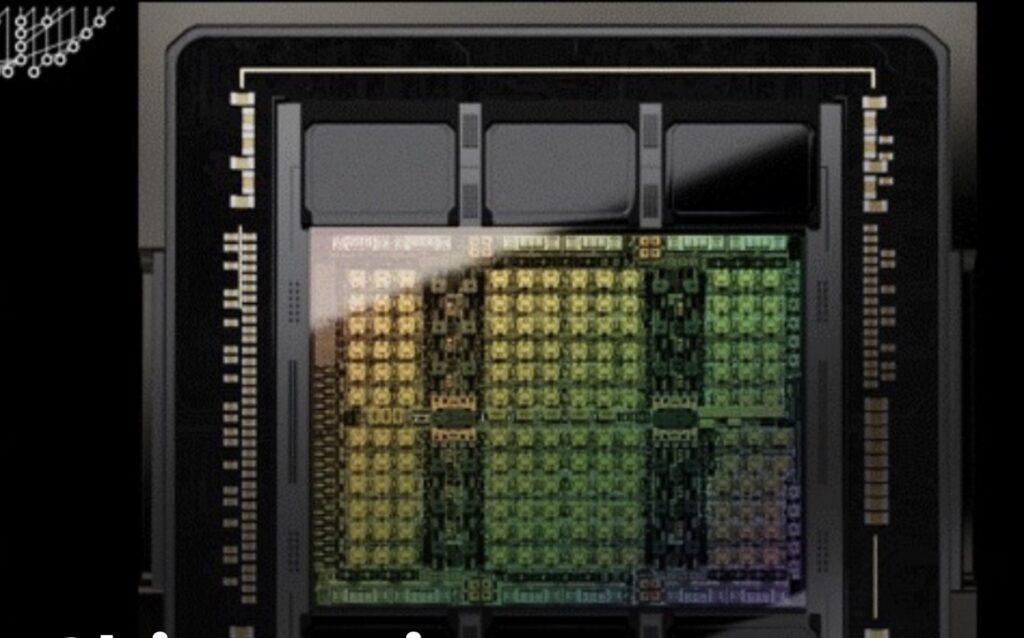

The reality of the server room tells a different story from the boardroom’s futuristic ambitions. “For my team, the cost of compute is far beyond the costs of the employees,” Bryan Catanzaro, vice president of applied deep learning at Nvidia, recently told Axios. Academic research supports this firsthand experience. A 2024 MIT study of the technical requirements for AI models to perform jobs at a human level found that AI automation is economically viable in only 23% of roles where vision is a primary component of the work. For the remaining 77%, it is still cheaper for humans to keep doing the work. The technology is also highly fallible and requires costly human intervention when things go wrong; in one notable instance, an engineer reported that an overactive AI agent wiped out his network and database as a result of “overuse.”

Although the Yale Budget Lab has found no widespread evidence that AI is displacing jobs, and there is little clear evidence that AI is broadly improving productivity, Big Tech is locked in a spending arms race. According to Morgan Stanley, tech firms have announced $740 billion in capital expenditures so far this year, a staggering 69% increase over 2025. Spending on this scale is straining corporate budgets. Praveen Neppalli Naga, Uber’s chief technology officer, recently admitted to The Information that he had to go back to the drawing board because the budget he had set aside for the rideshare giant’s push into AI coding tools, such as Anthropic’s Claude Code, was already exhausted.

This continued avalanche of AI spending, paired with aggressive workforce reductions, exposes a deep discrepancy in the current economics of artificial intelligence. Keith Lee, an AI and finance professor at the Swiss Institute of Artificial Intelligence’s Gordon School of Business, characterizes it as a “short-term mismatch.” According to Lee, AI has remained expensive relative to human labor primarily because intense hardware and energy requirements drive up operating costs for providers. Broader financial projections reflect this burden: McKinsey data suggests AI expenditures could reach $5.2 trillion by 2030, including $1.6 trillion for data centers and $3.3 trillion for IT equipment, and could climb as high as $7.9 trillion if demand accelerates.

Meanwhile, the software side of the equation is also growing more expensive for end users. Spending management firm Tropic noted late last year that fees for AI software had risen by 20% to 37%. AI companies are also grappling with their own financial leaks: flat subscription models often fail to cover the immense operating costs generated by heavy power users. Because of these compounding costs, Lee points out, many firms are beginning to reevaluate artificial intelligence. Instead of viewing it as a clear, cost-saving substitute for human labor, they are treating it as a complementary tool, at least until the underlying cost structures stabilize.

While artificial intelligence carries a premium price tag today, industry analysts are closely watching for signs of a tipping point toward true economic viability. The first major shift will need to be a drastic reduction in computational costs. According to a recent Gartner report, the cost of inference (running a trained model to generate outputs from new inputs) for a large language model with 1 trillion parameters is expected to fall by more than 90% over the next four years. As AI infrastructure improves and the supply of specialized hardware catches up with demand, AI companies are also likely to move away from flat subscriptions toward usage-based pricing.

The future of AI’s economic dominance depends on the technology proving its worth in day-to-day enterprise use. Federal Reserve data indicates that about 18% of companies had adopted AI tools by the end of 2025, a 68% increase in adoption since September of that year. Yet to truly replace human capital, these tools must demonstrate ironclad reliability: fewer hallucinations, a drastically reduced need for human oversight, and seamless integration into existing corporate infrastructure. As Professor Lee succinctly put it, the true revolution will arrive when AI becomes not just cheaper than humans, but “both cheaper and more predictable at scale.”