Ethical Safeguards and Human Control Are Essential, Says the Pontiff

- Pope Francis compares AI to historical tools of both improvement and destruction, emphasizing the dual nature of technological advances.

- He warns that unchecked AI development could exacerbate societal inequalities and dehumanize vulnerable populations.

- The pontiff calls for ethical barriers and proper human control to preserve human dignity and decision-making.

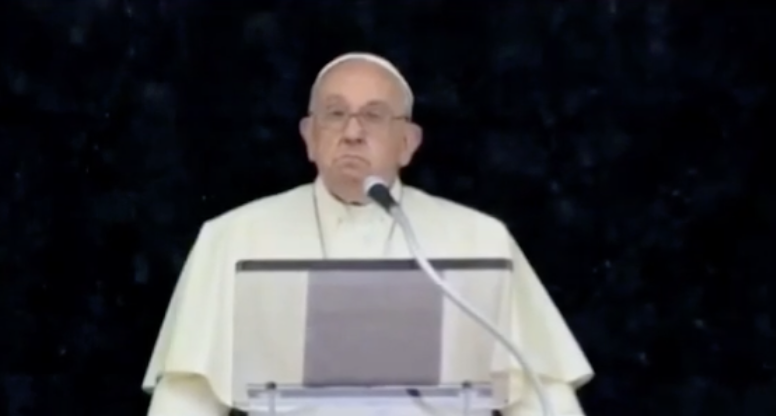

In a historic first, Pope Francis addressed the G-7 summit on Friday, delivering a speech on the ethical implications of artificial intelligence (AI). Speaking to world leaders in Fasano, Italy, the pontiff underscored both the potential benefits and the significant risks of AI technology.

Pope Francis began by highlighting the dual emotions AI evokes in society: excitement for its possibilities and fear of its consequences. He likened AI to primitive flint knives and nuclear energy—technological advancements that have offered both self-improvement and avenues for violence. “The question of artificial intelligence, however, is often perceived as ambiguous: on the one hand, it generates excitement for the possibilities it offers, while on the other, it gives rise to fear for the consequences it foreshadows,” he said.

The Dual Nature of AI

The pope’s comparison of AI to historical tools served as a reminder that technological advancements always come with inherent risks. While AI holds the promise of immense benefits, it also poses a threat of dehumanization, particularly for vulnerable societies. He stressed that without ethical guidelines, the pursuit of AI technology could worsen the “throwaway culture” and marginalize those unable to resist technocratic dominance due to poverty or technological illiteracy.

“Due to its radical freedom, humanity has not infrequently corrupted the purposes of its being, turning into an enemy of itself and of the planet,” Pope Francis warned. “The same fate may befall technological tools.”

Risks to Human Dignity

Pope Francis expressed particular concern about AI’s impact on essential aspects of society, such as children’s education, the criminal justice system, and warfare. He warned that allowing AI to make decisions in these areas could strip away human dignity and the ability to make autonomous decisions.

“We would condemn humanity to a future without hope if we took away people’s ability to make decisions about themselves and their lives, by dooming them to depend on the choices of machines,” he stated. The pontiff emphasized the need for maintaining a space for human control over AI, arguing that human dignity itself depends on it.

Call for Ethical Reform

The pope’s address called for fundamental reform and major renewal in how AI is developed and implemented. He urged a healthy political approach, one that draws on diverse sectors and skills, to oversee this process. “Much needs to change, through fundamental reform and major renewal. Only a healthy politics, involving the most diverse sectors and skills, is capable of overseeing this process,” he said.

Pope Francis has consistently voiced skepticism about AI since it gained widespread attention. In December 2023, he warned that global “technocratic systems” could exploit AI efficiencies without considering the broader impacts on the poor, ultimately sacrificing humanity for efficiency.

Pope Francis’s address to the G-7 leaders serves as a crucial reminder of the ethical considerations that must accompany the development and deployment of AI technologies. His call for safeguards and proper human control aims to ensure that AI enhances human life without compromising dignity or increasing societal inequalities. As AI continues to evolve, the pontiff’s message underscores the importance of a balanced approach that prioritizes ethical standards alongside technological advancement.