Meta: Drawing lessons from cognitive science to build autonomous, self-teaching artificial intelligence

- The MLOps Bottleneck: Unlike biological organisms, current AI models do not learn autonomously; they rely on static, human-driven pipelines and stop learning entirely once deployed.

- The Missing Biological Links: For AI to achieve true autonomy, it must develop three capabilities found across the animal kingdom: active learning (selecting its own data), meta-control (switching learning modes), and meta-cognition (evaluating its own performance).

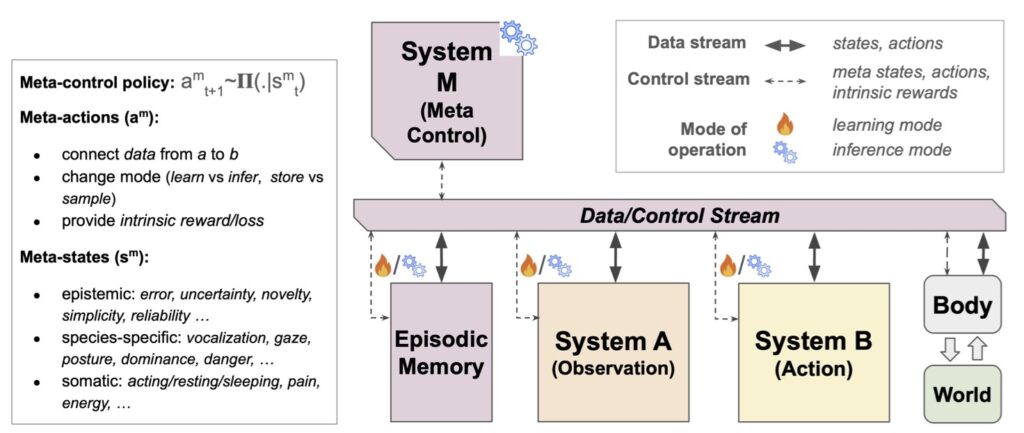

- The A-B-M Architecture: A new framework inspired by cognitive science proposes merging learning from observation (System A) with learning from active behavior (System B), both orchestrated by an internal meta-control signal (System M) that automates the learning process from within.

Both Artificial Intelligence and Cognitive Science were born in the intellectual ferment of the 1950s. In the post-war era, pioneers began weaving together threads of neural modeling, computation, information theory, and control. Initially, their objectives diverged significantly. AI researchers focused on the pragmatic goal of creating intelligent machines, while cognitive scientists sought a foundational, scientific understanding of brains and behavior. Over the decades, the trajectories of these two fields have overlapped with varying degrees of cross-fertilization. Today, the undeniable successes of Deep Learning have ushered in a golden era of cross-disciplinary interaction. As AI models successfully tackle high-level human abilities—such as natural language processing, visual understanding, and complex reasoning—they increasingly incorporate concepts and evaluation methods drawn directly from the cognitive and neural sciences. In return, AI systems are providing cognitive science with sorely needed quantitative theories of processes that can now be rigorously tested against empirical data.

Yet, amidst this highly productive convergence, a glaring paradox remains. Despite the immense power and widespread importance of deep learning, one fundamental component of human intelligence remains frustratingly out of reach for current AI models: the simple ability to learn the way humans do.

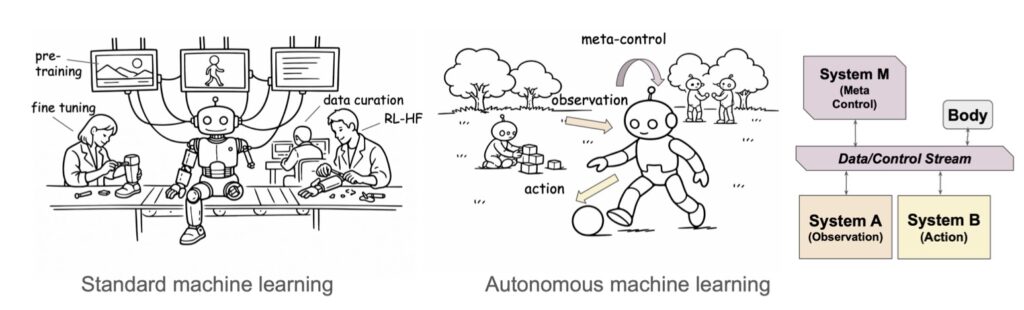

Unlike animals, which constantly adapt to their surroundings, current AI systems do not learn autonomously. Their “learning” is confined to an off-line Machine Learning Operations (MLOps) pipeline. This pipeline depends on large teams of human experts who prepare the data, construct the training recipes, and continuously adjust parameters based on performance metrics. Once an AI model is deployed into the real world, its learning stops. Any subsequent adaptation to specific, real-world use cases falls entirely on the end-user, through clever prompting or computationally expensive fine-tuning. The machine itself is stagnant.

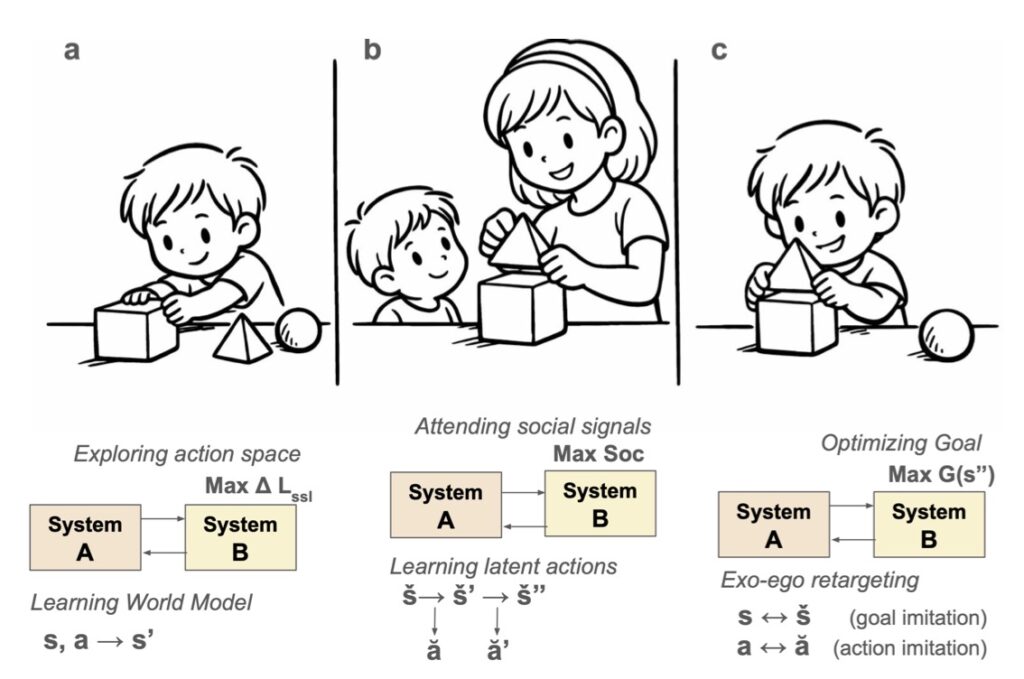

A deeper analysis of this limitation reveals that current AI systems lack three crucial abilities universally found across the animal kingdom. First, they lack active learning—the ability to autonomously seek out and select their own training data based on curiosity or environmental needs. Second, they lack meta-control, which is the flexibility to seamlessly switch between different learning modes depending on the context. Finally, they lack meta-cognition, the vital capacity to sense, evaluate, and reflect upon their own performance and knowledge gaps. Without these traits, AI remains a powerful calculator rather than a truly adaptive intelligence.
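As a rough illustration of the first of these abilities, the sketch below shows one common form of active learning, uncertainty sampling, in which a learner picks the candidate examples it is least sure about. This is an assumed stand-in technique, not a method described in the source; `toy_predict`, `select_most_uncertain`, and the example pool are hypothetical names and values chosen for illustration.

```python
import math

def entropy(probs):
    """Shannon entropy (in nats) of a discrete probability distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def select_most_uncertain(pool, predict, k):
    """Pick the k pool items whose predicted distribution has the highest entropy."""
    ranked = sorted(pool, key=lambda x: entropy(predict(x)), reverse=True)
    return ranked[:k]

def toy_predict(x):
    """Hypothetical stand-in model: a sigmoid that is unsure near x = 0."""
    p = 1.0 / (1.0 + math.exp(-x))
    return [p, 1.0 - p]

# Candidate inputs the learner could ask to be trained on (illustrative values).
pool = [-4.0, -0.1, 0.2, 3.0, 5.0]

# The learner selects its own training data: the two inputs closest to its
# decision boundary, where its predictions are least certain.
chosen = select_most_uncertain(pool, toy_predict, k=2)
print(chosen)
```

The entropy score doubles as a crude meta-cognitive signal here, since it is the model's own estimate of where its knowledge is weakest.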

To unlock these missing biological abilities and build machines that actually learn, the AI community must overcome two significant roadblocks. First, we must merge AI techniques from currently isolated paradigms, specifically bridging the gap between self-supervised learning and reinforcement learning. Second, we must build a unified cognitive architecture that internalizes and automates the human-driven MLOps pipeline.

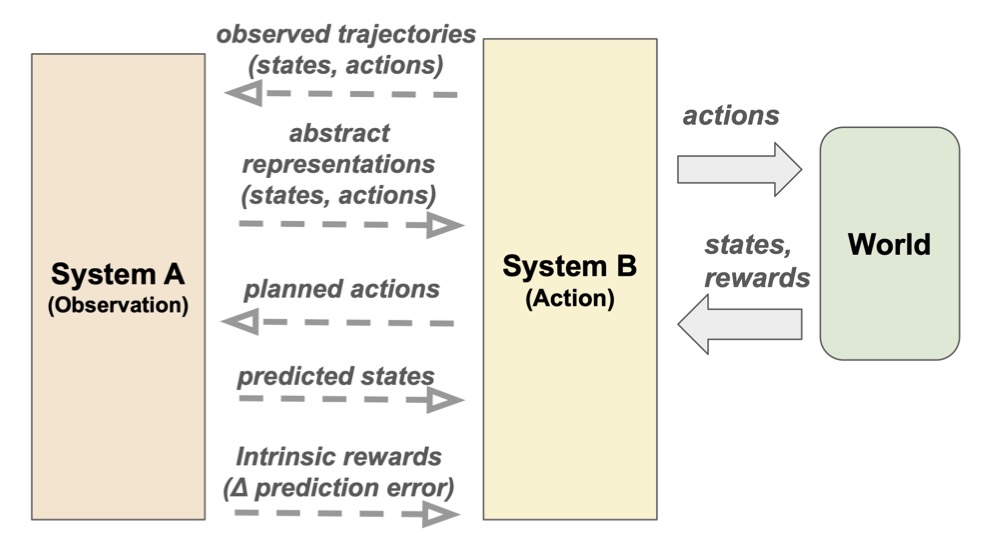

These requirements motivate the A-B-M architecture, a framework deeply inspired by human and animal cognition. The architecture integrates System A, which learns through passive observation, with System B, which learns through active behavior and interaction with the environment. Crucially, the two systems are orchestrated by System M, an internally generated meta-control signal that flexibly switches between observational and active learning modes based on the model’s self-assessed needs. By incorporating internal data routing, orchestration, and sensing components, the A-B-M model creates a self-sustaining learning loop.
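The control flow described above can be sketched as a toy loop. This is a minimal illustration under stated assumptions, not the architecture's actual implementation: the scalar "world", the update rules, the 0.5 threshold, and the rule "high self-assessed error means act" are all hypothetical choices made only to show how a meta-controller might route between the two learning modes.

```python
from dataclasses import dataclass, field

@dataclass
class MetaController:
    """System M (hypothetical): chooses a learning mode from self-assessed error."""
    threshold: float = 0.5                      # assumed switch point
    history: list = field(default_factory=list)

    def choose_mode(self, error: float) -> str:
        # Assumption: large error -> gather data by acting; small -> keep observing.
        mode = "act" if error > self.threshold else "observe"
        self.history.append(mode)
        return mode

def system_a_update(estimate, observation, lr=0.5):
    """System A (toy): learn from a passively received observation."""
    return estimate + lr * (observation - estimate)

def system_b_update(estimate, feedback, lr=0.5):
    """System B (toy): learn from the outcome of the agent's own action."""
    return estimate + lr * feedback

def run_loop(observations, probe, controller, estimate=0.0):
    for obs in observations:
        error = abs(obs - estimate)             # internal "sensing" signal
        if controller.choose_mode(error) == "observe":
            estimate = system_a_update(estimate, obs)
        else:
            estimate = system_b_update(estimate, probe(estimate))
    return estimate

HIDDEN_VALUE = 10.0                             # toy environment state

def probe(guess):
    """Acting on the toy environment returns signed feedback on the guess."""
    return HIDDEN_VALUE - guess

controller = MetaController()
final_estimate = run_loop([HIDDEN_VALUE] * 6, probe, controller)
print(final_estimate, controller.history)
```

In this toy run the controller stays in active mode while the self-assessed error is large and falls back to observation once the error drops below the threshold, with no human adjusting the schedule from outside the loop.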

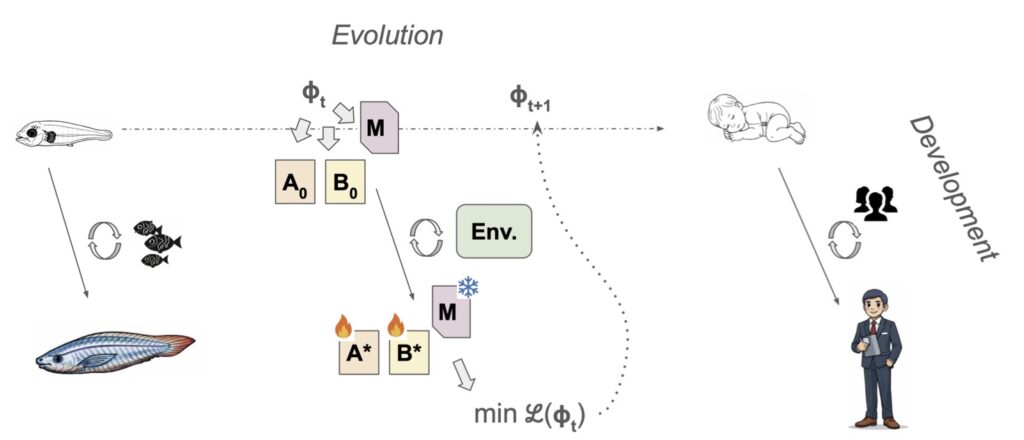

Realizing this vision requires a shift in how we train these models. We must build and refine these A, B, and M components jointly, utilizing evolutionary and developmental training schemes within complex, simulated environments. By taking inspiration from how biological organisms have adapted to dynamic, real-world environments across evolutionary timescales, we can finally bridge the gap between static machine learning and true, autonomous artificial intelligence.