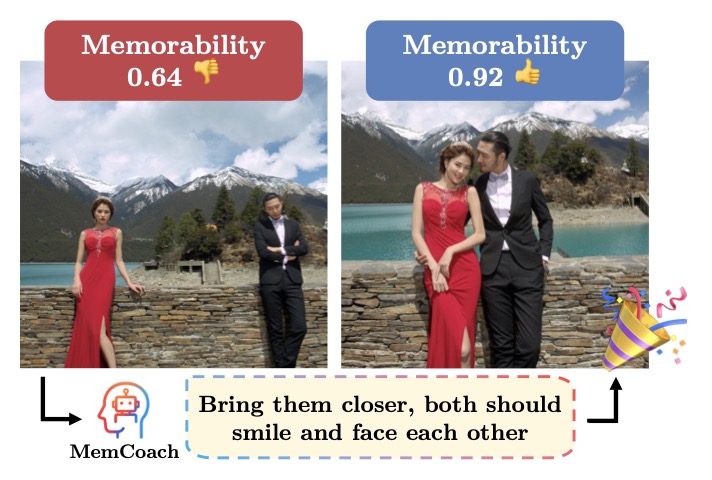

Moving beyond passive scoring, new research introduces MemCoach—an AI assistant that provides real-time, actionable advice to make your pictures visually striking and deeply memorable.

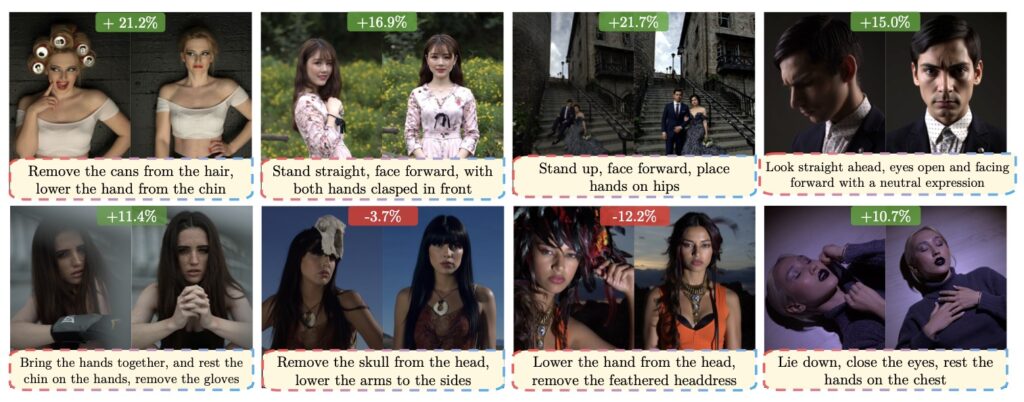

- A Paradigm Shift in Photography: The new Memorability Feedback (MemFeed) task moves AI from just predicting if an image is memorable to actually coaching users with natural-language tips at the moment of capture.

- Training-Free AI Coaching: MemCoach uses a novel teacher-student activation steering strategy to guide Multimodal Large Language Models (MLLMs), producing actionable feedback without needing intensive retraining.

- Rigorous Testing with MemBench: A newly released benchmark, MemBench, shows that this method yields consistent improvements across several open-source models, opening the door for future interactive visual guidance systems.

For years, computer vision has treated “image memorability” (the likelihood that a photo will stick in a viewer’s mind) as a passive prediction task. AI models would analyze a picture and output a single scalar score, or generative tools would alter the image after the fact to boost its impact. Neither paradigm helps the person behind the lens. When you are about to snap a photo, a score alone tells you nothing about what to change; what you need is concrete advice on how to compose the shot better.

To bridge this gap, researchers have formalized a groundbreaking new task called Memorability Feedback (MemFeed). Instead of passively judging, an automated model is now tasked with providing actionable, human-interpretable guidance—like “emphasize the facial expression” or “bring the subject forward”—with the specific goal of enhancing an image’s future recall.

Introducing MemCoach: Your Real-Time Photography Mentor

Stepping up to this new challenge is MemCoach, the first approach specifically designed to provide concrete, natural-language suggestions to improve memorability. Built on Multimodal Large Language Models (MLLMs), MemCoach represents a conceptual shift: it treats memorability not as a static property to be estimated but as a creative skill that can be taught through interactive visual coaching.

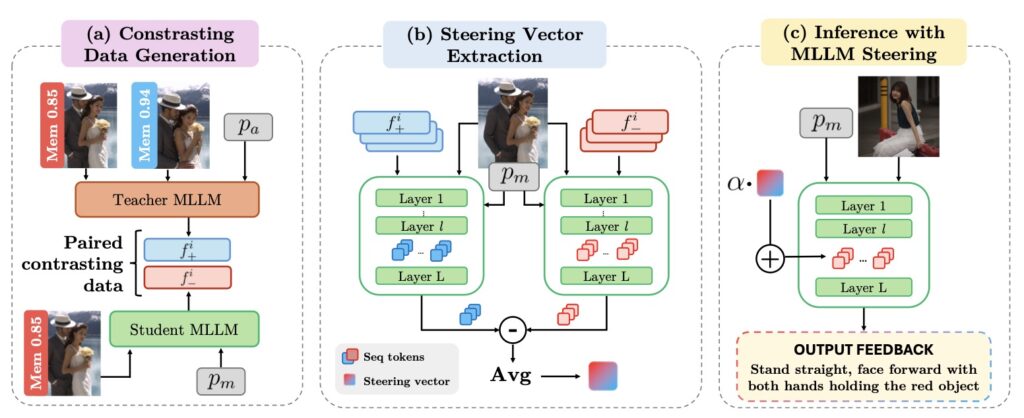

What makes MemCoach particularly powerful is its efficiency. It is entirely training-free: the model’s weights are never fine-tuned or retrained. Instead, it employs a contrastive activation steering strategy that injects memorability-aware behavior directly into the model’s internal activations at inference time.

The Three Stages of Activation Steering

MemCoach achieves this human-aligned coaching by distilling knowledge from a highly capable “teacher” model into a more standard “student” model. The framework operates in three main stages:

- Contrasting Data Generation: The system builds paired samples by looking at the same scene through two different lenses. It captures the insightful, memorability-aware guidance of an advanced teacher MLLM (which progresses along least-to-most memorable samples) and pairs it with the neutral, standard responses of a student MLLM.

- Steering Vector Extraction: The system then calculates the activation differences between the teacher’s memorability-aware feedback and the student’s neutral feedback. These differences are averaged to create a “memorability steering vector,” a single direction in activation space that summarizes the shift needed to produce better suggestions.

- Inference with MLLM Steering: During actual use, the student model’s internal activations are shifted using this memorability steering vector. With no additional training, the steered student produces significantly improved, memorability-oriented feedback.
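
The last two stages can be illustrated with a toy sketch. This is not the authors' implementation: the activations here are tiny hand-written lists rather than real MLLM hidden states, and the function names `steering_vector` and `steer`, along with the scaling factor `alpha`, are assumptions introduced only for illustration. The article does not say which layer is steered or how strongly.

```python
from statistics import fmean


def steering_vector(teacher_acts, student_acts):
    """Mean of per-pair activation differences (teacher minus student)."""
    if len(teacher_acts) != len(student_acts) or not teacher_acts:
        raise ValueError("need equally sized, non-empty activation lists")
    # One difference vector per contrastive pair.
    diffs = [
        [t - s for t, s in zip(t_vec, s_vec)]
        for t_vec, s_vec in zip(teacher_acts, student_acts)
    ]
    # Average each hidden dimension across all pairs.
    return [fmean(column) for column in zip(*diffs)]


def steer(hidden, vector, alpha=1.0):
    """Shift one hidden-state vector along the steering direction.

    alpha is a hypothetical strength parameter, not taken from the article.
    """
    return [h + alpha * v for h, v in zip(hidden, vector)]


# Toy 3-dimensional activations for two contrastive pairs.
teacher = [[1.0, 2.0, 3.0], [3.0, 2.0, 1.0]]
student = [[0.0, 1.0, 3.0], [1.0, 1.0, 1.0]]

v = steering_vector(teacher, student)
steered = steer([0.5, 0.5, 0.5], v, alpha=2.0)
print(v)        # [1.5, 1.0, 0.0]
print(steered)  # [3.5, 2.5, 0.5]
```

In a real system the same addition would presumably be applied to the student's hidden states during generation, for example through a forward hook on a chosen layer, but that detail is an assumption here.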

Proving the Concept: MemBench and MLLM Testing

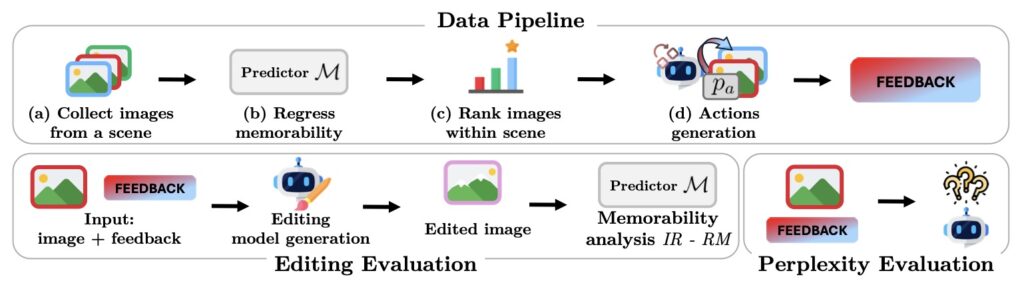

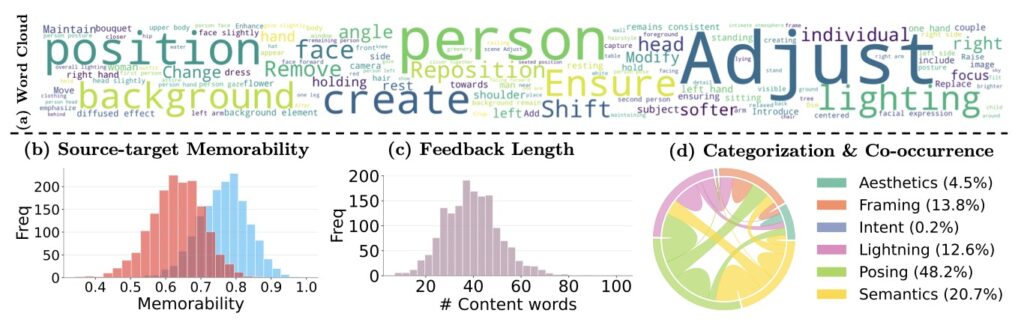

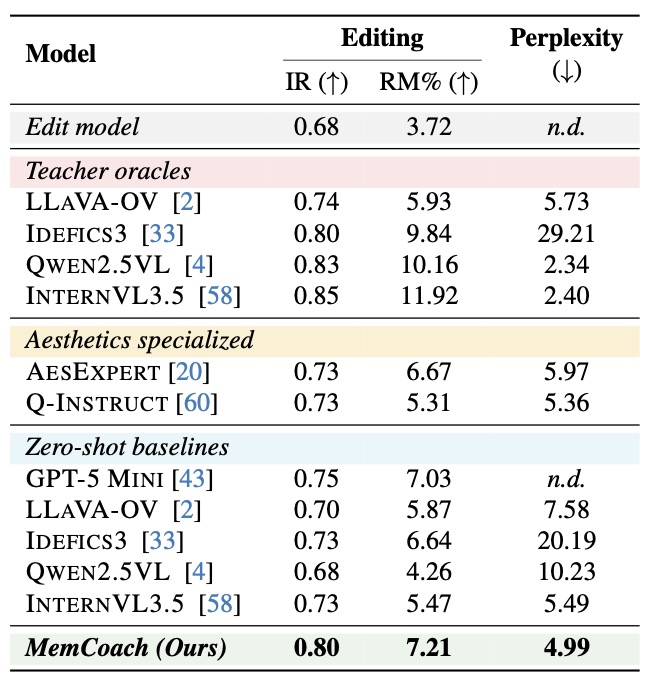

To foster future research and systematically evaluate this novel task, the researchers introduced MemBench, a new benchmark featuring sequence-aligned photoshoots complete with annotated memorability scores. Alongside the dataset, they established a rigorous new evaluation protocol that combines editing-based memorability improvement metrics with perplexity-based feedback alignment to truly assess the quality of the AI’s advice.
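
To make the two ingredients of that protocol concrete, here is a minimal sketch under stated assumptions. The article does not specify how the metrics are computed or combined, so `perplexity` below is simply the standard definition applied to per-token log-probabilities, and `improvement_rate` is a hypothetical stand-in for an editing-based metric: the fraction of images whose predicted memorability score rises after the suggested edit is applied.

```python
import math


def perplexity(token_logprobs):
    """Standard perplexity: exp of the negative mean natural-log probability."""
    if not token_logprobs:
        raise ValueError("need at least one token log-probability")
    return math.exp(-sum(token_logprobs) / len(token_logprobs))


def improvement_rate(before, after):
    """Fraction of images whose memorability score increased after editing.

    Hypothetical metric for illustration; not the benchmark's exact definition.
    """
    if len(before) != len(after) or not before:
        raise ValueError("need equally sized, non-empty score lists")
    wins = sum(1 for b, a in zip(before, after) if a > b)
    return wins / len(before)


# A model that assigns probability 0.5 to every token has perplexity 2.
ppl = perplexity([math.log(0.5)] * 4)

# Made-up scores for four images before and after applying feedback.
rate = improvement_rate([0.5, 0.6, 0.7, 0.8], [0.6, 0.5, 0.9, 0.8])
print(round(ppl, 6), rate)  # 2.0 0.5
```

Lower perplexity on reference feedback would indicate that the model finds human-style advice more likely, while a higher improvement rate would indicate that its advice actually helps; how MemBench weighs the two is not stated in this article.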

The experimental validation of MemCoach is highly promising. The researchers tested the framework across multiple open-source MLLMs—including InternVL3.5, Qwen2.5-VL, Idefics3, and LLaVA-OneVision. Across the board, steering these models toward memorability-aware activations yielded consistently improved performance and more human-aligned feedback than baseline zero-shot models, all while requiring minimal data.

MemCoach and the MemFeed task offer more than just a way to take better vacation photos. They suggest that activation steering is a highly efficient route to endow AI models with nuanced perceptual skills, paving the way for a future filled with interactive, explainable visual guidance systems that actively help us create.