Anthropic says Claude Mythos Preview can find serious software vulnerabilities at frontier scale. Project Glasswing is the attempt to keep that capability on the defensive side first.

- Anthropic announced Project Glasswing on April 7, 2026, with partners including AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks.

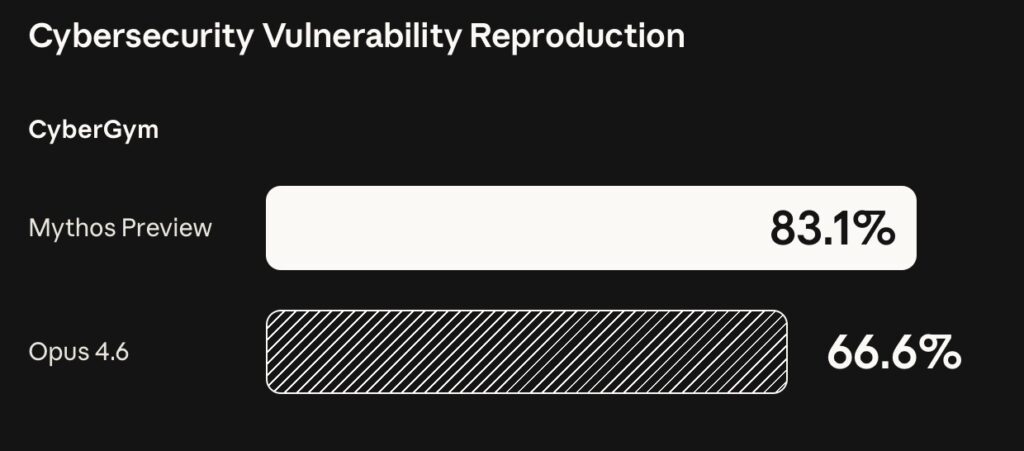

- The company says Claude Mythos Preview, an unreleased frontier model, found thousands of zero-day vulnerabilities and scored 83.1% on CyberGym compared with 66.6% for Claude Opus 4.6.

- Caution is central to the story: Mythos Preview is not generally available, the published materials give no public timeline for open-source maintainer access, and many vulnerability claims cannot be independently verified until details are disclosed after patches.

Anthropic’s Project Glasswing is built around a hard cybersecurity paradox: the same AI capability that can find vulnerabilities at unusual speed could make attackers more dangerous if it spreads without controls. Anthropic’s answer is to give selected defenders early access to Claude Mythos Preview, an unreleased frontier model it says can find and exploit software vulnerabilities at a level that surpasses all but the most skilled humans.

The April 7, 2026 announcement names a broad coalition: Amazon Web Services, Anthropic, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks. Anthropic says those partners will use Mythos Preview for defensive security work, while more than 40 additional organizations that build or maintain critical infrastructure will receive access to scan and secure first-party and open-source systems.

The story is bigger than one model launch. It is a signal that frontier AI has crossed from code assistance into vulnerability discovery at a level that security teams, enterprises, maintainers, and governments cannot ignore.

What Project Glasswing Is

Project Glasswing is Anthropic’s defensive program for applying Claude Mythos Preview to critical software security. Anthropic says the model will be used by launch partners to find and fix vulnerabilities or weaknesses in foundational systems, with expected work across local vulnerability detection, black-box binary testing, endpoint security, and penetration testing.

Anthropic is committing up to $100 million in usage credits across the initiative. It also says it donated $2.5 million to Alpha-Omega and OpenSSF through the Linux Foundation, plus $1.5 million to the Apache Software Foundation, for a total of $4 million in direct donations to open-source security organizations.

That open-source piece matters because modern software stacks depend heavily on maintainers who often lack large security teams. The Linux Foundation’s supporting post frames Project Glasswing as a way to give maintainers free access to advanced AI cybersecurity tools, reducing the imbalance between well-funded attackers and under-resourced open-source projects.

Why Claude Mythos Preview Is Different

Anthropic describes Claude Mythos Preview as a general-purpose, unreleased frontier model with coding and reasoning capabilities strong enough to reshape cybersecurity. The company says Mythos Preview has already found thousands of high-severity vulnerabilities, including vulnerabilities in every major operating system and web browser.

Anthropic gives three examples that have been patched: a 27-year-old OpenBSD vulnerability, a 16-year-old FFmpeg vulnerability, and a Linux kernel exploit chain that could escalate ordinary user access to full machine control. Anthropic says many other vulnerability details will be revealed after fixes are in place, with cryptographic hashes provided first.
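
The hash-first approach is a form of commitment: publish a digest now, reveal the underlying report after the fix ships, and let anyone confirm the two match. Anthropic has not described its exact scheme in the material covered here, so the following is only a minimal sketch of the general idea. The choice of SHA-256, the random nonce, the function names, and the sample report text are all assumptions made for illustration.

```python
import hashlib
import hmac
import secrets


def commit(report: bytes, nonce: bytes) -> str:
    """Return a SHA-256 digest binding the publisher to a report.

    The nonce is an assumption of this sketch: it keeps short or
    guessable reports from being recovered by brute force.
    """
    return hashlib.sha256(nonce + report).hexdigest()


def verify(commitment: str, report: bytes, nonce: bytes) -> bool:
    """Check a later-revealed report and nonce against the earlier digest."""
    return hmac.compare_digest(commitment, commit(report, nonce))


# Hypothetical report text, not a real advisory.
report = b"Example advisory: out-of-bounds read in a hypothetical parser"
nonce = secrets.token_bytes(32)

# Step 1: publish only the digest while the bug is unpatched.
published_hash = commit(report, nonce)

# Step 2: after the patch, reveal report and nonce so anyone can check them.
matches = verify(published_hash, report, nonce)
```

A scheme like this proves that a report existed in its final form when the digest was published. It does not prove that the vulnerability is real or severe, which is why post-patch disclosure still matters.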

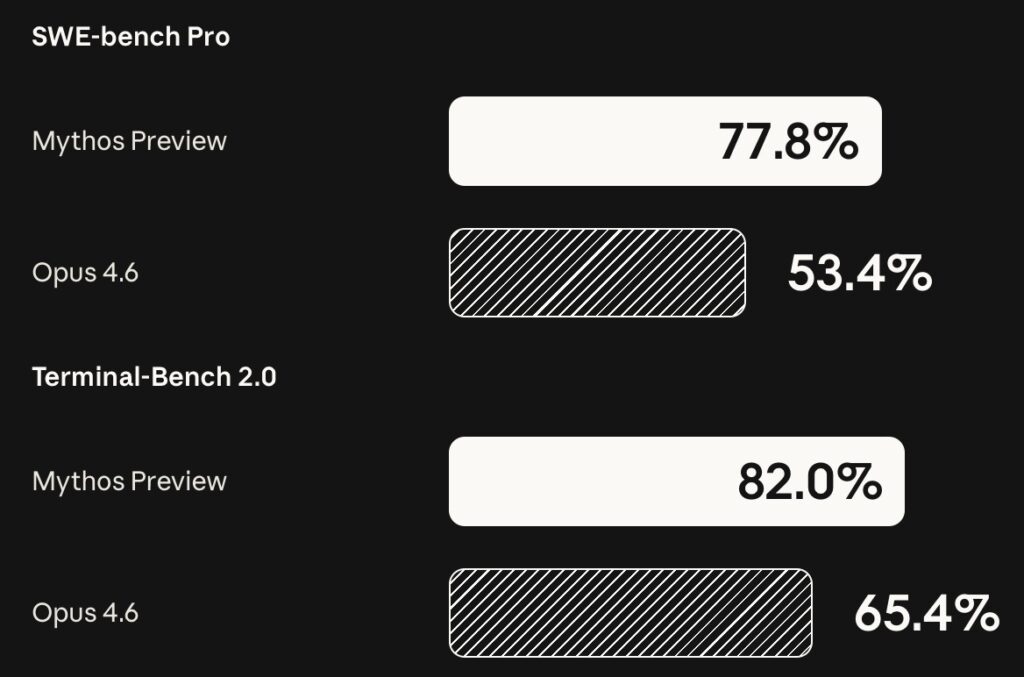

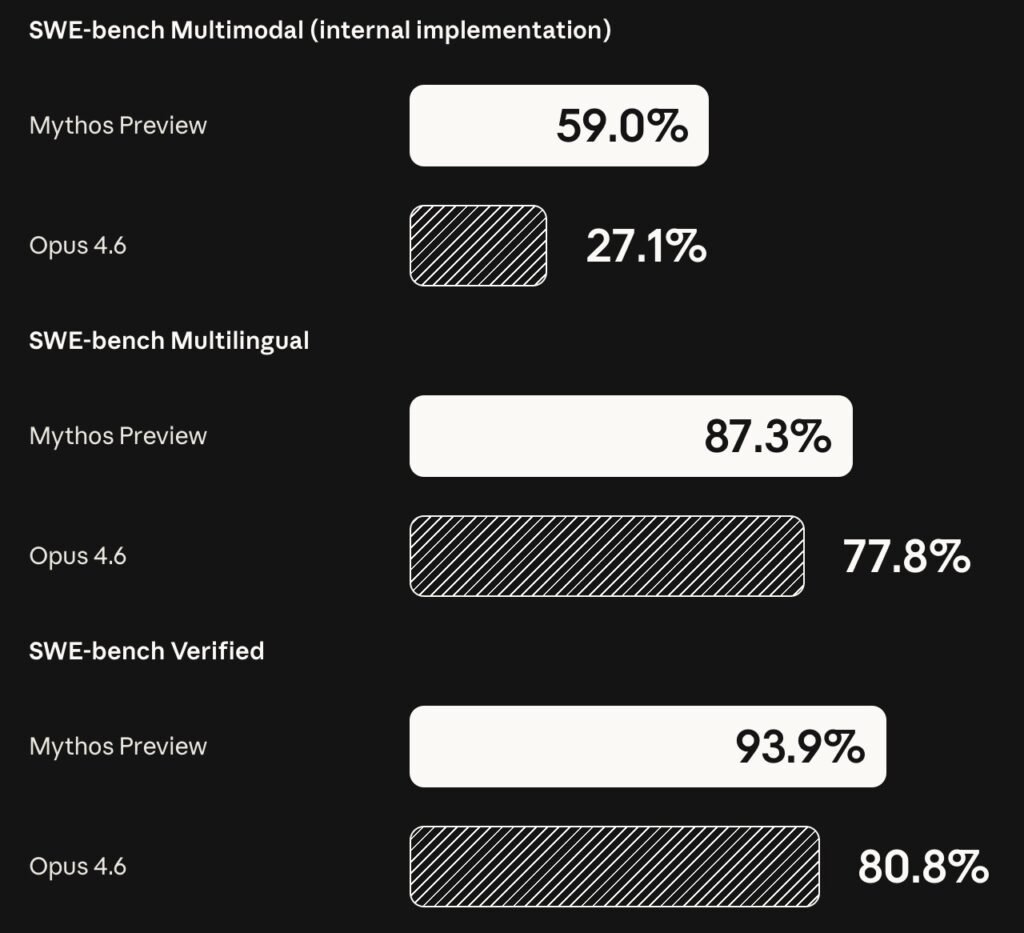

The benchmark gap is also part of the pitch. Anthropic says Mythos Preview scored 83.1% on CyberGym vulnerability reproduction, compared with 66.6% for Claude Opus 4.6. On SWE-bench Verified, Anthropic reports 93.9% for Mythos Preview versus 80.8% for Opus 4.6. Those figures support Anthropic’s claim that stronger coding models are becoming stronger cyber models.

The Defensive Coalition

AWS says it has applied Claude Mythos Preview to critical AWS codebases that already undergo continuous AI-powered security reviews, and that the model helped identify additional opportunities to strengthen code. AWS also says Mythos Preview is available in gated research preview through Amazon Bedrock, with access limited to an initial allow-list of organizations.

Microsoft says early access to Claude Mythos Preview lets it evaluate emerging capabilities, identify and mitigate risk, and strengthen protections for customers. Microsoft also says it evaluated an early snapshot using CTI-REALM, its open-source benchmark for real-world detection engineering tasks, and saw substantial improvements relative to prior models.

CrowdStrike frames the issue around deployment governance. Its supporting post argues that frontier models expand both the attack surface and the defender’s advantage, then places CrowdStrike’s role in visibility, data protection, runtime protection, and enforcement across enterprise endpoints.

The Linux Foundation focuses on maintainers. Its post argues that open source has become the world’s biggest target because it is the dominant form of software consumed by enterprises, while maintainers face more pull requests, bug reports, security pressure, and supply-chain attacks. In that context, AI that can find and patch vulnerabilities could reduce a growing security burden.

The Release Strategy Is Cautious

Anthropic explicitly says it does not plan to make Claude Mythos Preview generally available. That caveat is not a footnote. It is the central safety choice. The company says its eventual goal is to let users safely deploy Mythos-class models at scale, but first it wants safeguards that detect and block the model’s most dangerous outputs.

Anthropic says it plans to launch new safeguards with an upcoming Claude Opus model, not with Mythos Preview itself. That distinction matters: a future safeguard rollout is not the same thing as public access to Mythos-class capabilities.

For security professionals whose legitimate work is affected by those safeguards, Anthropic says they will be able to apply to an upcoming Cyber Verification Program. The company also says Mythos Preview will remain available to Project Glasswing participants through the Claude API, Amazon Bedrock, Google Cloud’s Vertex AI, and Microsoft Foundry after the usage-credit period, priced at $25 per million input tokens and $125 per million output tokens.
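
To make those prices concrete, here is a rough cost estimate as a minimal sketch. It uses only the two published per-million-token rates. The function name and the token counts in the example are hypothetical, and the sketch ignores any discounts, caching, or credits that may apply in practice.

```python
from decimal import Decimal

# Rates quoted in the announcement, in US dollars per million tokens.
INPUT_USD_PER_MILLION = Decimal("25")
OUTPUT_USD_PER_MILLION = Decimal("125")
MILLION = Decimal(1_000_000)


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> Decimal:
    """Estimate the cost of a workload from its input and output token counts."""
    input_cost = Decimal(input_tokens) * INPUT_USD_PER_MILLION
    output_cost = Decimal(output_tokens) * OUTPUT_USD_PER_MILLION
    return (input_cost + output_cost) / MILLION


# Hypothetical scan: 2,000,000 input tokens read, 200,000 output tokens written.
scan_cost = estimate_cost_usd(2_000_000, 200_000)
```

In this example the input side costs $50 and the output side $25, for $75 in total. Because output tokens cost five times as much as input tokens, workloads that generate long reports or patches will be priced very differently from workloads that mostly read code.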

The Open-Source Question

Open-source maintainers are a major part of the story, but the available sources call for restraint. The Linux Foundation says Project Glasswing is designed to make advanced AI cybersecurity tooling accessible to maintainers for free. Anthropic also says maintainers interested in access can apply through its Claude for Open Source program.

What the published materials do not make clear is timing. They give no public timeline for broad open-source maintainer access, and no evidence that Mythos Preview will become generally available. That means the safe reading is narrower: selected maintainers and critical organizations may get access through Anthropic-controlled channels, while wider deployment depends on future safeguards, programs, and eligibility decisions.

Still, the strategic logic is clear. If attackers can use AI to find vulnerabilities faster, maintainers need defensive tools that can keep up. Otherwise, the gap will keep widening between the people who depend on open source and the people left to secure it.

What This Does Not Prove Yet

Project Glasswing is an important signal, but not every claim has equal evidentiary weight. Anthropic has disclosed examples of patched vulnerabilities, but the broader claim that Mythos Preview found thousands of zero-days cannot be independently verified from the published materials. That will remain the case until more vulnerability details are disclosed after patches.

Partner statements also reflect each company’s own positioning. AWS emphasizes enterprise deployment controls through Bedrock. Microsoft emphasizes vulnerability response and developer tooling. CrowdStrike emphasizes runtime governance and endpoint visibility. The Linux Foundation emphasizes maintainer access and open-source security. Together, they show a serious ecosystem response, but they do not replace independent security review of each vulnerability claim.

This is why the paradox matters. Frontier AI could compress the time between vulnerability discovery and exploitation. It could also compress the time between discovery and patching. Which side benefits more depends on access controls, disclosure discipline, governance, and whether defenders can deploy these tools before attackers normalize similar capabilities.

Why Enterprises Should Pay Attention

For enterprise buyers and technical leaders, the lesson is not simply that Anthropic has a powerful private model. The practical lesson is that vulnerability discovery is becoming more automated, more scalable, and less limited by scarce human expertise. Security programs built around slow, periodic reviews will struggle if attackers and defenders both gain faster AI tools.

That connects directly to Neuronad’s broader coverage of Anthropic’s push for more Claude compute and Claude’s move into high-stakes enterprise workflows. As models become more capable, their security implications become more strategic, not less.

It also fits the wider AI security conversation seen in government AI security efforts and earlier coverage of industry coalitions around AI security. Project Glasswing is more concrete than many safety pledges because it combines a specific model, named partners, credits, donations, and a defensive deployment plan.

Bottom Line

Project Glasswing is a defensive bet on a dangerous capability. Anthropic is saying, in effect, that vulnerability-finding AI is coming whether or not the industry is ready. Its response is to put Claude Mythos Preview in the hands of selected defenders, critical infrastructure companies, and open-source security organizations before similar capabilities become widely available elsewhere.

The promise is real: faster discovery, faster triage, better patches, and a chance to protect critical software before attackers scale. The uncertainty is also real: Mythos Preview is not generally available, access paths remain controlled, and many vulnerability claims will stay unverified outside Anthropic and its partners until patches ship and details are disclosed.

That makes Project Glasswing one of the clearest signs yet that frontier AI safety is no longer only about model behavior in a lab. It is about who gets the most powerful capabilities first, what they are allowed to do with them, and whether defenders can turn the next wave of AI into a shield before attackers turn it into a weapon.